Source: http://planet.mozilla.org/.

This journal is generated from the public RSS feed at http://planet.mozilla.org/rss20.xml and is updated as that feed is updated. It may not match the content of the original page. The syndication was created automatically at the request of readers of this RSS feed.

Daniel Stenberg: BearSSL is curl’s 14th TLS backend

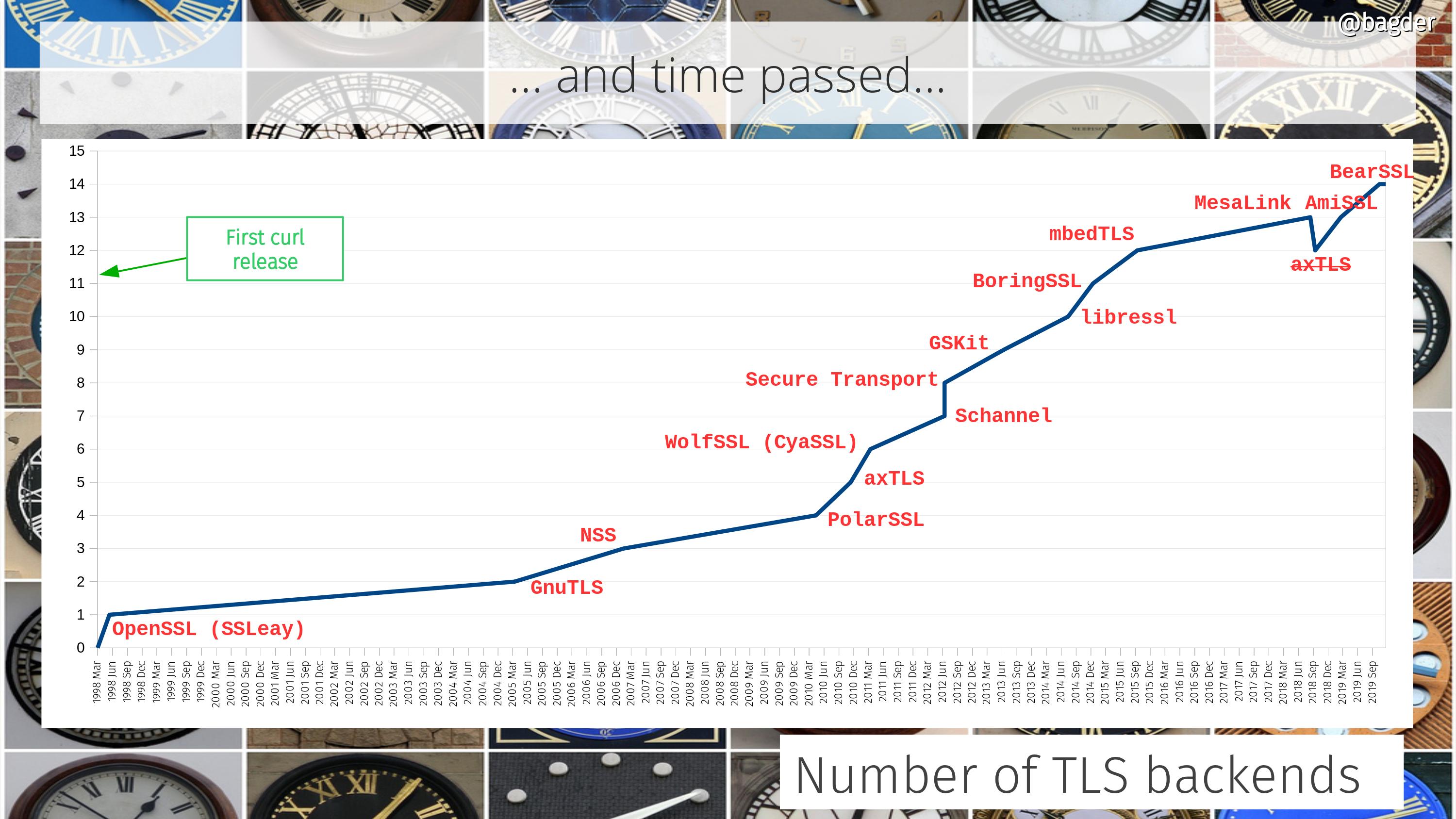

curl supports more TLS libraries than any other software I know of. The current count stops at 14 different ones that can be used to power curl’s TLS-based protocols (HTTPS primarily, but also FTPS, SMTPS, POP3S, IMAPS and so on).

The beginning

The very first curl release didn’t have any TLS support, but already in June 1998 we shipped the first version that supported HTTPS. Back in those days the protocol was still really SSL. The library we used then was called SSLeay. (No, I never understood how that’s supposed to be pronounced)

The SSLeay library became OpenSSL very soon after but the API was brought along so curl supported it from the start.

More than one

In the spring of 2005 we merged the first support for building curl with a different TLS library. It was GnuTLS, which comes under a different license than OpenSSL and had a slightly different feature set. The race had begun.

BearSSL

A short while ago and in time to get shipped in the coming 7.68.0 release (set to ship on January 8th 2020), the 14th TLS backend was merged into the curl source tree in the shape of support for BearSSL. BearSSL is a TLS library aimed at smaller devices and is perhaps lacking a bit in features (like no TLS 1.3 for example) but has still been requested by users in the past.

Multi-SSL

Since September 2017, you can even build libcurl to support more than one TLS library in the same build. When built that way, users can select which TLS backend curl should use at each start-up. This feature is used and appreciated by, for example, git for Windows.

Time line

Below is an attempt to visualize how curl has grown in this area. Number of supported TLS backends over time, from the first curl release until today. The image comes from a slide I intend to use in a future curl presentation. A notable detail on this graph is the removal of axTLS support in late 2018 (removed in 7.63.0). PolarSSL is targeted to meet the same destiny in February 2020 since it gets no updates anymore and has in practice already been replaced by mbedTLS.

QUIC and TLS

If you’ve heard me talk about HTTP/3 (h3) and QUIC (like my talk at Full Stack Fest 2019), you already know that QUIC needs new APIs from the TLS libraries.

For h3 support to become reality in curl shipped in distros etc, the TLS library curl is set to use needs to provide a QUIC compatible API and the QUIC/h3 library curl uses then needs to support that.

It is likely that some TLS libraries are going to be fast with providing such APIs and some are going to be (very) slow. Their particular individual abilities combined with the desire to ship curl with h3 support is likely going to affect what TLS library you will see used by curl in your distro, and what TLS library you will build your own curl builds to use in the future.

Credits

The recently added BearSSL backend was written by Michael Forney. Top image by LEEROY Agency from Pixabay

https://daniel.haxx.se/blog/2019/12/11/bearssl-is-curls-14th-tls-backend/

Karl Dubost: Week notes - 2019 w49 - worklog - The Weak Notes

A week with a bad cold makes it more difficult to write week notes. So here are my weak notes. Everything seems heavier to type, to push.

This last week-end I was at JSConf JP. I wrote down some notes about it.

The week starts with two days of fulltime diagnosis (Monday, Tuesday). Let's get to it: 69 open bugs for Gecko. We try to distribute our work across the team so we are sure that at least someone is on duty for each day of the week. When we have finished our shift, we can add ourselves for more days. That doesn't prevent us from working on bugs the rest of the week. Some of the bugs take longer.

Webcompat bugs

Some of the weeks during diagnosis, a lot of bugs can't be reproduced. There's always a chance we are missing out some critical step to reproduce the issue. But without being able to reproduce, it is also very hard to diagnose. I end up sometimes closing them knowing that there might be a real bug, but that it would resurface again if it's a really common bug. Handling priorities, I guess.

- strange issue involving `overflow`, `flex` and `input` element minimum size.

- we see more and more bugs related to the async change for history navigation.

- yet another Fastclick issue. Just remove it from your projects.

- Certificate errors are on the rise on Firefox Nightly. The browser vendors are planning to remove TLS 1.0 and 1.1 in March 2020. If your site is under https and an old version of TLS, update your certificate ASAP. If your site is under http, you are lucky. No troubles.

- Not very practical to compute your tax when the number disappears as soon as you enter in another form field.

- If you know someone working on the design of Yummly, please tell them about this bug.

- Issue with file uploader on Linkedin.

Python virtualenv required!

I didn't know about export PIP_REQUIRE_VIRTUALENV=true. This is quite cool.

After saving this change and sourcing the ~/.bashrc file with source ~/.bashrc, pip will no longer let you install packages if you are not in a virtual environment. If you try to use pip install outside of a virtual environment pip will gently remind you that an activated virtual environment is needed to install packages.

I haven't installed anything globally for the last couple of years. If I really want to install something, I always use: pip install --user project_name, but I could probably go a tad further by doing the virtualenv requirements. It's easy.

mkdir pet_project

cd pet_project

python -m venv env

source env/bin/activate

Thoughts

A complex system that works is invariably found to have evolved from a simple system that worked. A complex system designed from scratch never works and cannot be patched up to make it work. You have to start over with a working simple system. — Gall's law

- Working with a nasty cold makes it more difficult to take interesting notes.

- How to test Amazon S3 without an Amazon account? This is the thing bothering me with all these external services. There should be a way to have dumb servers which mock the API responses without providing the actual service. In that way, people could figure out if they really want to use this service for their own code.

Otsukare!

Nicholas Nethercote: How to speed up the Rust compiler one last time in 2019

I last wrote in October about my work on speeding up the Rust compiler. With the year’s end approaching, it’s time for an update.

Doc comments

#65750: Normal comments are stripped out during parsing and not represented in the AST. But doc comments have semantic meaning (`/// blah` is equivalent to the attribute #[doc="blah"]) and so must be represented explicitly in the AST. Furthermore:

- In a multi-line doc comment using `///` or `//!` comment markers every separate line is treated as a separate attribute;

- Multi-line doc comments using `///` and `//!` markers are very common, much more so than those using `/** */` or `/*! */` markers, particularly in the standard library where doc comment lines often outnumber code lines;

- The AST representation of attributes is quite expensive, partly because it retains the original tokens for complex reasons involving procedural macros.

As a result, doc comments had a surprisingly high cost. This PR introduced a special, cheaper representation for doc comments, giving wins on many benchmarks, some in excess of 10%.

Token streams

#65455: This PR avoided some unnecessary conversions from TokenTree type to the closely related TokenStream type, avoiding allocations and giving wins on many benchmarks of up to 5%. It included one of the most satisfying commits I’ve made to rustc.

Up to 5% wins by changing only three lines. But what intricate lines they are! There is a lot going on, and they’re probably incomprehensible to anyone not deeply familiar with the relevant types. My eyes used to just bounce off those lines. As is typical for this kind of change, the commit message is substantially longer than the commit itself.

It’s satisfying partly because it shows my knowledge of Rust has improved, particularly Into and iterators. (One thing I worked out along the way: collect is just another name for FromIterator::from_iter.) Having said that, now that a couple of months have passed, it still takes me effort to remember how those lines work.

But even more so, it’s satisfying because it wouldn’t have happened had I not repeatedly simplified the types involved over the past year. They used to look like this:

pub struct TokenStream { kind: TokenStreamKind }

pub enum TokenStreamKind {

    Empty,

    Tree(TokenTree),

    JointTree(TokenTree),

    Stream(RcVec<TokenStream>),

}

(Note the recursion!)

After multiple simplifying PRs (#56737, #57004, #57486, #58476, #65261), they now look like this:

pub struct TokenStream(pub Lrc<Vec<TreeAndJoint>>);

pub type TreeAndJoint = (TokenTree, IsJoint);

pub enum IsJoint { Joint, NonJoint }

(Note the lack of recursion!)

I didn’t set out to simplify the type for speed, I just found it ugly and confusing. But now it’s simple enough that understanding and optimizing intricate uses is much easier. When those three lines appeared in a profile, I could finally understand them. Refactoring FTW!

(A third reason for satisfaction: I found the inefficiency using the improved DHAT I worked on earlier this year. Hooray for nice tools!)
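The earlier aside that collect is just another name for FromIterator::from_iter can be checked with a tiny example. This is only an illustrative sketch: the function names are made up for this note and have nothing to do with the rustc commit itself.

```rust
/// Builds a vector of squares through the `collect` adaptor.
pub fn squares_via_collect(n: u32) -> Vec<u32> {
    (1..=n).map(|x| x * x).collect()
}

/// Builds the same vector by calling `FromIterator::from_iter` directly,
/// which is what `collect` forwards to.
pub fn squares_via_from_iter(n: u32) -> Vec<u32> {
    <Vec<u32> as std::iter::FromIterator<u32>>::from_iter((1..=n).map(|x| x * x))
}
```

Both functions return the same vector for any `n`; the only difference is which spelling of the conversion is used.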

#65641: This was a nice follow-on from the previous PR. TokenStream had custom impls of the RustcEncodable/RustcDecodable traits. These were necessary when TokenStream was more complicated, but now that it’s much simpler the derived impls suffice. As well as simplifying the code, the PR won up to 3% on some benchmarks because the custom impls created some now-unnecessary intermediate structures.

#65198: David Tolnay reported that basic concatenation of tokens, as done by many procedural macros, could be exceedingly slow, and that operating directly on strings could be 100x faster. This PR removed quadratic behaviour in two places, both of which duplicated a token vector when appending a new token. (Raise a glass to the excellent Rc::make_mut function, which I used in both cases.) The PR gave some very small (< 1%) speed-ups on the standard benchmarks but sped up a microbenchmark that exhibited the problem by over 1000x, and made it practical for procedural macros to use tokens. I also added David’s microbenchmark to rustc-perf to avoid future regressions.
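To make the quadratic-versus-linear difference concrete, here is a minimal sketch of the two ways to append to a reference-counted vector. It is not the rustc code: the `u32` "tokens" and both function names are assumptions for illustration. Only `Rc::make_mut` is the real standard library call mentioned above.

```rust
use std::rc::Rc;

/// Appends by duplicating the whole vector first: O(n) per append,
/// so building a stream of n tokens this way costs O(n^2).
pub fn push_by_copy(tokens: &Rc<Vec<u32>>, tok: u32) -> Rc<Vec<u32>> {
    let mut copy = (**tokens).clone();
    copy.push(tok);
    Rc::new(copy)
}

/// Appends in place when the Rc has a single owner; `Rc::make_mut`
/// only clones the vector if another handle still shares it.
pub fn push_in_place(tokens: &mut Rc<Vec<u32>>, tok: u32) {
    Rc::make_mut(tokens).push(tok);
}
```

With a uniquely owned `Rc`, `push_in_place` never copies the existing tokens. If a second handle exists, the first write makes one copy and the other handle keeps seeing the old contents.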

unicode_normalization

#65260: This PR added a special-case check to skip an expensive function call in a hot function, giving a 7% win on the unicode_normalization benchmark.

#65480: LexicalResolver::iterate_until_fixed_point() was a hot function that iterated over some constraints, gradually removing them until none remain. The constraints were stored in a SmallVec and retain was used to remove the elements. This PR (a) changed the function so it stored the constraints in an immutable Vec and then used a BitSet to record which constraints were still live, and (b) inlined the function at its only call site. These changes won another 7% on unicode_normalization, but only after I had sped up BitSet iteration with some micro-optimizations in #65425.
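The shape of that change can be sketched as follows. This is a simplified stand-in, not the compiler's code: constraints are plain `u32` values, the rule for when a constraint is satisfied is invented, and a `Vec<bool>` plays the role of rustc's BitSet.

```rust
/// Repeatedly processes constraints, removing satisfied ones with `retain`.
/// Every pass shifts the surviving elements down in memory.
pub fn passes_with_retain(mut constraints: Vec<u32>) -> usize {
    let mut passes = 0;
    while !constraints.is_empty() {
        passes += 1;
        // Hypothetical rule: a constraint is satisfied once `passes` reaches its value.
        constraints.retain(|&c| c > passes as u32);
    }
    passes
}

/// Same loop, but the constraint list is never modified. A parallel vector
/// of flags (standing in for a bit set) records which constraints are live.
pub fn passes_with_live_set(constraints: &[u32]) -> usize {
    let mut live = vec![true; constraints.len()];
    let mut remaining = constraints.len();
    let mut passes = 0;
    while remaining > 0 {
        passes += 1;
        for (i, &c) in constraints.iter().enumerate() {
            if live[i] && c <= passes as u32 {
                live[i] = false; // mark as dead instead of moving elements
                remaining -= 1;
            }
        }
    }
    passes
}
```

Both versions reach the fixed point after the same number of passes. The second one trades element shuffling for a flag check per constraint, which is why fast iteration over the live set matters.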

#66537: This PR moved an expensive function call after a test and early return that is almost always taken, giving a 2% win on unicode_normalization.

Miscellaneous

#66408: The ObligationForest type has a vector of obligations that it processes in a fixpoint manner. Each fixpoint iteration involves running an operation on every obligation, which can cause new obligations to be appended to the vector. Previously, newly-added obligations would not be considered until the next fixpoint iteration. This PR changed the code so it would consider those new obligations in the iteration in which they were added, reducing the number of iterations required to reach a fixpoint, and winning up to 8% on a few benchmarks.
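A toy model shows why visiting newly added entries in the same iteration reduces the iteration count. Everything here is an assumption made for illustration: the `Obligation` struct, the rule that an obligation of depth n spawns one of depth n - 1, and the `eager` switch are not taken from rustc.

```rust
/// One obligation: a depth counter plus a flag saying whether it was handled.
struct Obligation {
    depth: u32,
    done: bool,
}

/// Handles one obligation and returns a child obligation if it spawns one.
/// Hypothetical rule: depth n > 0 spawns a single obligation of depth n - 1.
fn process(ob: &mut Obligation) -> Option<Obligation> {
    ob.done = true;
    if ob.depth > 0 {
        Some(Obligation { depth: ob.depth - 1, done: false })
    } else {
        None
    }
}

/// Runs passes until one makes no progress and returns the number of passes.
/// With `eager == false`, entries appended during a pass wait for the next
/// pass; with `eager == true`, the same pass also visits them.
pub fn passes_to_fixpoint(start_depth: u32, eager: bool) -> usize {
    let mut obligations = vec![Obligation { depth: start_depth, done: false }];
    let mut passes = 0;
    loop {
        passes += 1;
        let mut progressed = false;
        let len_at_start = obligations.len();
        let mut i = 0;
        loop {
            // The eager variant re-reads the length, so it sees new entries.
            let limit = if eager { obligations.len() } else { len_at_start };
            if i >= limit {
                break;
            }
            if !obligations[i].done {
                let child = process(&mut obligations[i]);
                if let Some(c) = child {
                    obligations.push(c);
                }
                progressed = true;
            }
            i += 1;
        }
        if !progressed {
            return passes;
        }
    }
}
```

In this model a starting depth of 3 needs five passes in the deferred variant and two in the eager one, counting the final pass that detects the fixpoint.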

#66013: The Rust compiler is designed around a demand-driven query system. Query execution is memoized: when a query is first invoked the compiler will perform the computation and store the result in a hash table, and on subsequent invocations the compiler will return the result from the hash table. For the parallel configuration of rustc this hash table experiences a lot of contention, and so it is sharded; each query lookup has two parts, one to get the shard, and one within the shard. This PR changed things so that the key was only hashed once and the hash value reused for both parts of the lookup, winning up to 3% on the single-threaded configuration of the parallel compiler. (It had no benefit for the non-parallel compiler, which is what currently ships, because it does not use sharding.)
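The idea of hashing once and reusing the value for both parts of the lookup can be sketched with the standard library alone. This is not how rustc implements it: `QueryCache`, `PassThrough`, the string keys and the "query" that returns the key length are all hypothetical, and locking is left out. The pass-through hasher is one way, on stable Rust, to stop the inner map from hashing again.

```rust
use std::collections::hash_map::DefaultHasher;
use std::collections::HashMap;
use std::hash::{BuildHasherDefault, Hash, Hasher};

/// Hasher that passes an already computed u64 through unchanged.
#[derive(Default)]
pub struct PassThrough(u64);

impl Hasher for PassThrough {
    fn finish(&self) -> u64 {
        self.0
    }
    fn write(&mut self, _bytes: &[u8]) {
        unreachable!("only u64 keys are hashed in this sketch");
    }
    fn write_u64(&mut self, n: u64) {
        self.0 = n;
    }
}

/// Each shard maps a precomputed hash to the (key, value) pairs with that hash.
type Shard = HashMap<u64, Vec<(String, usize)>, BuildHasherDefault<PassThrough>>;

pub struct QueryCache {
    shards: Vec<Shard>,
    pub computations: usize,
}

impl QueryCache {
    pub fn new(shard_count: usize) -> Self {
        QueryCache {
            shards: (0..shard_count).map(|_| Shard::default()).collect(),
            computations: 0,
        }
    }

    /// Memoized toy query: returns the length of `key`, computed at most once.
    pub fn get(&mut self, key: &str) -> usize {
        // Hash the key exactly once.
        let mut hasher = DefaultHasher::new();
        key.hash(&mut hasher);
        let hash = hasher.finish();
        // Part one: the high bits of the hash pick the shard.
        let shard_index = ((hash >> 32) as usize) % self.shards.len();
        // Part two: the same hash indexes within the shard, without rehashing.
        let bucket = self.shards[shard_index].entry(hash).or_default();
        if let Some((_, value)) = bucket.iter().find(|(k, _)| k.as_str() == key) {
            return *value;
        }
        self.computations += 1;
        let value = key.len();
        bucket.push((key.to_string(), value));
        value
    }
}
```

Calling `get` twice with the same key runs the computation once. The shard is chosen from the high bits so that entries inside one shard do not all share the low bits the inner map uses for its own buckets.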

#66012: This PR removed the trivial_dropck_outlives query from the query system because the underlying computation is so simple that it is faster to redo it whenever necessary than to look up the result in the query hash table. This won up to 1% on some benchmarks, and possibly more for the parallel compiler, due to the abovementioned contention over the query hash table.

#65463: The function expand_pattern() caused many arena allocations where a 4 KiB arena chunk was allocated that only ever held a single small and short-lived allocation. This PR avoided these, reducing the number of bytes allocated by up to 2% for various benchmarks, though the effect on runtime was minimal.

#66540: This PR changed a Vec to a SmallVec in Candidate::match_pairs, reducing allocation rates for a tiny speed win.

symbols

The compiler has an interned string type called Symbol that is widely used to represent identifiers and other strings. The compiler also had two variants of that type, confusingly called InternedString and LocalInternedString. In a series of PRs (#65426, #65545, #65657, #65776) I removed InternedString and minimized the functionality and uses of LocalInternedString (and also renamed it as SymbolStr). This was made possible by Matthew Jasper’s work eliminating gensyms. The changes won up to 1% on various benchmarks, but the real benefit was code simplicity, as issue #60869 described. Like the TokenStream type I mentioned above, these types had annoyed me for some time, so it was good to get them into a nicer state.

Progress

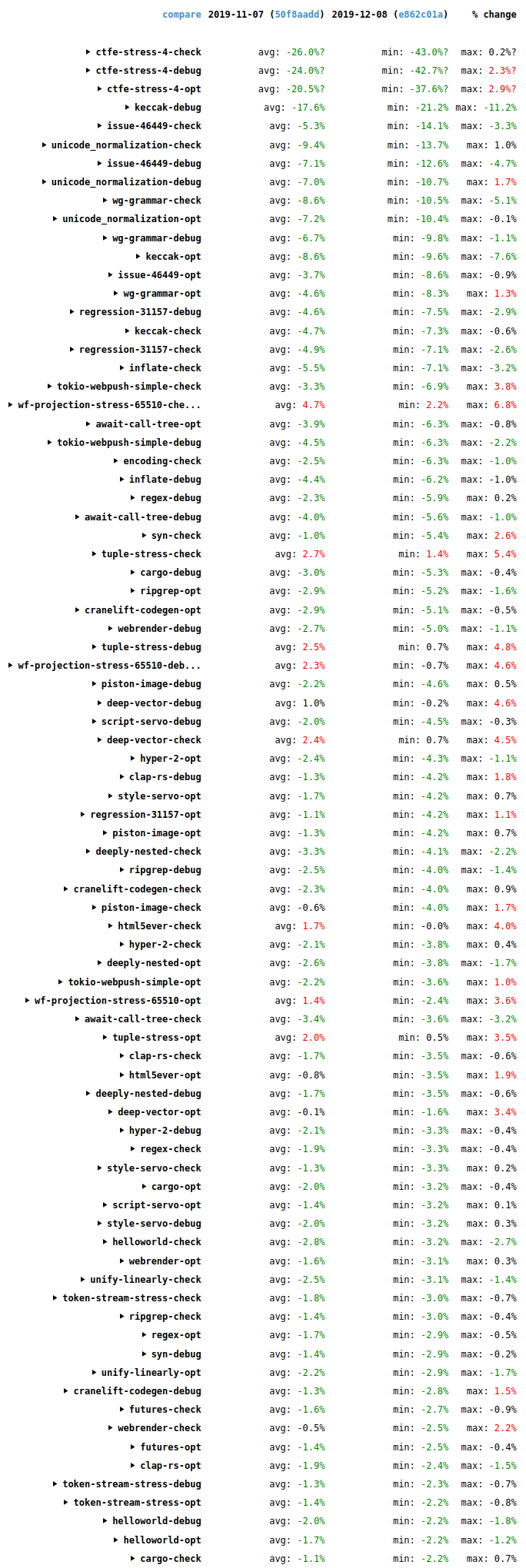

The storage of historical benchmark data changed recently, which (as far as I can tell) makes comparisons to versions earlier than early November difficult. Nonetheless, wall-time results for the past month (2019-11-07 to 2019-12-08) cover the second half of that period, and they are generally good, as the following screenshot shows.

Overall, lots of improvements (green), some in the double digits, and only a small number of regressions (red). It’s a good trend, and it’s not just due to the PRs mentioned above. For example, MIR constant propagation won 2-10% in a lot of cases. It duplicates an optimization that LLVM also does, but performs it earlier in the pipeline when there’s less code. This saves time because it makes LLVM’s job easier, and it also helps the code quality of debug builds where the LLVM constant propagation isn’t run.

Final words

All of the above improvements (and most of the ones in my previous posts) I found by profiling with Cachegrind, Callgrind, DHAT, and counts, but there are plenty of other profilers out there. I encourage anyone who has interest and even slight familiarity with profiling (or a willingness to learn) to give it a try. The Rust compiler is an easy target, profiling-wise, because it’s a batch program that is mostly single-threaded and almost deterministic. (Compare it to Firefox, for example, which is interactive, multi-threaded, and highly non-deterministic.) Give it a go!

Mozilla VR Blog: ECSY Developer tools extension

Introduction

Two months ago we released ECSY, a framework-agnostic Entity Component System library you could use to build real time applications with the engine of your choice.

Today we are happy to announce a developer tools extension for ECSY, aiming to help you better understand what is going on in your application when using ECSY.

A common requirement when building applications that require high performance, such as real time 3D graphics, AR and VR experiences, is the need to understand which part of our application is consuming the most resources. We could always use the browsers’ profilers to try to understand our bottlenecks but they can be a bit unintuitive to use, and it is hard to get an overview of what is going on in the entire application, rather than focusing on a specific piece of your code.

With the ECSY developer tools extension you can now see that overview, getting statistics in realtime on how your systems are performing. Once you know which system is causing troubles in performance you can then use the browser profiler to dig into the underlying issue.

Apart from showing the status of the application, the extension lets you control the execution (eg: play/pause, step system, step frame) for debugging or learning purposes, as well as accessing the value of the queries and components used in your application.

How do I use it?

- Install the extension from the extension store for your browser (Firefox, Chrome)

- Launch any ECSY application (For example https://ecsy.io/examples/circles-boxes/index.html). You will notice that the “ECSY” icon on the toolbar changes its color indicating that the ECSY library is detected:

- Open the “ECSY” tab in the browser’s developer tool (Firefox, Chrome) and you will see the state of the application.

Panel overview

When the ECSY extension panel is loaded, you will see all the sections available on the UI. (A big shout out to the flecs team as their work on their admin panel was a big inspiration for us when designing the UI)

- (1) Main toolbar: You can control options that affect all the main panels. The buttons in order are:

- Entities (E), Components (C), Queries (Q) and Systems (S): the buttons will toggle the visibility for each of the main panels.

- Show relationship between components: When enabled it will highlight the relationships between Components, Queries and Systems. Eg: If you hover over the `Rotating` component, it will highlight the `Rotating` query as it is using that component, as well as the `RotatingSystem`.

- Show debug: Show the JSON with all the stats and info collected from the ECSY application used by the extension.

- Show remote console (If debugging a remote device): It lets you enter commands to be executed on the remote device.

- Show Graphs: Toggle the graphs on all the sections.

- Show Stats: Toggle detailed stats on all the sections. This includes the following computed values for each entry:

- Minimum

- Maximum

- Mean

- Standard deviation

- (2) Version info: Shows the version info as well as the connection details if connected to a remote device.

- (3) Entities: The number of entities created.

- (4) Components: Shows the number of component classes and their instances in the application.

- This section also includes a toolbar similar to the main one:

- Show graphs and stats: For all the components

- Link minimum and maximum values: It will synchronize all the graphs with the global maximum and minimum values.

- Show pool size: It will include on each component’s graph another time series with the size of the pool for that component in red color.

- Show detailed stats: minimum, maximum, average and standard deviation.

- Then, for each component, you can see:

- Tag components: They will include a special icon to easily spot them on the list.

- Pool detection:

- If the component is not using a pool, it will show a warning icon indicating that it is recommended to define them as poolable for performance reasons.

- Every time the application is creating a new component, you will see a flash message indicating if it has no pool, or the pool has no empty elements on it.

- Dump to console: Each component has an arrow icon that will dump the list of instances of that component to the console. That list will also be assigned to a variable called `$component` so you can use it on the console.

- (5) Queries: Similar to the components section, here you also have a toolbar to toggle the graph and stats visibility or toggle the graphs’ minimum and maximum values synchronization.

For each query you can see the components that take part in its definition and the number of entities the query has.

- (6) Systems: In this section you can explore all the systems being executed in your application loop and the timing for all of them.

Each system shows the number of milliseconds it took to execute in the last frame, and the percentage of that time related to the whole frame time. For each query on the system you will also get extra stats if they are “reactive”, indicating the size of the queues for each event (added, removed or changed).

We can control the execution for debugging or learning purposes:

- World: Applied to all systems:

- Play/Stop: Play or stop all the systems

- Step one frame: Execute all the systems one time

- Step next system: Execute the next system in order. Just one system at a time.

- System

- Play/Stop: Play or stop the specific system

- Step: Execute the system one time.

- Solo: Pauses all the other systems but this one. Clicking on it again will undo the action.

Remote connection

When debugging applications sometimes we want to test our code on an external device like a mobile phone or a standalone VR headset. The problem is that custom WebExtensions are not available when connecting to remote devices from the browser’s developer tools.

To address this issue we implemented a custom remote protocol using WebSockets and WebRTC, so you can connect to your device even if it is not connected via USB to your laptop, or using the same browser as you are using for debugging (eg: you could use Chrome to connect to a Firefox Reality browser on a VR device or Firefox to connect to an Oculus Browser session).

How to enable the developer tools remote connection in an ECSY application?

Today we also release a new version of ECSY (v.0.2) that includes support for using the developer tools with remote devices.

Remote connection can be enabled using two methods:

- Explicitly calling `enableRemoteDevtools()` in your application:

import { enableRemoteDevtools } from "ecsy";

enableRemoteDevtools(/* optional code */);

Please note that you can pass an optional parameter as the code that the application will be listening for. Otherwise, a new one will be generated.

- Adding `?enable-remote-devtools` to the URL when running your application on the browser you want to connect to.

Using either of the previous methods will add an overlay on your application showing a random code that will be used to connect remotely.

How to connect a remote device?

Once we get the code from the previous section, we can connect to that device from our preferred browser by clicking the ECSY icon on the extensions toolbar, entering the code and clicking connect:

Or doing the same from the ECSY panel in the developer tools.

Features on remote devices

When using the developer tools on a remote device there are some differences from running it locally.

Console.log and errors hooks

We hook every call to console.* as well as any error thrown by the application running on the remote device, and send them back to the host developer tools browser, where they are logged on the console.

Dump to console

When connected to a remote device you still have the dump to console functionality to inspect components and queries, but it works slightly differently: The remote device will serialize the data and send it to the host developer tools that will deserialize it again and call console.log with that.

Note that currently the deserialization process is quite simple, so we are just preserving the raw data, but we lose all functions and data type definitions.

Remote console

We also included the ability to execute code on the remote device. Clicking on the remote console icon, the following panel will appear:

Everything you enter on the textbox will get executed on the remote device and it will write the result of the evaluation on the list above.

What’s next?

Currently, the extension is still experimental and we still have to fix issues in the current implementation (eg: right now there is a lot of re-rendering going on in the React UI, making it slow on applications with many components and systems), and there are many other cool features we would like to include in upcoming versions.

We would love your feedback! Please join us on Discourse or Github to discuss what you think and share your needs.

Mozilla Privacy Blog: India’s new data protection bill: Strong on companies, step backward on government surveillance

Yesterday, the Government of India shared a near final draft of its data protection law with Members of Parliament, after more than a decade of engagement from industry and civil society. This is a significant milestone for a country with the second largest population on the internet and where privacy was declared a fundamental right by its Supreme Court back in 2017.

Like the previous version of the bill from July 2018 developed by the Justice Srikrishna Committee, this bill offers strong protections in regards to data processing by companies. Critically, this latest bill is a dramatic step backward in terms of the exceptions it grants for government processing and surveillance.

The original draft, which we called groundbreaking in many respects, contained some concerning issues: glaring exceptions for the government use of data, data localisation, an insufficiently independent data protection authority, and the absence of a right to deletion and objection to processing. While this new bill makes progress on some issues like data localisation, it also introduces new threats to privacy such as user verification for social media companies and forced transfers of non-personal data.

As the bill is introduced and reviewed in Parliament, attention and action are needed on several provisions. Here are some highlights:

- Exceptions for Law Enforcement and other government use: The biggest concern in the new draft is the bill’s expansion of the broad exceptions that were present in the 2018 draft of the data protection bill for the government processing of data. Crucially, the requirement that government processing of data be “necessary and proportionate” has been cut. Furthermore, a provision was added granting the government complete discretion to exempt any entity or department from any part of the law. This leaves the current legal vacuum around India’s surveillance and intelligence services intact, which is fundamentally incompatible with effective privacy protection.

- Independence of the Data Protection Authority: The new law further reduces the powers and independence of the data protection authority (DPA) by significantly weakening the commission that will appoint the Chairperson and members of the DPA. Where the 2018 draft said that they were to be appointed by a diverse committee with executive, judicial, and external expertise, the new law limits this committee to members of the executive. As with the last bill, Adjudicating Officers are also appointed by the government. Together, this will make it much harder for the DPA to be empowered and effective as the entire governing structure will be appointed exclusively by the government.

- Social Media User Verification: In a move that will be disastrous for the privacy and anonymity of internet users, the law contains a provision requiring companies to provide the option for users to voluntarily verify their identities. This would likely entail users sending photos of government issued IDs to the companies. There are also reports that intermediaries will have to report accounts that do not verify themselves using such procedures to the government, which could make them a target for government scrutiny and investigation. This provision will incentivise the collection of sensitive personal data from government IDs that are submitted for this verification, which can then be used to profile and target users. This is not hypothetical conjecture – we have already seen phone numbers collected for security purposes being used for profiling. This provision will also increase the risk from data breaches and entrench power in the hands of large players in the social media space who can afford to build and maintain such verification systems. There is no evidence to prove that this measure will help fight misinformation (its motivating factor), and it ignores the benefits that anonymity can bring to the internet, such as whistleblowing and protection from stalkers.

- Forced Transfer of Non-Personal Data: The law also mandates that certain companies can be forced to transfer non-personal data to the government for public good and policy planning purposes. Not only can non-personal data constitute protected trade secrets and the insights derived from such data be protected by intellectual property law, but turning over this information to the government also raises significant privacy concerns. Information about sales location data from e-commerce platforms, for example, can be used to draw dangerous inferences and patterns regarding caste, religion, and sexuality. The law should continue to focus on the protection of personal data and leave the regulation of non-personal data to an independent law.

- Ambiguity in Implementation: The 2018 draft clearly laid out the timelines for the creation of the data protection authority, the accompanying subsidiary legislation, and the date in which the law would finally be enforceable. The new law removes all references to this timeline and merely mentions that the Central Government may notify the enforcement of the law at its complete discretion, creating ambiguity and uncertainty in the ecosystem.

On a positive note:

- Data Localisation and Cross Border Transfers: In a positive move compared to the 2018 draft, the law relaxes data localisation restrictions and applies them to only sensitive and critical personal data (i.e., personal data can be transferred without restriction). For sensitive data, the data can be processed outside the country and there are also reciprocity based exceptions that allow even critical and sensitive data to be processed outside the country. However, sensitive data must be stored in India, and it continues to be hard to see this as anything other than an effort to make surveillance easier.

- Right to Erasure: In a positive move, the new law includes an explicit right to erasure along with the right to correction, which gives data principals the right to demand that fiduciaries delete data which is no longer necessary for the purpose for which it was originally processed.

- Strong obligations on companies and rights for individuals: Overall, the bill retains the strong protections in regards to processing by companies that existed in the 2018 draft. In particular, there are strong provisions on consent, authorized basis for processing, purpose limitation, collection limitation, notice, data retention, data quality, data security safeguards, right to access, right to correction, data portability, and enhanced obligations for significant data fiduciaries.

Overall, while there are several strong provisions, significant concerns remain with the law and the Parliament will be critical in ensuring that Indians receive the data protection law they deserve. Mozilla will continue to engage with the Parliament, the Government of India, and other stakeholders over the coming months to help make this happen.

The post India’s new data protection bill: Strong on companies, step backward on government surveillance appeared first on Open Policy & Advocacy.

|

|

Hacks.Mozilla.Org: Debugging Variables With Watchpoints in Firefox 72 |

The Firefox Devtools team, along with our community of code contributors, have been working hard to pack Firefox 72 full of improvements. This post introduces the watchpoints feature that’s available right now in Firefox Developer Edition! Keep reading to get up to speed on watchpoints and how to use them.

What are watchpoints and why are they useful?

Have you ever wanted to know where properties on objects are read or set in your code, without having to manually add breakpoints or log statements? Watchpoints are a type of breakpoint that provides an answer to that question.

If you add a watchpoint to a property on an object, every time the property is used, the debugger will pause at that location. There are two types of watchpoints: get and set. The get watchpoint pauses whenever a property is read, and the set watchpoint pauses whenever a property value changes.

The watchpoint feature is particularly useful when you are debugging large, complex codebases. In this type of environment, it may not be straightforward to predict where a property is being set/read.

Watchpoints are also available in Firefox’s Visual Studio Code Extension where they’re referred to as “data breakpoints.” You can download the Debugger for Firefox extension from the VSCode Marketplace. Then, read more about how to use VSCode’s data breakpoints in VSCode’s debugging documentation.

Getting Started

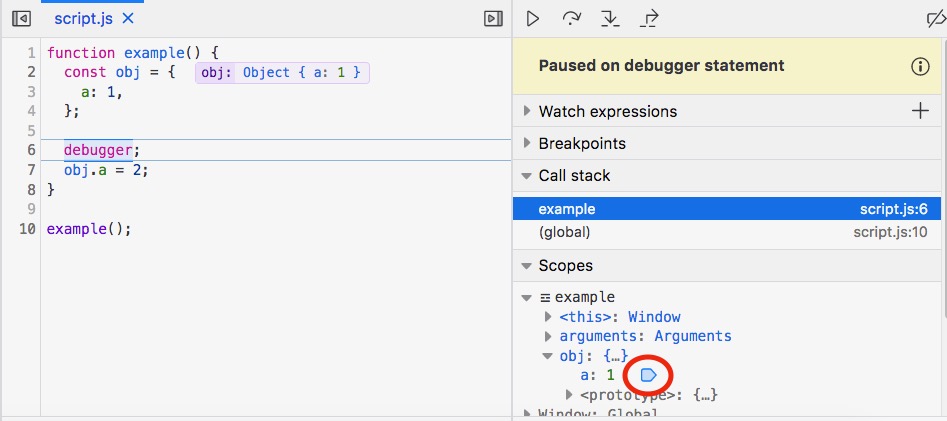

To set a watchpoint, pause the debugger, find a property in the Debugger’s ‘Scopes’ pane, and right-click it to view the menu. Once the menu is displayed, you can choose to add a set or get watchpoint. Here we want to debug obj.a, so we will add a set watchpoint on it.

Voila, the set watchpoint has been added, indicated by the blue watchpoint icon to the right of the property. Here comes the easy part in your code — where you let the debugger inform you when properties are set. Just hit resume (or F8), and we’re off.

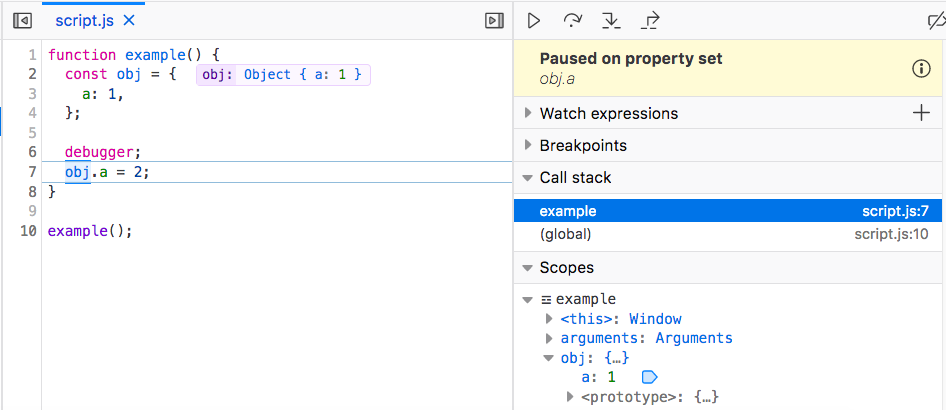

The debugger has paused on line 7 where obj.a is set. Also notice the yellow pause message panel in the upper right corner which tells us that we are breaking because of a set watchpoint.

Deleting a watchpoint is like deleting a regular breakpoint—just click the blue watchpoint icon.

And that’s it! This feature is simple to use, but it’s powerful to have in your debugging toolbox.

Implementation

When you add a watchpoint to a property, getter and setter functions are defined for the property using JavaScript’s native Object.defineProperty method. These getter/setter functions run every time your property is used, and they call a function that pauses the debugger. You can check out the server code for this feature.

When we built the implementation of watchpoints, we faced an interesting challenge. The team needed to be sure that our use of Object.defineProperty would be transparent to the user. For this reason, we had to make sure that original values rather than getter/setter functions appeared in the debugger.
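
The interception described above is specific to JavaScript's Object.defineProperty. As a rough analogy only, and not the DevTools server code, the same idea can be sketched in Python, where a property on a one-off subclass plays the role of the defined getter and setter. The helper name add_set_watchpoint and the on_set callback are hypothetical; the callback stands in for the function that pauses the debugger.

```python
def add_set_watchpoint(obj, name, on_set):
    """Intercept writes to obj.<name> and report them to on_set (hypothetical helper)."""
    # Keep the real value outside the object, so reads still return the
    # original value rather than the interception machinery.
    storage = {"value": getattr(obj, name)}

    def getter(self):
        return storage["value"]

    def setter(self, new_value):
        # Stand-in for "pause the debugger": report the write, then apply it.
        on_set(name, storage["value"], new_value)
        storage["value"] = new_value

    # Build a one-off subclass carrying the property and move only this
    # object onto it, so other instances of the class are unaffected.
    watched_class = type(
        "Watched" + type(obj).__name__,
        (type(obj),),
        {name: property(getter, setter)},
    )
    obj.__dict__.pop(name, None)
    obj.__class__ = watched_class


class Obj:
    def __init__(self):
        self.a = 1


events = []
obj = Obj()
add_set_watchpoint(obj, "a", lambda prop, old, new: events.append((prop, old, new)))
obj.a = 2  # triggers the set watchpoint
```

Reading obj.a afterwards still yields the plain value, which mirrors the transparency requirement: the watcher is there, but what the user sees is the original data.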

Some things to keep in mind:

- Watchpoints do not work for getters and setters.

- When a variable is garbage-collected, it takes the watchpoint with it.

What’s Next

We plan to support adding and viewing watchpoints from the console and in the many other places where DevTools lets you inspect objects. Also, we want to continue polishing this feature, and that’s where we’d love to have your help!

Give watchpoints a spin in Firefox Developer Edition 72, and please send us feedback in one of these channels:

- File bug reports in Bugzilla.

- Join us on the Firefox Devtools Slack to share your input.

- Discuss your ideas in Mozilla’s Developer Tools Discourse.

- Tweet to us at @FirefoxDevTools

The post Debugging Variables With Watchpoints in Firefox 72 appeared first on Mozilla Hacks - the Web developer blog.

https://hacks.mozilla.org/2019/12/debugging-variables-with-watchpoints-in-firefox-72/

|

|

Marco Zehe: mailbox.org is giving new customers €6 until Jan 10, 2020 |

My personal favorite e-mail provider mailbox.org is giving away Christmas vouchers until January 10, 2020, for each new customer registration. Details if you read on.

Mailbox.org is an e-mail and calendaring/office suite provider based in Germany. They are very privacy focused, run on renewable energy exclusively, and are committed to using Open Source wherever possible. They are promoters of open standards such as IMAP and SMTP, CalDav, CardDav and WebDav for their offerings. They use Open-Xchange as their software of choice. Their service offers e-mail, calendar, contacts, tasks, file storage, word processing, spreadsheet and presentation software as well as an integrated PGP module for secure encrypted e-mails.

This year, from now until January 10, 2020, each new customer can get a voucher of 6 Euros upon registration and using the voucher code “christmas2019”. All details and conditions in the linked blog post.

The Open-Xchange web front-end is very accessible in many parts, and more stuff is added frequently with each release. I use it for my personal e-mail, and am really liking it. You can also use any compatible IMAP/SMTP mail client, the open standards integrate extremely well with iOS and MacOS.

Disclaimer: I am not getting any money from this promotion. I pass this on because I like their service a lot and can only recommend it to others. And this seems like a pretty good incentive for trying it for a bit. Enjoy!

https://marcozehe.de/2019/12/10/mailbox-org-is-giving-new-customers-e6-until-jan-10-2020/

|

|

Daniel Stenberg: Mr Robot curls |

The Mr Robot TV series features a security expert and hacker lead character, Elliot.

Season 4, episode 8

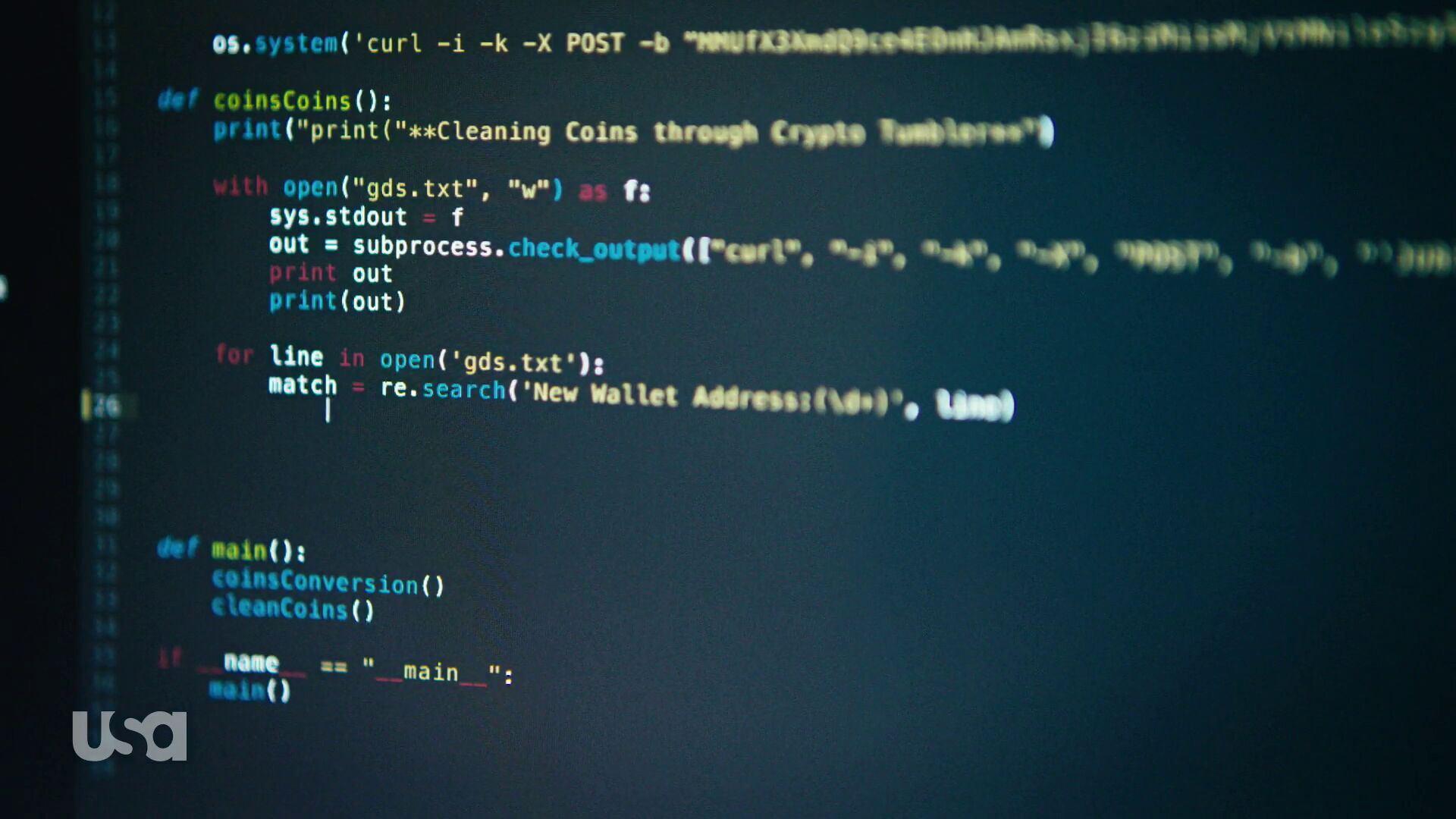

Vasilis Lourdas reported a “curl sighting” in the show, so I took a closer peek. And what do we see some 37 minutes 36 seconds into season 4, episode 8…

(I haven’t followed the show since at some point in season two so I cannot speak for what actually has happened in the plot up to this point. I’m only looking at and talking about what’s on the screenshots here.)

Elliot writes Python. In this Python program, we can see two curl invocations, both unfortunately a bit blurry on the right side so it’s hard to see them exactly (the blur is really there in the source and I couldn’t see/catch a single frame without it). Fortunately, I think we get some additional clues later on in episode 10, see below.

He invokes curl with -i to see the response header coming back but then he makes some questionable choices. The -k option is the short version of --insecure. It truly makes an HTTPS connection insecure since it completely switches off the CA cert verification. We all know no serious hacker would do that in real-world use.

Perhaps the biggest problem for me is however the following -X POST. In itself it doesn’t have to be bad, but when taking the second shot from episode 10 into account we see that he really does combine this with the use of -d and thus the -X is totally superfluous or perhaps even wrong. The show technician who wrote this copied a bad example…

The -b that follows is fun. That sets a specific cookie to be sent in the outgoing HTTP request. The random look of this cookie makes it smell like a session cookie of some sort, which of course you’d rarely hard-code like this in a script and expect to be of use at a later point. (Details unfold later.)

Season 4, episode 10

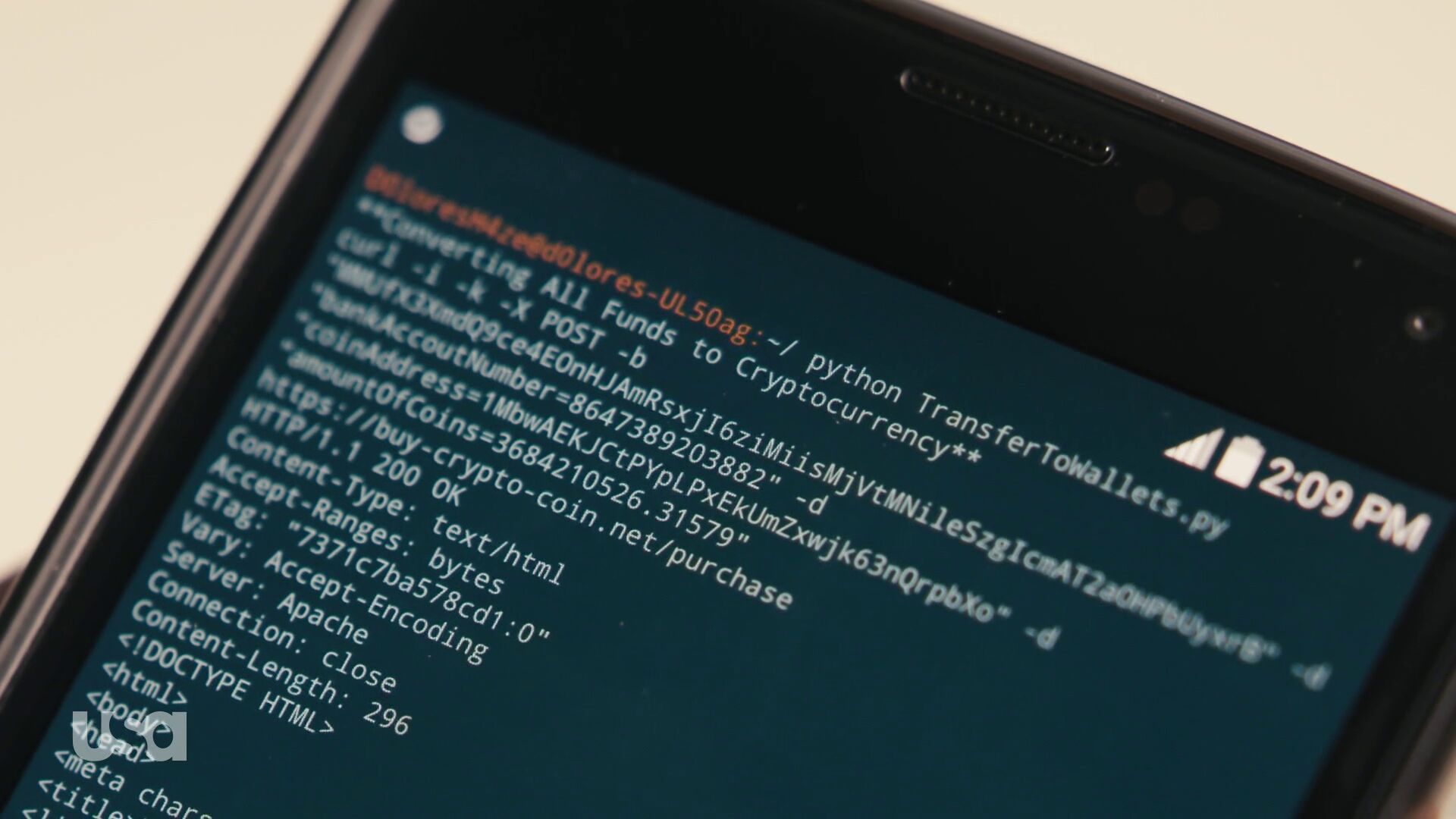

Lucas Pardue followed up with this second sighting of curl from episode 10, at about 23:24. It appears that this might be the Python program from episode 8 that is now made to run on or at least with a mobile phone. I would guess this is a session logged in somewhere else.

In this shot we can see the command line again from episode 8.

We learn something more here. The -b option didn’t specify a cookie – because there’s no = anywhere in the argument following. It actually specified a file name. Not sure that makes anything more sensible, because it seems weird to purposely use such a long and random-looking filename to store cookies in…
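
The rule at work here is simple enough to sketch in a few lines of Python: curl treats the -b argument as cookie data when it contains an equals sign, and as the name of a file to read cookies from otherwise. The function name and the sample arguments below are made up for illustration; the actual string on the screen is not reproduced.

```python
def classify_cookie_argument(argument):
    """Mimic how curl's -b option interprets its argument (simplified sketch)."""
    if "=" in argument:
        # Looks like "name=value": sent as cookie data in the request.
        return "cookie string"
    # No "=" anywhere: treated as a file to read stored cookies from.
    return "file name"


# Hypothetical arguments, not the ones from the show.
as_cookie = classify_cookie_argument("session=8f3a9c2e71")
as_file = classify_cookie_argument("8f3a9c2e71b04d5a")
```

So a long random-looking argument without an equals sign ends up being a file name, however unlikely a name it seems.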

Here we also see that in this POST request it passes on a bank account number, a “coin address” and amountOfCoins=3684210526.31579 to this URL: https://buy-crypto-coin.net/purchase, and it gets 200 OK back from an HTTP/1.1 server.

I tried it

curl -i -k -X POST -d bankAccountNumber=8647389203882 -d coinAddress=1MbwAEKJCtPYpLPxEkUmZxwjk63nQrpbXo -d amountOfCoins=3684210526.31579 https://buy-crypto-coin.net/purchase
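
To see why -X POST adds nothing to a command like this: with -d, curl already sends a POST, and it joins the values of several -d options with an ampersand into one form-encoded request body. A rough Python sketch of what that body looks like, using the field values from the command above (the variable names are only for illustration):

```python
# One entry per -d option, in command-line order.
fields = [
    "bankAccountNumber=8647389203882",
    "coinAddress=1MbwAEKJCtPYpLPxEkUmZxwjk63nQrpbXo",
    "amountOfCoins=3684210526.31579",
]

# Multiple -d values are concatenated with "&" into a single body.
body = "&".join(fields)

# What -d implies without any help from -X.
method = "POST"
content_type = "application/x-www-form-urlencoded"
```

Dropping -X POST from the command line would therefore leave the request unchanged.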

I don’t have the cookie file so it can’t be repeated completely. What did I learn?

First: OpenSSL 1.1.1 doesn’t even want to establish a TLS connection against this host and says dh key too small. So in order to continue this game I took to a curl built with a TLS library that didn’t complain about this silly server.

Next: I learned that the server responding on this address (because there truly is an HTTPS server there) doesn’t have this host name in its certificate so -k is truly required to make curl speak to this host!

Then finally it didn’t actually do anything fun that I could notice. How boring. It just responded with a 301 and Location: http://www.buy-crypto-coin.net. Notice how it redirects away from HTTPS.

What’s on that site? A rather good-looking fake cryptocurrency market site. The links at the bottom all go to various NBC Universal and USA Network URLs, which I presume are the companies behind the TV series. I saved a screenshot below just in case it changes.

|

|

Mozilla Privacy Blog: Trusted Recursive Resolvers – Protecting Your Privacy with Policy and Technology |

In keeping with a longstanding commitment to privacy and online security, this year Mozilla has launched products and features that ensure privacy is respected and is the default. We recognize that technology alone isn’t enough to protect your privacy. To build a product that truly protects people, you need strong data policies.

An example of our work here is the U.S. deployment of DNS over HTTPS (DoH), a new protocol to keep people’s browsing activity safe from being intercepted or tampered with, and our Trusted Recursive Resolver program (TRR). Connecting the right technology with strict operational requirements will make it harder for malicious actors to spy on or tamper with users’ browsing activity, and will protect users from DNS providers, including internet service providers (ISPs), that can abuse their data.

DoH’s ability to encrypt DNS data addresses only half the problem we are trying to solve. The second half is requiring that companies with the ability to see and store your browsing history change their data handling practices. This is what the TRR program is for. With these two initiatives, we’re helping close data leaks that have been part of the Internet since the DNS was created 35 years ago.

Our TRR program aims to standardize requirements in three areas: limiting data collection and retention, ensuring transparency for any data retention that does occur, and limiting blocking or content modification. For any company Mozilla partners with, our expectation is that they respect modern standards for privacy and security for our users. Specifically:

- Limiting data. Your DNS data can reveal a lot of sensitive information about you, and currently DNS providers aren’t subject to any limits on what they can do with that data; we want to change that. Our policy requires that your data will only be used for the purpose of operating the service, must not be retained for longer than 24 hours, and cannot be sold, shared, or licensed to other parties.

- Transparency. It isn’t enough that our partners tell Mozilla privately about their data retention and use policies. What is more important is that they attest to those good policies publicly and that they make a commitment users can see and understand about how their data is handled. That is why our policy requires resolvers to publish a public privacy notice that documents what data is retained and how it is used.

- Blocking & Modification. DNS can be used to control what information you are allowed to see. DNS providers can potentially censor your browsing activity, give you the wrong results, or surface their own content. We think you should be the one deciding what information you need, not your DNS provider. Our requirements prevent resolvers from blocking, filtering, modifying, or providing inaccurate responses, unless they are strictly required by law to do so. Alternatively, we support the operation of filtering when a user explicitly opts in, as with parental controls.

These policy requirements are a critical part of our strategy to put people back in control of their data and privacy online. And we look forward to bringing more partners into our TRR program who are willing to put people over profit. Our hope is that the rest of the industry follows suit and helps us bring DNS into the 21st century with the privacy and security protections that people deserve.

The post Trusted Recursive Resolvers – Protecting Your Privacy with Policy and Technology appeared first on Open Policy & Advocacy.

|

|

Mozilla Privacy Blog: Mozilla comments on CCPA regulations |

Around the globe, Mozilla has been a supporter of data privacy laws that empower people – including the California Consumer Privacy Act (CCPA). For the last few weeks, we’ve been considering the draft regulations, released in October, from Attorney General Becerra. Today, we submitted comments to help California effectively and meaningfully implement CCPA.

We all know that people deserve more control over their online data. And we take care to provide people protection and control by baking privacy and the same principles we want to see in legislation into the Firefox browser.

In our comments, we discuss three important provisions:

- The definition of a third party: Usually, third party interactions are defined by the context of the data collection – not whether or not the party has a direct relationship with the user. More and more, we see companies collect data from a number of contexts: first parties, as a third party on a different site, or simply buying data directly from a data broker or reseller. These definitions should be clear that data is not regulated based solely on how the entity is categorized – but rather about the context in which the data was obtained.

- The potential for fraud with data requests coming through authorized agents: We’re encouraged by authentication requirements, but concerned that a set of unauthorized agents may blanket companies with fraudulent requests. The opportunity for fraud and abuse is high, particularly if the business responding to such a request does not have a meaningful opportunity to pursue their own authentication other than asking the authorized agent for proof of such authorization. Additional guidance on companies’ obligations to respond to third party agents would be helpful as companies try to balance security with responsiveness to access requests.

- Metrics reporting: The regulations outline a series of public-facing metrics companies must release about access requests. The specific reporting breakdowns required do not significantly increase the understanding of how CCPA rights are being exercised and complied with. Companies like Mozilla that extend the same personal data rights to any person and cannot determine that individual’s location will have difficulty complying with the metrics reporting as outlined in the draft regulations. We do not want to ask users who send us data access requests for additional personal information in order to comply with a metrics standard.

We look forward to continuing to work with the California Attorney General’s office to help protect the data of Californians – and we will keep working across jurisdictions to enact privacy and data protection laws across the globe.

While we will all have to see how implementation and enforcement roll out, we continue to be very encouraged to see California acting where the U.S. Congress has not (although we were also happy to see several frameworks released in advance of this week’s hearing). There are many shared elements between these laws, regulations, and drafts and the privacy blueprint we released earlier this year.

The post Mozilla comments on CCPA regulations appeared first on Open Policy & Advocacy.

https://blog.mozilla.org/netpolicy/2019/12/09/mozilla-comments-on-ccpa-regulations/

|

|

Mozilla Addons Blog: Secure your addons.mozilla.org account with two-factor authentication |

Accounts on addons.mozilla.org (AMO) are integrated with Firefox Accounts, which lets you manage multiple Mozilla services from one login. To prevent unauthorized people from accessing your account, even if they obtain your password, we strongly recommend that you enable two-factor authentication (2FA). 2FA adds an extra layer of security to your account by adding an additional step to the login process to prove you are who you say you are.

When logging in with 2FA enabled, you will be asked to provide a verification code from an authentication application, in addition to your user name and password. This article on support.mozilla.org includes a list of supported authenticator applications.

Starting in early 2020, extension developers will be required to have 2FA enabled on AMO. This is intended to help prevent malicious actors from taking control of legitimate add-ons and their users. 2FA will not be required for submissions that use AMO’s upload API.

Before this requirement goes into effect, we’ll be working closely with the Firefox Accounts team to make sure the 2FA setup and login experience on AMO is as smooth as possible. Once this requirement goes into effect, developers will be prompted to enable 2FA when making changes to their add-ons.

You can enable 2FA for your account before the requirement goes into effect by following these instructions from support.mozilla.org.

Once you’ve finished the set-up process, be sure to download or print your recovery codes and keep them in a safe place. If you ever lose access to your 2FA devices and get locked out of your account, you will need to provide one of your recovery codes to regain access. Misplacing these codes can lead to permanent loss of access to your account and your extensions on AMO.

The post Secure your addons.mozilla.org account with two-factor authentication appeared first on Mozilla Add-ons Blog.

|

|

Daniel Stenberg: This is your wake up curl |

curl_multi_wakeup() is a new friend in the libcurl API family. It will show up for the first time in the upcoming 7.68.0 release, scheduled to happen on January 8th 2020.

Sleeping is important

One of the core functionalities in libcurl is the ability to do multiple parallel transfers in the same thread. You then create and add a number of transfers to a multi handle. Anyway, I won’t explain the entire API here but the gist of where I’m going with this is that you’ll most likely sooner or later end up calling the curl_multi_poll() function which asks libcurl to wait for activity on any of the involved transfers – or sleep and don’t return for the next N milliseconds.

Calling this waiting function (or using the older curl_multi_wait() or even doing a select() or poll() call “manually”) is crucial for a well-behaving program. It is important to let the code go to sleep like this when there’s nothing to do and have the system wake it up again when it needs to do work. Failing to do this correctly risks having libcurl instead busy-loop somewhere, and that can make your application use 100% CPU during periods. That’s terribly unnecessary and bad for multiple reasons.

Wakey wakey

When your application calls libcurl to say “sleep for a second or until something happens on these N transfers” and something happens and the application for example needs to shut down immediately, users have been asking for a way to do a wake up call.

– Hey libcurl, wake up and return early from the poll function!

You could achieve this previously as well, but then it required you to write quite a lot of extra code, plus it would have to be done carefully if you wanted it to work cross-platform etc. Now, libcurl will provide this utility function for you out of the box!
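
For a feel of what that extra code involves, here is a minimal Python sketch of the classic approach: the sleeping thread also waits on one end of a socket pair, and another thread writes a byte to the other end to wake it. This illustrates the general technique only; it is not libcurl's implementation, and names such as sleep_until_woken are made up for the example.

```python
import select
import socket
import threading
import time


def sleep_until_woken(wake_reader, timeout_s):
    """Sleep until the wakeup socket becomes readable or the timeout expires."""
    readable, _, _ = select.select([wake_reader], [], [], timeout_s)
    if readable:
        wake_reader.recv(64)  # drain the wakeup byte so the next call sleeps again
        return True
    return False


# A connected pair: one end is polled by the sleeper, the other is
# written to by whoever wants to wake it up.
wake_reader, wake_writer = socket.socketpair()

# Simulate "another thread decides we must stop waiting" after 50 ms.
waker = threading.Timer(0.05, wake_writer.send, args=(b"x",))

start = time.monotonic()
waker.start()
woken = sleep_until_woken(wake_reader, timeout_s=5.0)  # would otherwise sleep 5 s
elapsed = time.monotonic() - start

waker.join()
wake_reader.close()
wake_writer.close()
```

In a real application the wakeup socket would sit alongside all the transfer sockets in the same wait call, which is where the careful cross-platform work comes in.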

curl_multi_wakeup()

This function explicitly makes a curl_multi_poll() function return immediately. It is designed to be possible to use from a different thread. You will love it!

curl_multi_poll()

This is the only call that can be woken up like this. Old timers may recognize that this is also a fairly new function call. We introduced it in 7.66.0 back in September 2019. This function is very similar to the older curl_multi_wait() function but has some minor behavior differences that also allow us to introduce this new wakeup ability.

Credits

This function was brought to us by the awesome Gergely Nagy.

Top image by Wokandapix from Pixabay

https://daniel.haxx.se/blog/2019/12/09/this-is-your-wake-up-curl/

|

|

Marco Zehe: How to use the extended clipboard in Windows 10 |

Since the October 2018 update (version 1809), Windows 10 has had an extended clipboard feature. Here’s how you turn it on and use it.

Turn it on

With this feature, the last 25 text bits you copied or cut to the clipboard will be available to paste. You can either just use the clipboard history for one computer, or synchronize across multiple devices logged into the same Microsoft account. But you have to turn it on first. On each computer that should participate, do the following:

- Open Start Menu and type Settings. Press Enter to open.

- In the search box, type clipboard, then choose Clipboard Settings and press Enter.

- Tab to the first option that says to turn on Clipboard History. It might be turned on for you, in which case you don’t have to do anything. Otherwise, toggle it on using the Space bar.

- Optionally, tab forward once and turn on the toggle to use the clipboard from all devices connected to the same Microsoft account.

- Once you’ve done that, you can then choose the synchronization option for the clipboard from this device. You can choose for each device whether it should just use the common clipboard history, or also actively sync its own content with the others. So, you can have two that sync, and one that doesn’t, but can still use the contents of the other two.

Using it

To paste any of the last 25 text snippets, instead of pressing CTRL+V as usual, press WindowsKey+V instead. This will open a list, sorted from newest to oldest, of the last 25 copied or cut text snippets. Focus is on the newest. Use arrow keys right and left to choose. Press Enter to paste.

A note about Control+V behavior: After you turn on the feature, it acts normally, meaning it will paste the last text you copied as usual. The one exception is if you pasted a different snippet from the above steps. From that moment on, until you either choose a different item or copy or cut some new text, CTRL+V will paste the last item you chose from that list of 25 snippets.

A warning to users of the NVDA screen reader: There seems to be a bug in the way NVDA interacts with this feature, and it is in all versions I’ve tested up to the upcoming spring 2020 release (2004) and NVDA 2019.3 beta 1. Sometimes after pasting, the control key appears to be stuck software-wise. It is as if it is being held down continuously, meaning arrow keys will suddenly move by word or paragraph instead of by character or line. It is intermittent. If it happens, just press the Control key once to rectify the situation.

Pinning or deleting items

In the Clipboard History window, you can pin items that you paste frequently. You can also delete a single or all items from the history.

To do this, open the Clipboard History window with WindowsKey+V, and select an item. Next, press Tab once to focus the More button. Press Space to open the popup menu. Here, you have the Pin, Delete and Delete All items available.

Note that there is another bug in NVDA where it does not interact with both the button and the menu properly at the time of this writing. I have informed the NVDA team about both issues.

Closing remark

This is, in my opinion, by far one of the best features Microsoft introduced to Windows in recent years. It has made me so much more productive and made so many tasks easier that I cannot even begin to count them. And whenever I am at a device that doesn’t have this feature, I ask myself how I could ever live without it.

I hope you’ll find it just as useful. Have fun playing with it!

https://marcozehe.de/2019/12/08/how-to-use-the-extended-clipboard-in-windows-10/

|

|

Marco Zehe: The myth of getting rich through ads |

Some of you may have noticed that since May, my blog has been displaying ads here and there. It was kind of an experiment, and here are my conclusions after six months.

When I got sick in March, I came down so hard that it wasn’t clear for a long time whether I would recover enough to be able to remain fully employed. More on that at another time maybe. However, this led to my looking at my then current blog situation. I ran three blogs: this one, a German technically focused one, and one where I blogged about some private stuff. All of them ran WordPress and a bunch of custom plugins, because at some point I had opted out of the JetPack services and wanted to replace the functionality with extra plugins. This resulted in the constant stress of having to maintain WordPress itself, especially when major version upgrades came out, as well as plugins and themes. The worst was when one plugin got updated and started pulling in malicious third-party scripts which broke the blogs completely. That was already when I was sick. I ended up just disabling that damn thing and not looking for a replacement.

In addition, the web hosting was expensive, but not really performant. And they often let essential software get out of date. My WordPress at some point had started complaining because my PHP version was too old. Turned out that the defaults for shared hosts were not upgraded to a newer version by default by the hoster, and one had to go into an obnoxious backend to fiddle with some setting somewhere to use a newer version of PHP.

I then decided to try something completely new. I exported the contents of my three blogs and set up blogs at WordPress.com, the hosted WordPress offering from the makers themselves: Automattic. I looked at their plans, and the Premium plan, which cost me 8€ per month per blog, felt suitable. I also took the opportunity to pull both German language blogs together into one. I just added two categories so that those who just want to see my technical stuff, or the private stuff, could still do so.

With that move, I got a good set of features that I would normally use on a self-hosted blog as well, so I set up some widgets, some theme that comes with the plan, and imported all my content including comments and such. I lost my statistics from the custom plugins, but hey, I had lost years of statistics from before that when I decided to no longer use JetPack on my self-hosted blogs, too, so what.

And I did two more things. I added a “Buy me a coffee” button so people could show their appreciation for my content if they wanted to. And I opted into the WordAds program, which would display some advertisement on the blog’s main page and below each individual post. I simply wanted to see if my content would be viable enough to generate any significant income.

Problems

The quick answer is: No, it isn’t. Since I started displaying ads in May, until the end of October, this blog has generated $21.52 of ad income. The German blog is even better, it generated a whopping $0.35 in the same time period. The minimum amount for Automattic to generate a payout is $100. So this blog would effectively have to run two more years before I would see the first payout. The German blog much much much longer.
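
The estimate can be checked with a few lines of Python. This is only back-of-the-envelope arithmetic, assuming ad income keeps arriving at the same average rate as in the six months from May through October; the function and constant names are mine.

```python
PAYOUT_THRESHOLD = 100.00  # minimum payout, in dollars
MONTHS_OBSERVED = 6        # May through October


def months_until_payout(earned_so_far):
    """Months still needed to reach the threshold at the observed average rate."""
    monthly_rate = earned_so_far / MONTHS_OBSERVED
    return (PAYOUT_THRESHOLD - earned_so_far) / monthly_rate


english_blog_months = months_until_payout(21.52)
german_blog_years = months_until_payout(0.35) / 12
```

Under that assumption this blog needs roughly 22 more months to reach the first payout, and the German blog well over a century.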

For full transparency: The German language readers have bought me coffee at a value of about $50, and I just received the first $25 on this blog in December, by one generous donor who bought me five at once.

And there are real problems with some of the ads that are being displayed, which put me in a moral dilemma. Some of these could be perceived as really offensive to some people or mindsets. The trouble is that I have no control over the ads that get shown. They are geographically tailored, and they are from a network I cannot make adjustments to.

Automattic do have a mechanism to report offensive ads. However, this requires that those readers who see such ads send me screenshots of said ads when they encounter them. I, myself, when logged in, do not see any ads because I am a subscriber on a paid plan. And all paid plans come without ads. But I have to be the one to report those ads to Automattic. In other words, a real chore to deal with.

If I wanted to switch to a service like Google Adsense, which would require me to install a third-party plugin, I would have to upgrade to the business plan at a whopping $25 per month per blog.

Consequences

As a consequence, once my extended advent calendar and the Christmas festivities are over, I will move blog contents once again. This time, I will move to a different web host, with more control and a very friendly, very technical, engaged team. I will run two self-hosted blogs and once again use the JetPack integration for some of the features I really like about WordPress. But I will stop displaying ads. It is clear that this is not a sustainable model for the type of content I generate. And you, the readers, deserve that this will once again be a safe space where you are not suddenly confronted with some sketchy ad content.

I was lucky enough to be able to return to work after all. But this episode clearly has shown that, despite precautions, such things can happen to me again. They did before. If that should one day be the case, and I need to generate some income through writing, the model will have to be different. There are some ideas, but since they are currently not a pressing matter, they are not more than that, ideas.

Comparison with more heavy traffic

When I compare my experience to that of my wife, who runs both a guide and a forum for the popular Sims FreePlay game in Germany, it is clear that even she with her thousands of visitors to both the guide and forum does not always generate enough traffic to reach the minimum Google Adsense payout threshold per month. And that is just enough to cover her monthly domain and server costs, because the traffic is so heavy that shared hosting cannot cope. So she has to run a dedicated virtual server for those, which is way more expensive than shared hosting.

So, ads on the web are really not a sustainable model for many. Yes, there may be some very popular and widespread (content-wise) blogs or publication sites that do generate enough revenue through ads. But the more niche your topic gets, if you don’t generate thousands of visitors per month, ads sometimes may cover the costs of a service like WordPress to run your blog, but only if you are on one of the lower plans with less control over what your blog can do or the ads that are being displayed.

I believe that a more engaged interaction with the actual audience is a better way to generate revenue, although that, of course, also depends on readers’ loyalty and your own dedication. I think that initiatives like Grant For The Web are the future of monetisation of content on the web, and I may start supporting that once my move back to self-hosting is complete. I’ll keep you posted.

https://marcozehe.de/2019/12/07/the-myth-of-getting-rich-through-ads/

Marco Zehe: Do's and don'ts on Hamburger menus |

Today, just a quick reading tip for you. Michael Scharnagl posted a great article on Wednesday about when to use Hamburger menus, when not to use them, and what to consider for each decision. And that includes accessibility. Thank you, Michael!

https://marcozehe.de/2019/12/06/dos-and-donts-on-hamburger-menus/

Daniel Stenberg: A 25K commit gift |

The other day we celebrated curl reaching 25,000 commits, and just days later I received the following gift in the mail.

The text found in that little note is in Swedish and a rough translation to English makes it:

Twenty-five thousand thanks for curl. The cake delivery failed so here is a commit mascot and some new bugs to squash.

I presumed the cake reference was a response to a tweet of mine suggesting cake would be a suitable way to celebrate this moment.

The gift arrived without any clue who sent it, but when I tweeted this image, my mystery friend Filip soon revealed himself:

Thank you Filip!

(Top image by Arek Socha from Pixabay)

Daniel Stenberg: curl speaks etag |

The ETag HTTP response header is an identifier for a specific version of a resource. It lets caches be more efficient and save bandwidth, as a web server does not need to resend a full response if the content has not changed. Additionally, etags help prevent simultaneous updates of a resource from overwriting each other (“mid-air collisions”).

That’s a quote from the Mozilla ETag documentation. The header is defined in RFC 7232.

In short, a server can include this header when it responds with a resource. In subsequent requests, when a client wants an updated version of that document, it sends back the same ETag and says “please give me a new version if it doesn’t match this ETag anymore”. The server will then respond with a 304 if there’s nothing new to return.
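To make that exchange concrete, here is a toy shell model of the decision the server makes. It is only a sketch: the function name conditional_status is made up for this illustration, and a real server also handles weak ETags, lists of ETags and "*", none of which is modelled here. The client's ETag travels in the If-None-Match request header.

```shell
# Toy model of the server's decision; not how any real server is implemented.
# conditional_status is a hypothetical name used only for this illustration.
conditional_status() {
    current_etag=$1      # ETag of the resource as it is right now
    if_none_match=$2     # value the client sent in If-None-Match ("" if none)

    if [ -n "$if_none_match" ] && [ "$if_none_match" = "$current_etag" ]; then
        echo 304         # Not Modified: the client's copy is still good
    else
        echo 200         # send the full resource together with its current ETag
    fi
}
```

Calling conditional_status '"abc123"' '"abc123"' prints 304, while a stale value such as '"old"' as the second argument prints 200.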

It is a better way than a modification time stamp to identify a specific resource version on the server.

ETag options

Starting in version 7.68.0 (due to ship on January 8th, 2020), curl will feature two new command line options that make it easier for users to take advantage of these features. You can of course try them out right now if you build from git or get a daily snapshot.

The ETag options are perfect for situations like when you run a curl command in a cron job to update a file if it has indeed changed on the server.

--etag-save

Issue the curl command and if there’s an ETag coming back in the response, it gets saved in the given file.

--etag-compare

Load a previously stored ETag from the given file and include that in the outgoing request (the file should only consist of the specific ETag “value” and nothing else). If the server deems that the resource hasn’t changed, this will result in a 304 response code. If it has changed, the new content will be returned.

Update the file if its ETag no longer matches the previously stored one:

curl --etag-compare etag.txt --etag-save etag.txt https://example.com -o saved-file
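For the cron job case, the command above could be wrapped in a small shell function that reports whether anything changed. This is a sketch under a few assumptions: fetch_if_changed is a made-up name, the temporary file naming is my own choice, and it assumes your curl version accepts an empty ETag file on the first run. It downloads to a temporary name first so that the saved copy is only replaced on a 200.

```shell
# Hypothetical helper: fetch URL into FILE only when the server's ETag changed.
# Requires curl 7.68.0 or later for the --etag-* options.
fetch_if_changed() {
    url=$1
    file=$2
    etag_file=$3

    # Assumption: an empty ETag file is acceptable to curl on the first run.
    [ -e "$etag_file" ] || : > "$etag_file"

    # Download to a temporary name so the saved copy is only replaced on success.
    tmp="$file.tmp"
    status=$(curl -s -o "$tmp" -w '%{http_code}' \
        --etag-compare "$etag_file" --etag-save "$etag_file" "$url") \
        || { rm -f "$tmp"; return 1; }

    case $status in
        200) mv "$tmp" "$file"; echo "updated" ;;
        304) rm -f "$tmp"; echo "unchanged" ;;
        *)   rm -f "$tmp"; echo "unexpected status $status" >&2; return 1 ;;
    esac
}
```

A crontab entry could then call something like fetch_if_changed https://example.com saved-file etag.txt and act only when it prints "updated".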

If-Modified-Since options

The other method to accomplish something similar is the -z (or --time-cond) option. It has been supported by curl since the early days. Using this, you give curl a specific time that will be used in a conditional request, meaning “only respond with content if the document is newer than this”:

curl -z "5 dec 2019" https://example.com

You can also invert the condition and ask for the file to get delivered only if it is older than a certain time (by prefixing the date with a dash):

curl -z "-1 jan 2003" https://example.com

Perhaps this is most conveniently used when you let curl get the time from a file. Typically you’d use the same file that you’ve saved from a previous invocation and now you want to update if the file is newer on the remote site:

curl -z saved-file -o saved-file https://example.com
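The two time conditions can be pictured with another toy shell model of the server side. Again this is only a sketch with made-up names (time_cond_status and its "newer"/"older" modes), and times are simplified to epoch seconds. A plain date makes curl send If-Modified-Since, and a dash-prefixed date makes it send If-Unmodified-Since, for which a server that follows RFC 7232 answers 412 when the condition fails.

```shell
# Toy model of how a server evaluates the two time conditions.
# time_cond_status and its mode names are hypothetical, for illustration only.
time_cond_status() {
    last_modified=$1   # when the resource last changed, in epoch seconds
    cond_time=$2       # the time given to -z, in epoch seconds
    mode=$3            # "newer" for -z "DATE", "older" for -z "-DATE"

    case $mode in
        newer)
            # If-Modified-Since: send content only if it changed after cond_time
            if [ "$last_modified" -gt "$cond_time" ]; then echo 200; else echo 304; fi ;;
        older)
            # If-Unmodified-Since: send content only if it has not changed since cond_time
            if [ "$last_modified" -le "$cond_time" ]; then echo 200; else echo 412; fi ;;
        *)
            echo "unknown mode: $mode" >&2; return 2 ;;
    esac
}
```

For example, time_cond_status 100 200 newer prints 304 because the resource did not change after the given time, while time_cond_status 200 100 older prints 412.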

Tool, not libcurl

Note that the new ETag options are built entirely in the curl tool, by using libcurl correctly with the already provided API, so this change is done entirely outside of the library.

Credits

The idea for the ETag goodness came from Paul Hoffman. The implementation was brought by Maros Priputen – as his first contribution to curl! Thanks!
