Source: http://planet.mozilla.org/.

This journal is generated from the public RSS feed at http://planet.mozilla.org/rss20.xml and is updated as that feed is updated. It may not match the content of the original page. This syndication was created automatically at the request of readers of this RSS feed.

Frédéric Harper: Coder pour l’amour de Montréal (Coding for the love of Montréal) |

On May 28 and 29, so this week, the Code(Love) hackathon will take place in Montréal at Maison Notman. With the idea of creating concrete answers to social challenges, participants of all stripes will be called upon to help our city by choosing one of three themes: women, youth and the ONE DROP challenge. Unfortunately I will not have time to spend the full two days with the participants, but I will be on site at least on the morning of the first day to talk about Firefox OS:

HTML5 is a giant step in the right direction: it brings several features that developers needed to create better web experiences more easily. It has also sparked an endless debate: native applications or web applications! In this presentation, Frédéric Harper will show you how the open web can help you create quality mobile applications. You will learn more about technologies such as WebAPIs, as well as the tools that will let you target a new market with Firefox OS and today’s web.

The idea is to present HTML as the technology of choice for the applications the hackers will create during the event, but also, at the organizers’ request, to show the power of HTML5 through Firefox OS. Given the price of these phones, there is no doubt that these devices are helping various cities respond to these well-known social challenges, so why not Montréal! See you at the hackathon: don’t forget to reserve your spot and to create a project, joining an existing team if needed. Happy hacking…

--

Coder pour l’amour de Montréal is a post on Out of Comfort Zone from Frédéric Harper

Related posts:

- HTML5 sur les stéroïdes à HTML5mtl (HTML5 on steroids at HTML5mtl): It is with immense pleasure that I will be presenting for the...

- FOXHACK, un hackathon Firefox OS à Montréal (FOXHACK, a Firefox OS hackathon in Montréal): On Saturday, September 28, there will be a hackathon for Firefox...

- Firefox OS à HTML5mtl (Firefox OS at HTML5mtl): Tuesday evening was the monthly HTML5mtl meetup and I...

|

|

Selena Deckelmann: Personal email sabbatical, July 10 – October 15 2014 |

Starting July 10, 2014, I’m planning to take a personal email sabbatical.

I’m going to unsubscribe from all mailing lists and redirect all email to my personal email addresses (chesnok.com and gmail.com) to /dev/null.

I have a couple open source project addresses that will probably be redirected to people responsible for those areas during my sabbatical. The traffic on those addresses is so low, however, that this is likely not going to impact anyone significantly.

I will not be reachable via personal email until sometime around October 15, 2014.

Why I’m doing this:

I spend too much time responding to things that other people want me to do.

I have a full-time job where I do that already, and as a result, much of my personal time where I could be creative is spent in the service of others. I’m also about to have a baby! So, it seems like as good a time as any to make an explicit departure from the communication medium that’s been the focus of my life since about 1995.

I’m not sure that I will return to having a public email address outside of work.

I plan to continue blogging, although using a much simplified blogging platform that I’ve written (but haven’t deployed yet :D). I plan to continue to use Twitter, although I’ve separated public and private accounts. I do not plan to increase my use of other social media. I plan to continue to use IRC, although will likely take myself out of most project channels. I’ve removed Twitter from my phone, and plan to remove email from it in July.

I’m taking a cue from danah boyd, although not following her prescription for a lengthy advance notice to collaborators.

I spent the last six months substantially downsizing my volunteer commitments, spending more time on in-person events that are local. I am pretty sure most people won’t even notice my extended volunteer absence on the “greater internet”. I’ll trickle out some individual notices as needed, probably to anyone I’ve had more than three exchanges with in the last two or three years.

|

|

Matt Thompson: Help test new Webmaker Badges |

(Cross-posted from the Mozilla Webmaker blog)

Will you take a few minutes to help beta test the new Super Mentor and Hive Community Member badges on staging? Then make them better by filing bugs or sharing feedback on our newsgroup.

Once these two new badges are tested and ready, they’ll unlock new privileges for community members and help us prep for Maker Party. But first: we need your help to kick the tires and test out the software.

What do we want you to test?

We want you to apply for these badges, and then display them on your Webmaker profile. Plus, for Super Mentors and admins, we need to test the process for logging in to BadgeKit, reviewing badge applications, and then issuing them. Step-by-step instructions for all that are below.

We’re just testing using fake data — none of these badges will count “for real”

You’ll be testing using our staging sandbox, not the live Webmaker.org web site. So none of the badges you apply for, issue or receive will count “for real” — we’re just testing out the flow and overall process.

What badges are ready for testing?

- The Webmaker Super Mentor badge. This badge is for mentors who have gone above and beyond in contributing to the Webmaker project. Later this summer, Super Mentors will have the ability to issue Webmaker Mentor badges and new Web Literacy badges.

- The Hive Community Member badge. This badge is for active Hive Network members that have demonstrated abilities in Peer Observation, Resource Sharing, and Process Documentation.

How do I apply for them?

Click the Apply button on either of these pages:

Where will my badges show up?

On your Webmaker profile page. You can find your profile page at a URL like this one — just replace “[username]” with your own Webmaker username: https://webmaker.mofostaging.net/user/#!/[username]

For example, this is k88hudson’s profile page.

How do I issue badges?

You must first have a Webmaker Super Mentor badge or be an administrator. To issue the Hive Community Member badge, make sure you are signed in to Persona. Click the “Issue badge” button on that page.

How do I approve badge applications?

You must have a Webmaker Super Mentor badge or be an administrator to approve badge applications. Here’s how to do it:

1) Log into Badgekit with Persona. Click on the “Applications” tab.

(If you don’t see this tab or any badge applications, contact @k88hudson or @echristensen on IRC to add you to the admin group.)

2) Click on the +[number] button to see all badge applications

3) In the “Criteria” tab, make sure you click ‘Meets criterion‘ for each criterion.

4) In the “Finish” tab, click “Submit Review” to submit your review.

I’m a Webmaker or Hive administrator. What else can I do to help test?

First, make sure you have a Webmaker admin account on mofostaging. There should be a little “admin” symbol next to your username in the top right corner of the staging site. If there isn’t, ask @k88hudson, @cade or @jbuck in #webmaker on IRC to make you an admin.

Go to https://webmaker.mofostaging.net/admin/badges to see a list of badges.

Click on a badge. Try issuing a Hive Community Member badge, or a Webmaker Super Mentor badge by using the ‘issue new badge’ input.

You can also try deleting and re-issuing a badge to yourself through the admin interface.

What’s next? When is this shipping to the live site?

We’re planning to ship everything you see here to the live site by June 13. For a full roadmap of tasks, milestones and deliverables see https://wiki.mozilla.org/Webmaker/Badges

http://openmatt.org/2014/05/26/help-test-new-webmaker-badges/

|

|

David Rajchenbach Teller: Shutting down Asynchronously, part 2 |

During shutdown of Firefox, subsystems are closed one after another. AsyncShutdown is a module dedicated to expressing shutdown-time dependencies between:

- services and their clients;

- shutdown phases (e.g. profile-before-change) and their clients.

Barriers: Expressing shutdown dependencies towards a service

Consider a service FooService. At some point during the shutdown of the process, this service needs to:

- inform its clients that it is about to shut down;

- wait until the clients have completed their final operations based on FooService (often asynchronously);

- only then shut itself down.

This may be expressed as an instance of AsyncShutdown.Barrier. An instance of AsyncShutdown.Barrier provides:

- a capability client that may be published to clients, to let them register or unregister blockers;

- methods for the owner of the barrier to let it consult the state of blockers and wait until all client-registered blockers have been resolved.
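To make the split between the client capability and the owner's methods concrete, here is a minimal sketch of how such a barrier could be modelled with plain promises. It is not the AsyncShutdown.jsm implementation: `SketchBarrier`, its private `_blockers` map and the sample blocker are hypothetical names chosen for illustration, and the sketch assumes a condition is either a promise or a function returning one.

```javascript
// Illustrative sketch only: NOT the AsyncShutdown.jsm implementation.
class SketchBarrier {
  constructor(name) {
    this.name = name;
    this._blockers = new Map(); // condition -> { name, fetchState }
    // The capability published to clients: they can register or unregister
    // blockers, but they cannot trigger or observe the wait.
    this.client = {
      addBlocker: (blockerName, condition, fetchState = () => null) => {
        this._blockers.set(condition, { name: blockerName, fetchState });
      },
      removeBlocker: (condition) => this._blockers.delete(condition),
    };
  }

  // Owner side: names and states of the blockers that are still registered.
  get state() {
    return [...this._blockers.values()].map(({ name, fetchState }) => ({
      name,
      state: fetchState(),
    }));
  }

  // Owner side: resolves once every registered blocker has completed.
  wait() {
    const pending = [...this._blockers.keys()].map((condition) =>
      Promise.resolve(typeof condition === "function" ? condition() : condition)
        .catch(() => {}) // in this sketch, a failing blocker does not block forever
        .then(() => this._blockers.delete(condition))
    );
    return Promise.all(pending);
  }
}

// Usage: a client registers a blocker, then the owner waits for it.
const barrier = new SketchBarrier("FooService: waiting for clients");
let flushed = false;
barrier.client.addBlocker("FooClient: flushing pending writes", () =>
  new Promise((resolve) => setTimeout(() => { flushed = true; resolve(); }, 10))
);
const finished = barrier.wait().then(() => flushed);
finished.then((ok) => console.log("clients done, flushed =", ok));
```

The point of the design is that clients only ever see `barrier.client`, so they cannot call `wait()` or inspect other clients' blockers.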

Shutdown timeouts

By design, an instance of AsyncShutdown.Barrier will cause a crash if it takes more than 60 seconds awake for its clients to lift or remove their blockers (awake meaning that seconds during which the computer is asleep or too busy to do anything are not counted). This mechanism helps ensure that we do not leave the process in a state in which it can neither proceed with shutdown nor be relaunched.

If the CrashReporter is enabled, this crash will report:

- the name of the barrier that failed;

- for each blocker that has not been released yet:

- the name of the blocker;

- the state of the blocker, if a state function has been provided (see AsyncShutdown.Barrier.state).
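The timeout-and-report behaviour can be sketched as a race between the blockers and a timer. This is only an illustration under simplifying assumptions: `waitOrReport` and the sample blockers are hypothetical, the sketch rejects a promise where the real module crashes the process, and it uses an ordinary wall-clock timer rather than counting only the seconds during which the computer is awake.

```javascript
// Illustrative sketch only; all names below are hypothetical.
function waitOrReport(barrierName, blockers, timeoutMs) {
  // Track the blockers that have not been lifted yet.
  const pending = new Map(blockers.map((b) => [b.name, b]));
  const all = Promise.all(
    blockers.map((b) => b.promise.then(() => pending.delete(b.name)))
  );
  let timer;
  const timeout = new Promise((_, reject) => {
    timer = setTimeout(() => {
      // Report the barrier name plus the name and state of each blocker
      // that is still pending when the timer fires.
      const report = {
        barrier: barrierName,
        blockers: [...pending.values()].map((b) => ({
          name: b.name,
          state: b.fetchState ? b.fetchState() : null,
        })),
      };
      reject(new Error(JSON.stringify(report)));
    }, timeoutMs);
  });
  return Promise.race([all, timeout]).finally(() => clearTimeout(timer));
}

// Usage: one blocker never resolves, the other resolves immediately.
const stuck = {
  name: "FooClient2: writing to disk",
  promise: new Promise(() => {}),
  fetchState: () => "Data collection complete",
};
const done = { name: "FooClient: ready", promise: Promise.resolve() };
const outcome = waitOrReport("FooService", [stuck, done], 50).catch((e) =>
  JSON.parse(e.message)
);
outcome.then((report) => console.log(report));
```

Only the blocker that is still pending appears in the report, together with whatever its state function returns at that moment.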

Example 1: Simple Barrier client

The following snippet presents an example of a client of FooService that has a shutdown dependency upon FooService. In this case, the client wishes to ensure that FooService is not shut down before some state has been reached. An example is clients that need to write data asynchronously and need to ensure that they have fully written their state to disk before shutdown, even if due to some user manipulation shutdown takes place immediately.

// Some client of FooService called FooClient

Components.utils.import("resource://gre/modules/FooService.jsm", this);

// FooService.shutdown is the `client` capability of a `Barrier`.

// See example 2 for the definition of `FooService.shutdown`

FooService.shutdown.addBlocker(

"FooClient: Need to make sure that we have reached some state",

() => promiseReachedSomeState

);

// promiseReachedSomeState should be an instance of Promise resolved once

// we have reached the expected state

Example 2: Simple Barrier owner

The following snippet presents an example of a service FooService that wishes to ensure that all clients have had a chance to complete any outstanding operations before FooService shuts down.

// Module FooService

Components.utils.import("resource://gre/modules/AsyncShutdown.jsm", this);

Components.utils.import("resource://gre/modules/Task.jsm", this);

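this.EXPORTED_SYMBOLS = ["FooService"];

// The exported service object (its other members are omitted in this snippet).

this.FooService = {};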

let shutdown = new AsyncShutdown.Barrier("FooService: Waiting for clients before shutting down");

// Export the `client` capability, to let clients register shutdown blockers

FooService.shutdown = shutdown.client;

// This Task should be triggered at some point during shutdown, generally

// as a client to another Barrier or Phase. Triggering this Task is not covered

// in this snippet.

let onshutdown = Task.async(function*() {

// Wait for all registered clients to have lifted the barrier

yield shutdown.wait();

// Now deactivate FooService itself.

// ...

});

Frequently, a service that owns an AsyncShutdown.Barrier is itself a client of another Barrier.

Example 3: More sophisticated Barrier client

The following snippet presents FooClient2, a more sophisticated client of FooService that needs to perform a number of operations during shutdown but before the shutdown of FooService. Also, given that this client is more sophisticated, we provide a function returning the state of FooClient2 during shutdown. If for some reason FooClient2’s blocker is never lifted, this state can be reported as part of a crash report.

// Some client of FooService called FooClient2

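Components.utils.import("resource://gre/modules/FooService.jsm", this);

// Task.async is used below, so Task.jsm must be imported as well.

Components.utils.import("resource://gre/modules/Task.jsm", this);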

FooService.shutdown.addBlocker(

"FooClient2: Collecting data, writing it to disk and shutting down",

() => Blocker.wait(),

() => Blocker.state

);

let Blocker = {

// This field contains information on the status of the blocker.

// It can be any JSON serializable object.

state: "Not started",

wait: Task.async(function*() {

// This method is called once FooService starts informing its clients that

// FooService wishes to shut down.

// Update the state as we go. If the Barrier is used in conjunction with

// a Phase, this state will be reported as part of a crash report if FooClient2 fails

// to shut down properly.

this.state = "Starting";

let data = yield collectSomeData();

this.state = "Data collection complete";

try {

yield writeSomeDataToDisk(data);

this.state = "Data successfully written to disk";

} catch (ex) {

this.state = "Writing data to disk failed, proceeding with shutdown: " + ex;

}

yield FooService.oneLastCall();

this.state = "Ready";

// Not bound: `Blocker.wait()` is invoked as a method, so `this` is `Blocker`.

})

};

Example 4: A service with both internal and external dependencies

// Module FooService2

Components.utils.import("resource://gre/modules/AsyncShutdown.jsm", this);

Components.utils.import("resource://gre/modules/Task.jsm", this);

Components.utils.import("resource://gre/modules/Promise.jsm", this);

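this.EXPORTED_SYMBOLS = ["FooService2"];

// The exported service object (its other members are omitted in this snippet).

this.FooService2 = {};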

let shutdown = new AsyncShutdown.Barrier("FooService2: Waiting for clients before shutting down");

// Export the `client` capability, to let clients register shutdown blockers

FooService2.shutdown = shutdown.client;

// A second barrier, used to avoid shutting down while any connections are open.

let connections = new AsyncShutdown.Barrier("FooService2: Waiting for all FooConnections to be closed before shutting down");

let isClosed = false;

FooService2.openFooConnection = function(name) {

if (isClosed) {

throw new Error("FooService2 is closed");

}

let deferred = Promise.defer();

connections.client.addBlocker("FooService2: Waiting for connection " + name + " to close", deferred.promise);

// ...

return {

// ...

// Some FooConnection object. Presumably, it will have additional methods.

// ...

close: function() {

// ...

// Perform any operation necessary for closing

// ...

// Don't hoard blockers.

connections.client.removeBlocker(deferred.promise);

// The barrier MUST be lifted, even if removeBlocker has been called.

deferred.resolve();

}

};

};

// This Task should be triggered at some point during shutdown, generally

// as a client to another Barrier. Triggering this Task is not covered

// in this snippet.

let onshutdown = Task.async(function*() {

// Wait for all registered clients to have lifted the barrier.

// These clients may open instances of FooConnection if they need to.

yield shutdown.wait();

// Now stop accepting any other connection request.

isClosed = true;

// Wait for all instances of FooConnection to be closed.

yield connections.wait();

// Now finish shutting down FooService2

// ...

});

Phases: Expressing dependencies towards phases of shutdown

The shutdown of a process takes place by phase, such as:

- profileBeforeChange (once this phase is complete, there is no guarantee that the process has access to a profile directory);

- webWorkersShutdown (once this phase is complete, JavaScript does not have access to workers anymore);

- …

Much like services, phases have clients. For instance, all users of web workers MUST have finished using their web workers before the end of phase webWorkersShutdown.

Module AsyncShutdown provides pre-defined barriers for a set of well-known phases. Each of the barriers provided blocks the corresponding shutdown phase until all clients have lifted their blockers.
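As a rough illustration of how a phase can block on its clients, the sketch below walks through a list of phases and waits for each phase's blockers before moving on. It is not Firefox code: `addPhaseBlocker`, `runShutdown` and the sample blocker are hypothetical, and the ordering of the notifications is an assumption made for this example.

```javascript
// Illustrative sketch only: hypothetical names, not Firefox code.
// The ordering of the notifications below is an assumption made for this example.
const phaseTopics = [
  "profile-change-teardown",
  "profile-before-change",
  "profile-before-change2",
  "web-workers-shutdown",
];
const blockersByTopic = new Map(phaseTopics.map((topic) => [topic, []]));

// Client side: register a blocker for one phase.
function addPhaseBlocker(topic, name, condition) {
  blockersByTopic.get(topic).push({ name, condition });
}

// Shutdown side: a phase only ends once all of its blockers have completed.
async function runShutdown(log) {
  for (const topic of phaseTopics) {
    log.push("begin " + topic);
    await Promise.all(blockersByTopic.get(topic).map((b) => b.condition()));
    log.push("end " + topic);
  }
  return log;
}

// Usage: a client that must flush its state before the profile goes away.
const log = [];
addPhaseBlocker("profile-before-change", "FooClient: flush state to the profile", () =>
  new Promise((resolve) =>
    setTimeout(() => { log.push("FooClient flushed"); resolve(); }, 10)
  )
);
const shutdownLog = runShutdown(log);
shutdownLog.then((entries) => console.log(entries.join("\n")));
```

In the resulting log, the client's flush sits between the begin and end entries of the phase it blocks.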

List of phases

AsyncShutdown.profileChangeTeardown

The client capability for clients wishing to block asynchronously during observer notification “profile-change-teardown”.

AsyncShutdown.profileBeforeChange

The client capability for clients wishing to block asynchronously during observer notification “profile-before-change”. Once the barrier is resolved, clients other than Telemetry MUST NOT access files in the profile directory and clients MUST NOT use Telemetry anymore.

AsyncShutdown.sendTelemetry

The client capability for clients wishing to block asynchronously during observer notification “profile-before-change2”. Once the barrier is resolved, Telemetry must stop its operations.

AsyncShutdown.webWorkersShutdown

The client capability for clients wishing to block asynchronously during observer notification “web-workers-shutdown”. Once the phase is complete, clients MUST NOT use web workers.

http://dutherenverseauborddelatable.wordpress.com/2014/05/26/shutting-down-asynchronously-part-2/

|

|

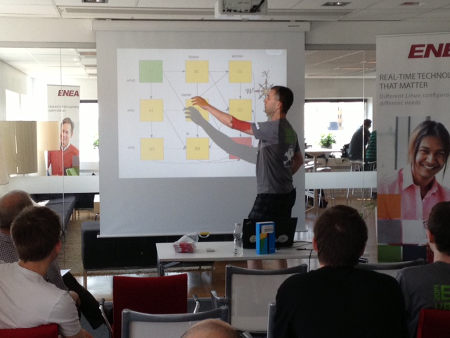

Daniel Stenberg: Hacking embedded day 2014 |

Once again our gracious sponsor Enea hosted an embedded hacking day arranged through foss-sthlm – the third time in three years at the same place with the same host. Fifty something happy hackers brought their boards, devices, screens, laptops and way too many cables to the place on a Saturday to spend it in the name of embedded systems. With no admission fee at all. Just bring your stuff, your skills and enjoy the day.

This happened on May 24th and on the outside of the windows we could identify one of the warmest and nicest spring/summer days so far this year in Stockholm. But hey, if you want to get some fun hacks done we mustn’t let those real-world things hamper us!

All attendees were given a tshirt and then they found themselves a spot somewhere in the crowd and the socializing and hacking could start. I got the pleasure of loudly interrupting everyone once in a while to say welcome or point out that a talk was about to begin…

We also collected random fun hardware pieces donated to us by various people for a hardware raffle. More about that further down.

Talk

To spice up the day of hacking, we offered some talks. First out was…

Bluetooth and Low Power radio by Mats Karlsson and during this session we got to learn a bunch about hacking extremely low power devices and doing radio for them with Arduinos and more.

We hadn’t much more than started but the clock showed lunch time and we were served lunch!

Contest

Readers of my blog and previous attendees of any of the embedded hacking days I’ve been organizing should be familiar with the embedded Linux contests I’ve made.

Lately I’ve added a new twist to my setup and I tested it previously when I visited foss-gbg and ran a contest there.

Basically it is a complicated maze/track that you walk through by answering questions, and when you reach the goal you have collected a set of words along the way. Those words should then be rearranged to form a question and that final question should be answered as fast as possible.

Since I had already blogged about and publicized my previous “Parallell Spaghetti Decode” contest, I of course had a new map this time and I altered the set of questions a bit as well, even if participants in this one can find similarities with the previous one. This kind of contest is a bit complicated, so I hand out the play field and the questions on two pieces of paper to each participant.

After only a little over seven minutes we had a winner, Yann Vernier, who could walk home with a brand new Nexus 7 32GB. The prize was, as so much else this day, donated by Enea.

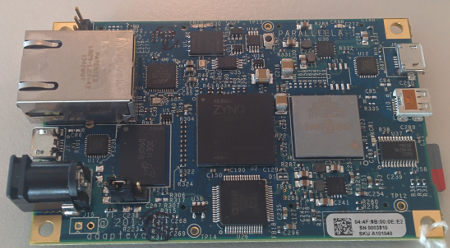

Hardware Raffle

I donated my Arduino Nano (never even unpacked) and a Raspberry Pi with SD-card that I never use. Enea chipped in with a Parallella board and we got an RFduino and a Texas Instruments Stellaris ARM-based little round robot. The Parallella board was by far the most popular device (as expected really) with the Stellaris board as second. Only 12 people signed up for the RPi…

Everyone who was interested in one of the 5 devices signed up on a list, marking each thing of interest.

We then put little pieces of paper with numbers on them in a big bowl and I got to draw 5 numbers (representing different individuals) who then won the devices. It of course turned out that we did it in a complicated way, in which I made some minor mistakes to add to the fun. In the end I believe it was at least a fair process that didn’t give any favors or weight in any particular way. I believe we got 5 happy winners.

Workshop

“How to select hardware” was the name of the workshop I led. Basically it was a one-hour group discussion about how to find, buy and order hardware for your (hobby) project, and what to do and what to avoid along the way. We discussed brands, companies, sites, buying from China or eBay, reading reviews, writing reviews, and how people go about buying things when building stuff of their own.

I think we had some good talks and lots of people shared their experiences, stories and some horror stories. Hopefully we all brought a little something with us back from that…

After that we refilled our coffee mugs and indulged in the huge and tasty muffins that magically had appeared.

Something we had learned from previous events was to not “pack” too many talks and other things during the day but to also allow everyone to really spend time on getting things done and to just stroll around and talk with others.

Instead of a third slot for a talk or another workshop we had a little wishlist in our wiki for the day, and as a result I managed to bully Björn Stenberg into the room, where he described the automated system for his warming cupboard (värmeskåp), which is basically a place to dry clothes. Using damp sensors, Arduinos and more, Björn has perfected his cupboard’s ability to dry clothes, to shut off and tell him when they are dry, and to not waste energy by continuing to heat.

With that we were in the final sprint for the day. The last commits were made. The final bragging comments described blinking LEDs. Cables were detached. Bags were filled with electronics. People started to drop off.

Only a few brave souls stayed to the very end. And they celebrated in style.

I had a great day, and I received several positive comments and feedback from participants. I hope we’ll run a similar event again soon, it’d be great to keep this an annual tradition.

The pictures in this blog post are taken by: me, Jon Aldama, Annica Spangholt, Magnus Sandberg and Jämtlands bryggerier. Thanks! More pictures can also be found in Enea’s blog posting and in the Google+ event.

Thanks also to Jon, Annica and Sofie for the hard hosting work during the event. It made everything run smoothly and without any bumps!

http://daniel.haxx.se/blog/2014/05/26/hacking-embedded-day-2014/

|

|

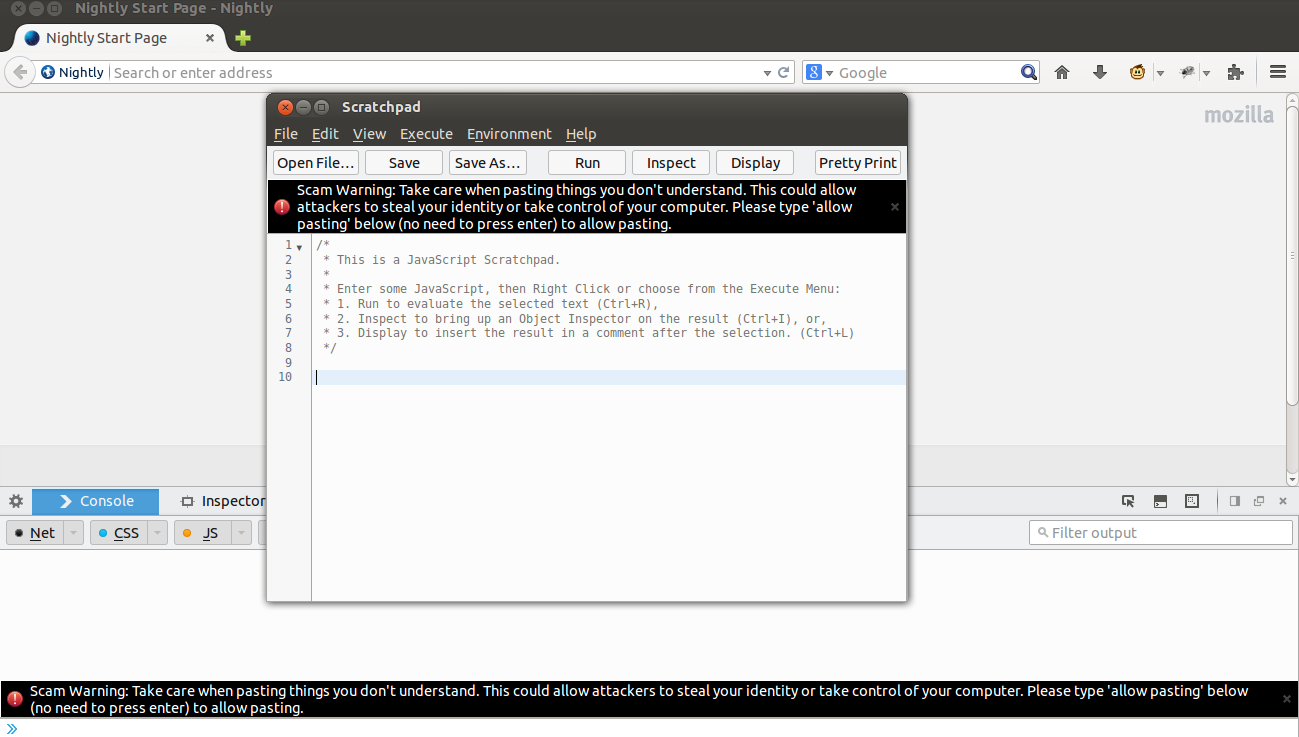

Yunier Jos'e Sosa V'azquez: Preferencias “in content” para Firefox en la versi'on Nightly |

Las preferencias “in content” o dentro del navegador traducido al espa~nol han llegado al canal Nightly de Firefox y debutar'a dentro de algunos meses para millones de usuarios. Esto significa que al configurar las opciones de Firefox no se abrir'a una ventana sino, una nueva pesta~na con las mismas funcionalidades que la ventana actual.

El intento para unificar las ventanas en Firefox no es nuevo, data desde el desarrollo de Firefox 4 cuando Mozilla dejaba ver uno de sus grandes cambios en la interfaz del panda rojo.

Del lado izquierdo tenemos todas las opciones con sus respectivas configuraciones al lado derecho. En el caso de Avanzado, una l'inea anaranjada nos indica la “pesta~na” donde nos encontramos.

Si desean probar esta funcionalidad pueden activarla de la siguiente forma:

Si desean probar esta funcionalidad pueden activarla de la siguiente forma:

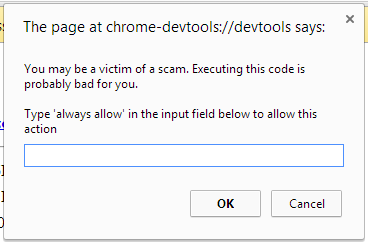

- Abrir about:config y aceptar la alerta de seguridad.

- Buscar browser.preferences.inContent y ponerlo true

Y listo, ya tendr'as preferencias “in content”, si lo prueban en versiones inferiores a la 32 encontrar'an otra interfaz, por que para probarla les recomiendo descargar la versi'on Nightly de nuestra zona de Descargas. ?Qu'e piensan al respecto? ?Les gusta?

http://firefoxmania.uci.cu/preferencias-in-content-para-firefox-en-la-version-nightly/

|

|

Robert O'Callahan: Unnecessary Dichotomy |

As the scope of the open Web has expanded we've run into many hard issues such as DRM, support for patented video codecs, and applications needing APIs that increase fingerprintability. These issues are easily but incorrectly framed as choices between "principles" and "pragmatism" --- the former prioritizing Mozilla's mission, the latter prioritizing other things such as developer friendliness for the Web platform and Firefox market share. This framing is incorrect because the latter are essential components of our strategy for pursuing our mission.

For example I believe the optimal way to pursue our goal of unencumbered video codecs is neither to completely rely on platform video codecs (achieving nothing for our goal) nor to refuse to support all patent-encumbered codecs (in the current market, pushing almost all users and developers to avoid Firefox for video playback). Instead it is somewhere in between --- hopefully somewhere close to our current strategy of supporting H.264 in various ways while we support VP8/VP9 and develop an even better free codec, Daala. If we deliberately took a stance on video that made us irrelevant, then we would fail to make progress towards our goal and we would have actually betrayed our mission rather than served it.

Therefore I do not feel any need to apologize for our positions on encumbered codecs, DRM and the like. The positions we have taken are our best estimate of the optimal strategy for pursuing our mission. Our strategy will probably turn out to be suboptimal in some way, because this is a very difficult optimization problem, requiring knowledge of the future ... but we don't need to apologize for being unomniscient either.

A related problem is that our detractors tend to view our stance on any given issue in isolation, whereas we really face a global optimization problem that spans a huge range of issues. For example, when developers turn away from the Web platform for any reason, the Web as a whole is diminished, and likewise when users turn away from Firefox for any reason our influence over most issues is diminished. So the negative impact of taking a "hyper-principled" stand on, say, patent-encumbered video codecs would be felt across many other issues Mozilla is working on.

Having said all that, we need to remain vigilant against corruption and lazy thinking, so we need to keep our minds open to people who complain we're veering too much in one direction or another. In particular I hope we continue to recruit contributors into our community who disagree with some of the things we do, because I find it much easier to give credence to contributors than to bystanders.

http://robert.ocallahan.org/2014/05/unnecessary-dichotomy.html

|

|

Rob Hawkes: Help me throw myself off a 17-story helipad for charity |

On the 22nd of June (less than a month away) I’m going to climb 17 floors to the very top of London's Air Ambulance helipad. I’ve decided it's going to be a great idea to then throw myself off the edge and abseil 56 metres back down to the ground, without a wall or anything to hang onto! Just me, a rope, and the ground.

You can support me by donating right now, or read on to find out a little more.

Are you crazy?

Probably. I'm doing it partly because it’s going to be absolutely terrifying, but also because I didn’t realise London’s Air Ambulance was a charity and relied on donations (less than 30% is funded from the NHS). What I also didn’t realise is that London is the only major city in the world with a single medical emergency helicopter. That’s just nuts. Donations help them raise funds for the new helicopter and also ensure they have all the day-to-day equipment needed to save lives in the city.

Support me and London's Air Ambulance

I’m trying to raise £500 and it’d be great if you can help me get there. I promise to take as many photos as I can of me looking terrified as I dangle over the East London skyline.

You can support me through my JustGiving page. Please don't wait for others to donate first. As Tesco would say, every little helps!

It would be even more amazing if you could share this with your friends and help raise even more money!

More about London’s Air Ambulance

- It's been running since 1989, serving 28,500 missions to date

- They provide life-saving medical interventions, such as open chest surgery, blood transfusion, and anaesthesia, all at the roadside!

- They serve the 10 million people living and working within the M25

- It's a charity that relies on donations to keep running

- Less than 30% of its funds come from the NHS

- London is the only major city with a single emergency medical helicopter, hence needing a second one

You can find out more on the official website, and don't forget to support me by donating!

Photos are from London's Air Ambulance website and Twitter account.

http://feedproxy.google.com/~r/rawkes/~3/d9WAsLEVyA8/londons-air-ambulance-abseil

|

|

Jennifer Boriss: Try Out Fira Sans: a Free, Open Source Typeface Commissioned by Mozilla |

|

|

Nick Cameron: Rust for C++ programmers - part 7: data types |

Structs

A Rust struct is similar to a C struct or a C++ struct without methods: simply a list of named fields. The syntax is best seen with an example:

struct S {

field1: int,

field2: SomeOtherStruct

}

Here we define a struct called `S` with two fields. The fields are comma separated; if you like, you can comma-terminate the last field too.

Structs introduce a type. In the example, we could use `S` as a type. `SomeOtherStruct` is assumed to be another struct (used as a type in the example), and (like C++) it is included by value, that is, there is no pointer to another struct object in memory.

Fields in structs are accessed using the `.` operator and their name. An example of struct use:

fn foo(s1: S, s2: &S) {

let f = s1.field1;

if f == s2.field1 {

println!("field1 matches!");

}

}

Here `s1` is a struct object passed by value and `s2` is a struct object passed by reference. As with method calls, we use the same `.` to access fields in both; there is no need for `->`.

Structs are initialised using struct literals. These are the name of the struct and values for each field. For example,

fn foo(sos: SomeOtherStruct) {

let x = S { field1: 45, field2: sos }; // initialise x with a struct literal

println!("x.field1 = {}", x.field1);

}

Structs cannot be recursive, that is, you can't have cycles of struct names involving definitions and field types. This is because of the value semantics of structs. So for example, `struct R { r: Option<R> }` is illegal and will cause a compiler error. If you need such a structure, use some kind of pointer; cycles with pointers are allowed:

struct R {

r: Option<Box<R>>

}

(Note, `Box<R>` is the new syntax for a unique/owning pointer; this has changed from `~R`, which was introduced a few posts back.)

If we didn't have the `Option` in the above struct, there would be no way to instantiate the struct and Rust would signal an error.
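To make the pointer-based recursion concrete, here is a minimal sketch written against current stable Rust (so `i32` replaces the post's `int`). The `value` field and the `chain_len` helper are hypothetical additions for illustration; only the `r: Option<Box<R>>` field comes from the post.

```rust
// A chain of nodes: each `R` optionally owns the next one through a Box.
// Because a Box is pointer-sized, `R` has a finite, known size.
struct R {
    value: i32,        // hypothetical payload, not in the post
    r: Option<Box<R>>, // `None` terminates the chain
}

// Count the nodes by following the `r` pointers until we hit `None`.
fn chain_len(head: &R) -> usize {
    let mut len = 1;
    let mut current = head;
    // `as_deref` turns `&Option<Box<R>>` into `Option<&R>`.
    while let Some(next) = current.r.as_deref() {
        len += 1;
        current = next;
    }
    len
}
```

The `None` case is what makes it possible to build the first node at all: a chain is constructed from the tail backwards, wrapping each finished node in `Some(Box::new(..))`.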

Structs with no fields do not use braces in either their definition or literal use. Definitions do need a terminating semi-colon though, presumably just to facilitate parsing.

struct Empty;

fn foo() {

let e = Empty;

}

Tuples

Tuples are anonymous, heterogeneous sequences of data. As a type, they are declared as a sequence of types in parentheses. Since there is no name, they are identified by structure. For example, the type `(int, int)` is a pair of integers and `(i32, f32, S)` is a triple. Tuple values are initialised in the same way as tuple types are declared, but with values instead of types for the components, e.g., `(4, 5)`. An example:

// foo takes a struct and returns a tuple

fn foo(x: SomeOtherStruct) -> (i32, f32, S) {

(23, 45.82, S { field1: 54, field2: x })

}

Tuples can be used by destructuring using a `let` expression, e.g.,

fn bar(x: (int, int)) {

let (a, b) = x;

println!("x was ({}, {})", a, b);

}

We'll talk more about destructuring next time.

Tuple structs

Tuple structs are named tuples, or alternatively, structs with unnamed fields. They are declared using the `struct` keyword, a list of types in parentheses, and a semicolon. Such a declaration introduces their name as a type. Their fields must be accessed by destructuring (like a tuple), rather than by name. Tuple structs are not very common.

struct IntPoint (int, int);

fn foo(x: IntPoint) {

let IntPoint(a, b) = x; // Note that we need the name of the tuple

// struct to destructure.

println!("x was ({}, {})", a, b);

}
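As a hedged aside for readers on a recent toolchain: current stable Rust also allows positional field access on tuple structs (`p.0`, `p.1`) alongside destructuring. The sketch below shows both; the helper names `manhattan` and `first` are made up for this example.

```rust
// A tuple struct with two unnamed fields (`i32` replaces the post's `int`).
struct IntPoint(i32, i32);

// Destructure by naming the tuple struct, as in the post.
fn manhattan(p: &IntPoint) -> i32 {
    let IntPoint(x, y) = p;
    x.abs() + y.abs()
}

// Positional access, available in current Rust.
fn first(p: &IntPoint) -> i32 {
    p.0
}
```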

Enums

Enums are types like C++ enums or unions, in that they are types which can take multiple values. The simplest kind of enum is just like a C++ enum:

enum E1 {

Var1,

Var2,

Var3

}

fn foo() {

let x: E1 = Var2;

match x {

Var2 => println!("var2"),

_ => {}

}

}

However, Rust enums are much more powerful than that. Each variant can contain data. Like tuples, these are defined by a list of types. In this case they are more like unions than enums in C++. Rust enums are tagged unions rather than untagged ones (as in C++), which means you can't mistake one variant of an enum for another at runtime. An example:

enum Expr {

Add(int, int),

Or(bool, bool),

Lit(int)

}

fn foo() {

let x = Or(true, false); // x has type Expr

}

Many simple cases of object-oriented polymorphism are better handled in Rust using enums.

To use enums we usually use a match expression. Remember that these are similar to C++ switch statements. I'll go into more depth on these and other ways to destructure data next time. Here's an example:

fn bar(e: Expr) {

match e {

Add(x, y) => println!("An `Add` variant: {} + {}", x, y),

Or(..) => println!("An `Or` variant"),

_ => println!("Something else (in this case, a `Lit`)"),

}

}

Each arm of the match expression matches a variant of `Expr`. All variants must be covered. The last case (`_`) covers all remaining variants, although in the example there is only `Lit`. Any data in a variant can be bound to a variable. In the `Add` arm we are binding the two ints in an `Add` to `x` and `y`. If we don't care about the data, we can use `..` to match any data, as we do for `Or`.
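The matching rules above can be sketched in current stable Rust as follows. Two things differ from the post's 2014 syntax and are assumptions of this sketch: variants are written with an `Expr::` prefix (required today unless they are imported with `use`), and `i32` replaces `int`. The function `describe_expr` is a hypothetical name.

```rust
enum Expr {
    Add(i32, i32),
    Or(bool, bool),
    Lit(i32),
}

// Every variant is covered explicitly, so no `_` arm is needed; the
// compiler rejects the match if a variant is left out.
fn describe_expr(e: &Expr) -> String {
    match e {
        // Bind both payload values of `Add` to `x` and `y`.
        Expr::Add(x, y) => format!("Add: {} + {} = {}", x, y, x + y),
        // `..` ignores the payload entirely.
        Expr::Or(..) => String::from("An Or variant"),
        Expr::Lit(n) => format!("Lit: {}", n),
    }
}
```

Listing each variant instead of using `_` is a design choice: if a new variant is added to `Expr` later, this function stops compiling until it handles the new case.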

Option

One particularly common enum in Rust is `Option`. This has two variants - `Some` and `None`. `None` has no data and `Some` has a single field with type `T` (`Option` is a generic enum, which we will cover later, but hopefully the general idea is clear from C++). Options are used to indicate a value might be there or might not. Any place you use a null pointer in C++ to indicate a value which is in some way undefined, uninitialised, or false, you should probably use an Option in Rust. Using Option is safer because you must always check it before use; there is no way to do the equivalent of dereferencing a null pointer. They are also more general, you can use them with values as well as pointers. An example:

use std::rc::Rc;

struct Node {

parent: Option<Rc<Node>>,

value: int

}

fn is_root(node: Node) -> bool {

match node.parent {

Some(_) => false,

None => true

}

}

There are also convenience methods on Option, so you could write the body of `is_root` as `node.parent.is_none()` or `!node.parent.is_some()`.
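A small sketch of those convenience methods in current stable Rust, assuming a `Node` shaped like the one above with an optional ref-counted parent. `parent_value` is a hypothetical helper added to show `map` on an Option.

```rust
use std::rc::Rc;

struct Node {
    parent: Option<Rc<Node>>, // `None` means this node is the root
    value: i32,
}

// The convenience method replaces the explicit `match`.
fn is_root(node: &Node) -> bool {
    node.parent.is_none()
}

// `map` runs the closure only when a parent exists, so there is no way
// to touch a missing parent by accident.
fn parent_value(node: &Node) -> Option<i32> {
    node.parent.as_ref().map(|p| p.value)
}
```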

Inherited mutability and Cell/RefCell

Local variables in Rust are immutable by default and can be marked mutable using `mut`. We don't mark fields in structs or enums as mutable, their mutability is inherited. This means that a field in a struct object is mutable or immutable depending on whether the object itself is mutable or immutable. Example:

struct S1 {

field1: int,

field2: S2

}

struct S2 {

field: int

}

fn main() {

let s = S1 { field1: 45, field2: S2 { field: 23 } };

// s is deeply immutable, the following mutations are forbidden

// s.field1 = 46;

// s.field2.field = 24;

let mut s = S1 { field1: 45, field2: S2 { field: 23 } };

// s is mutable, these are OK

s.field1 = 46;

s.field2.field = 24;

}

Inherited mutability in Rust stops at references. This is similar to C++ where you can modify a non-const object via a pointer from a const object. If you want a reference field to be mutable, you have to use `&mut` on the field type:

struct S1 {

f: int

}

struct S2<'a> {

f: &'a mut S1 // mutable reference field

}

struct S3<'a> {

f: &'a S1 // immutable reference field

}

fn main() {

let mut s1 = S1{f:56};

let s2 = S2 { f: &mut s1};

s2.f.f = 45; // legal even though s2 is immutable

// s2.f = &mut s1; // illegal - s2 is not mutable

let s1 = S1{f:56};

let mut s3 = S3 { f: &s1};

s3.f = &s1; // legal - s3 is mutable

// s3.f.f = 45; // illegal - s3.f is immutable

}

(The `'a` parameter on `S2` and `S3` is a lifetime parameter, we'll cover those soon).

Sometimes whilst an object is logically immutable, it has parts which need to be internally mutable. Think of various kinds of caching or a reference count (which would not give true logical immutability since the effect of changing the ref count can be observed via destructors). In C++, you would use the `mutable` keyword to allow such mutation even when the object is const. In Rust we have the Cell and RefCell structs. These allow parts of immutable objects to be mutated. Whilst that is useful, it means you need to be aware that when you see an immutable object in Rust, it is possible that some parts may actually be mutable.

RefCell and Cell let you get around Rust's strict rules on mutation and aliasability. They are safe to use because they ensure that Rust's invariants are respected dynamically, even though the compiler cannot ensure that those invariants hold statically. Cell and RefCell are both single threaded objects.

Use Cell for types which have copy semantics (pretty much just primitive types). Cell has `get` and `set` methods for changing the stored value, and a `new` method to initialise the cell with a value. Cell is a very simple object - it doesn't need to do anything smart since objects with copy semantics can't keep references elsewhere (in Rust) and they can't be shared across threads, so there is not much to go wrong.
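As an illustration of the caching/counting use case, here is a minimal sketch in current stable Rust. The `Counter` type and its methods are hypothetical; `Cell::new`, `get`, and `set` are the standard library calls described above.

```rust
use std::cell::Cell;

struct Counter {
    name: String,
    hits: Cell<u32>, // interior-mutable: can change through `&self`
}

impl Counter {
    fn new(name: &str) -> Counter {
        Counter { name: name.to_string(), hits: Cell::new(0) }
    }

    // Takes `&self`, not `&mut self`, yet still records the access.
    fn touch(&self) -> &str {
        self.hits.set(self.hits.get() + 1);
        &self.name
    }
}
```

A caller holding `let c = Counter::new("cache");` (no `mut`) can call `c.touch()` repeatedly and then read the count with `c.hits.get()`.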

Use RefCell for types which have move semantics, which means nearly everything in Rust; struct objects are a common example. RefCell is also created using `new` and has a `set` method. To get the value in a RefCell, you must borrow it using the borrow methods (`borrow`, `borrow_mut`, `try_borrow`, `try_borrow_mut`); these give you a borrowed reference to the object in the RefCell. These methods follow the same rules as static borrowing: you can only have one mutable borrow, and you can't borrow mutably and immutably at the same time. However, rather than a compile error you get a runtime failure. The `try_` variants return an Option: you get `Some(val)` if the value can be borrowed and `None` if it can't. If a value is borrowed, calling `set` will fail too.

Here's an example using a ref-counted pointer to a RefCell (a common use-case):

use std::rc::Rc;

use std::cell::RefCell;

struct S {

field: int

}

fn foo(x: Rc<RefCell<S>>) {

{

let s = x.borrow();

println!("the field, twice {} {}", s.field, x.borrow().field);

// let s = x.borrow_mut(); // Error - we've already borrowed the contents of x

}

let mut s = x.borrow_mut(); // OK, the earlier borrows are out of scope

s.field = 45;

// println!("The field {}", x.borrow().field); // Error - can't mut and immut borrow

println!("The field {}", s.field);

}
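For readers on a recent toolchain, here is a hedged sketch of the same dynamic borrow rules in current stable Rust. One difference from the description above: today `try_borrow` and `try_borrow_mut` return a `Result` rather than an `Option`. The helpers `read_twice` and `try_set` are hypothetical names.

```rust
use std::cell::RefCell;
use std::rc::Rc;

struct S {
    field: i32,
}

// Any number of shared borrows may be alive at the same time.
fn read_twice(x: &Rc<RefCell<S>>) -> (i32, i32) {
    let a = x.borrow();
    let b = x.borrow();
    (a.field, b.field)
}

// Attempt a mutable borrow; report failure instead of panicking.
fn try_set(x: &Rc<RefCell<S>>, value: i32) -> bool {
    let updated = match x.try_borrow_mut() {
        Ok(mut s) => {
            s.field = value;
            true
        }
        Err(_) => false, // someone else currently holds a borrow
    };
    updated
}
```

If a shared borrow is still held (for example `let held = x.borrow();`), `try_set` returns `false` and leaves the value untouched, whereas calling `x.borrow_mut()` at that point would panic.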

http://featherweightmusings.blogspot.com/2014/05/rust-for-c-programmers-part-7-data-types.html

|

|

Selena Deckelmann: Things I’ve learned from my personal trainer |

I’ve been seeing a personal trainer for the last couple of years. I injured my back pretty seriously 5-6 years ago, and finally admitted that I had a “real problem” that required doctor and other professional intervention. After x-rays and a couple months more of denial, I started an exercise program focused on weight training.

Previously, my main (and sometimes only) exercise had been running. The back problem I have caused my doctor to recommend that I never run again. At first this was an easy thing to do because running was incredibly painful. Later, I realized that running was not only a fitness thing for me, but also an important part of my mental health. I really needed another way to get in a lot of exercise.

I go to the gym 3 times a week, and I try to walk about 5 miles a day at least 3 additional days a week. Playing Ingress, and bird watching tend to make this pretty easy.

I’m now 7-months pregnant, and I still do both things. I typically don’t get any 10k days in like I did before I was pregnant, but apart from some grueling travel I did in April, I’ve been able to keep it up the entire time.

I’ve threatened to give a talk about this, but rather than wait to assemble all of this until then, I thought it would be nice to just write it down in the event it helps someone else!

My physical activity background:

I did first ballet, then karate and judo as a child. I never played any ball or team sports, and did not learn to swim other than barely keep myself afloat until I was an adult. In high school, I ran cross country for three seasons. Mostly I played the violin, at least 2 hours a day sometimes up to 5 hours a day, which is a physically tiring activity, but not aerobic (usually). I also hiked and backpacked with my family. I was able to run a 7-7:30 minute mile until a couple years after I graduated from college. In general, I thought of myself as a pretty fit person, and ran slower because no coach was yelling at me and no competitors were chasing me as I got older. The last time I seriously “trained” was for a marathon I ended up not running in about 2005. I got myself up to 18 miles and then just cycled about 8 miles a day to and from work for many years.

So, by the time I decided to get a trainer I was not in very good shape.

Here’s the stuff that I learned right away:

- I did not know how to lift things. “Lift with your legs” is what people say. I did not know what this really meant, and was doing some strange combination of using my quads and my lower back to lift heavy things. That’s pretty much exactly what you should not do.

- My shoulders were incredibly weak. Part of that was from typing all day for my work and being tense. Another part was just lack of use.

- My lack of grip strength was causing me a lot of pain in my hands and forearms. I’d overuse my wrists and hands every day, and then go back and do it again. I didn’t quite have carpal tunnel, but it was probably coming pretty soon.

- I was very embarrassed by my lack of basic knowledge in the gym. I’m pretty good with jargon, but holy shit, gym language was complicated. And didn’t map to anything I already knew. The names of the exercises were not helpful to me, and I found myself drawing lots of stick figure pictures to try to remember how to do things properly. I didn’t know the names of equipment, I couldn’t remember the difference between a barbell and a dumbbell, I could not for the life of me recall an exercise if I took more than about a week-long break from it.

- Strengthening my back causes a lot of soreness that can easily be confused with bad pain. I was terrified at first that I was going to reinjure myself and was extremely cautious about any kind of back “pain” I experienced. Over time I figured out the difference between muscle soreness and real, “don’t do that” kind of pain. But it took me nearly a year to feel confident that I can (mostly) tell the difference.

- I will never be able to perform a new exercise perfectly the first time. And I really need to be ok with that. As a person who regularly masters new technical skills with little or no help from colleagues or friends, it’s regularly an embarrassing and humbling experience to go to the gym.

- “Testing out” injuries to joints doesn’t help anything. I am able to hyperextend most of my joints, including my wrists. I’d injured my wrists and had to do pushups and things like that from my fists for many months. When I first discovered I was injured, I kept “testing” which likely was just reinjuring myself over and over. It took probably 8 weeks the first time, and maybe 4 weeks the second time to recover from wrist injuries enough that I could do open-handed pushups. Learning to avoid the avoidable reinjuries was extremely helpful.

So what are the kinds of facts that I wish I would have known earlier in my life?

- “Lift with your legs” basically means “lift by squeezing your glutes.” These are usually the largest muscles in your body, and basically they are your butt muscles. As my glutes have gotten stronger, my back pain has entirely gone away. In the last two years, I have had two incidents requiring more than a couple hours worth of recovery time. Previous to weight lifting, I’d needed sometimes 5 days to be able to stand up properly from a couch. While injured, I’d had to roll myself down to the floor and slowly push my entire body up, barely able to stand. Of course, this is specific to the injury I have. However, a lot of people sit in terrible chairs, hunched over computers all day and they could really benefit from learning how to strengthen their glutes.

- Increasing glute strength through barbell lifting from a standing position (squats, deadlifts mostly) improved my posture dramatically. I now get people saying things in passing like “you have great posture”, something that I NEVER heard for the first 35 or so years of my life.

- I need a lot of protein first thing in the morning to feel well throughout the day. I eat two eggs every morning, usually also some yogurt and fruit. Cereal does not cut it. Oatmeal can sometimes be ok if combined with a bunch of other things.

- Pain does not necessarily mean you are injured. You might just need to stretch something out.

- Lifting weights when you feel like crap often will make you feel much much better.

- It doesn’t take very long to get really strong. Within three months, I had dramatic improvements in basic stuff like being able to lift boxes of heavy stuff.

- Grip strength improves amazingly as you increase the weight of barbell and dumbbell lifts. I rarely feel pain in my hands and forearms anymore, and I have a pretty crushing grip.

- I work best with minimal verbal cues, a lot of physical cues (poking muscles, mostly) and being able to see someone try to do something before I try it. As an obsessive reader, it never occurred to me that in the physical world, I would mostly require this kind of instruction. The bigger realization was probably that demonstration was extremely effective for me, more effective than: telling me to do something, being observed doing it, and getting feedback. Part of that is my lack of gym vocabulary, but I also really need to see each exercise before I am able to mimic proper form (and ask a ton of questions).

- Foam rollers are amazing. I foam roll a bunch of things before I work out and sometimes at home. Currently I’m focused on my t-bands and quads, and sometimes I roll out parts of my arms. My elbows started pinging when I started increasing the weights I used for curls. After I was able to do curls with something like a 55-lb barbell, the tightness and pain went away.

Anyway, so that’s the stuff that I know now. I have said to myself that I wished I would have gotten into weight lifting earlier in life. But, to be honest, I don’t know that I would have been that into it without an enthusiastic teacher and a group of people encouraging me to do it. Now, I feel pretty good about independent physical activity, and I can afford to pay someone to help me stay on track.

I’m also extremely fortunate to be able to continue to lift while I’m quite pregnant. I’ve dramatically reduced the weight I lift for squats and deadlifts, after my doctor freaked out and told me I really shouldn’t be lifting so much.

If I ever get pregnant again, I might try to do some research and figure out what my real limits should be on this. Some current wisdom on this says that you should only do lifts that work your abdominals and lower body at about 60% of your max while pregnant. I have no idea where that comes from, and sincerely wish there was more than just hearsay and fear related to lifting while pregnant. There’s of course a lot of documentation of how bad injuries to a woman’s pelvic floor can be while pregnant, so there’s real science backing up the urge for caution. But if you’re otherwise very strong, I’m not sure that the freakouts about specific weight are warranted (if you’re healthy and not trying to achieve personal bests :D) any more than freaking out about women running until they give birth is called for.

I’ve been also thinking about the ways in which gym discipline has affected how I think about teaching and programming, but that’s a topic for another post!

|

|

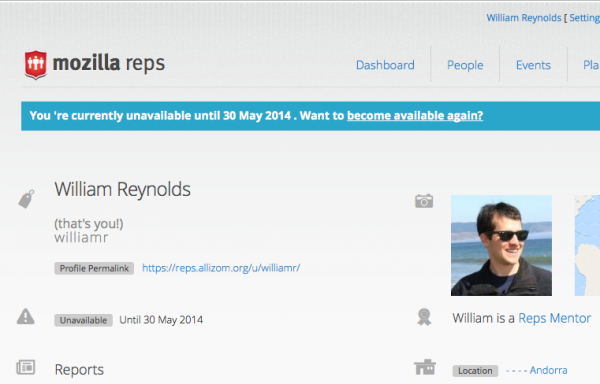

Mozilla Reps Community: Reps, let others know when you are unavailable |

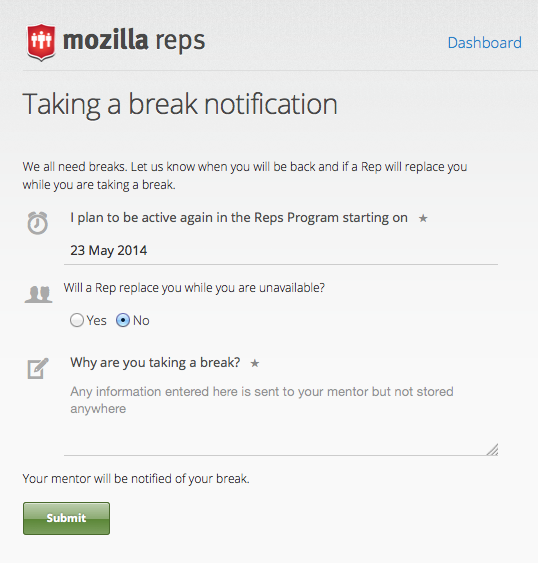

As Reps who are doing many things, we sometimes need a break from our activities. To make it easy to communicate those breaks, you can quickly complete a form to update your profile and let your mentor know when you will be unavailable for a few weeks or more.

When you want to start your unavailability period, submit a break notification. You can navigate to the form from the Settings page when you are signed in on reps.mozilla.org.

Once you submit a break notification

- Your mentor receives an email notification that you will be unavailable

- Your profile is updated to show you are unavailable until the date you provided

- The Dashboard page for your mentor and other Mozillians shows you as unavailable

- You and your mentor will receive an email reminder the day before you specified you would be available again

At the end of your unavailability period, you will need to indicate that you are ready to resume your activities. The reminder email will prompt you to visit the Settings page to confirm you are ready to resume your Reps activities. If you need more time, no action is needed. Just return to the Settings page when you are ready and click ‘Resume your activities’.

This feature is a result of the planning that happened at the Reps Leadership Meetup in February. Thanks to everyone who helped from the conception to coding to release!

If you find any issues or odd behavior while using this new functionality, file a bug and we’ll take a look at it.

https://blog.mozilla.org/mozillareps/2014/05/23/reps-let-others-know-when-you-are-unavailable/

|

|

Ben Hearsum: This week in Mozilla RelEng – May 23rd, 2014 |

Major highlights:

- Hal presided over the first batch of machine moves from SCL1 to SCL3. The move was nearly seamless, and only resulted in a small reduction of build capacity.

- Jordan got a patch ready that allows disabling of slaves through SlaveAPI. When landed, this will allow sheriffs to deal with problematic slaves more quickly and easily (and possibly automatically).

- Armen got us running Gaia OOP and reftest sanity tests on b2g desktop builds.

- Aki changed how we chunk some test jobs. This change has no functional impact, but improves performance on our Buildbot masters in a few ways, which have been very problematic lately.

Completed work (resolution is ‘FIXED’):

- General Automation

- Create 4 more linux64 tests masters

- stop running trace-malloc tests

- Add ‘hsb’ to the Firefox build

- eng+noneng nightlies upload MARs (and maybe other files) that stomp on each other

- Gaia-Try is seeing 404s while downloading test zip files.

- kill inari, leo builds

- disable gating on mac builder for servo

- Do debug B2G desktop builds

- Do we need desktop Firefox nightlies on the b2g26 (v1.2) branch anymore?

- Increase filesize limit for mac signing

- b2g balrog submission should point at dated dirs, not latest-*

- servo linux builder needs /tools/gcc-4.7.3-0moz1/bin in its PATH

- Add a MOZ_AUTOMATION environment to all builds

- Run Gaia unit oop and reftest sanity oop for b2g desktop on trunk

- Rename B2G Desktop “R” runs to something else, since they’re only reftest-sanity (not a full reftest run)

- Manage repo checkout directly from b2g_build.py

- change how we chunk in desktop_unittest.py

- rust needs newer xcode to build on servo build slaves

- make device build bits more identifiable on public ftp

- [mozharness] move gaia_test.py from “mozharness/base” to “mozharness/mozilla” directory

- Loan Requests

- Loan talos-linux64-ix-001 to dminor, ctalbert & gbrown

- Please loan tst-linux64- instance to dminor

- Slave loan request for a VS2013 build machine

- please loan jmaher an entire IX machine (4) of linux64 talos testers

- Other

- Deprecate tinderbox-builds/old directories for desktop & mobile

- Please grant :glandium ssh access to releng infra

- Switch in house try builds to S3 for sccache

- Releases

- Repos and Hooks

- The prevent_webidl_changes hook must ignore backouts

- Make the prevent_webidl_changes hook more relaxed about backout messages

- Create repositories for Nexus 4/5 prebuilt kernels for B2G

- Add a repository hook to enforce new review requirements for changing .webidl files

- New git repositories to mirror

- “sr=[DOM peer]” should be allowed as well as “r=[DOM peer]” for webidl changes

- Tools

- Add mochitest-dt to Trychooser

- Blobber fails to upload .extra files due to “File type not allowed on server!”

- TryChooser is showing “N/A” for all queues

- Allow |-p linux64-valgrind| to be specified in trychooser

- Make “mochitest-browser” a synonym for “mochitest-bc” in try syntax

- trychooser’s -e and -f emails about finished jobs only work for MoCo employees who use their MoCo address

- Blobber is spamming logs with requests.exceptions.SSLError: [Errno 1] _ssl.c:504: error:14090086:SSL routines:SSL3_GET_SERVER_CERTIFICATE:certificate verify failed

In progress work (unresolved and not assigned to nobody):

- Buildduty

- General Automation

- include device in fota mar filenames

- Make blobber uploads discoverable

- [Dolphin] Create Dolphin builds for 1.4

- Remove the need to create Puppet changes for BuildSlaves*.py.erb, production_config.py and production-master.json

- Update Gu job to build Gaia with DESKTOP_SHIMS=0

- Start doing mulet builds

- Run mozbase unit tests from test package

- Don’t require puppet or DNS to launch new instances

- Provide B2G Emulator builds for Darwin x86

- Figure out the correct path setup and mozconfigs for automation-driven MSVC2013 builds

- Put ccache on SSDs

- switch b2g builds to use aus4.mozilla.org as their update server

- Use properties to handle chunking

- Schedule mochitest-oop against linux64_gecko on trunk branches

- create in-tree CA pinning preload list

- Add support for webapprt-test-chrome test jobs & enable them per push on Cedar

- Stop running cppunit and jittest on OS X 10.8 on all branches

- [Dolphin] Need a way to build Dolphin builds for 1.4

- Enable tests for debug B2G desktop builds as soon as they are green on Cedar

- Blobber upload fails for compressed files on Windows.

- Clean up b2g names in our configs

- Loan Requests

- Connect a linux and mac slave to dev-master1:8950 for pkewisch

- Need a bld-lion-r5 to test build times with SSD

- loan linux64 ec2 slave to jmaher

- Loan :sfink a linux64 b-linux64-hp-0024

- Other

- Platform Support

- Deploy hg.m.o/build/buildbot production-0.8 to buildslaves to pick up bug 961075

- scl1 Move Train A releng config Work

- FHR on Android should not be attempting to connect to the internet in automation jobs

- Windows slaves often get permission denied errors while rm’ing files

- Cleanup temporary files on boot

- Release Automation

- release l10n repacks failed due to failed “rm”

- release automation can’t update balrog blobs during the update step

- cache MAR + installer downloads in update verify

- Releases

- Trim rsync modules (May 2014 OMG we still have to do this edition)

- do a staging release of Firefox and Fennec 31.0b1

- Releases: Custom Builds

- Repos and Hooks

- Tools

- [Tracking bug] – Assisted/Auto Landing from Bugzilla to tip of $branch

- vcs-sync needs to populate mapper db once it’s live

- implement “disable” action in slaveapi

- Initial (v0) Deploy of Slaveloan tool

- Trychooser should not select opt/debug by default and leave the user to choose

- Transplant tool (Hg to Hg)

- db-based mapper on web cluster

- Create a Comprehensive Slave Loan tool

- kill b2g18 + b2g18_v1_1_0_hd

http://hearsum.ca/blog/this-week-in-mozilla-releng-may-23rd-2014/

|

|

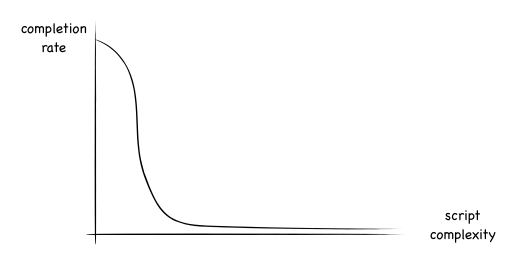

Andrew Halberstadt: When would you use a Python mixin? |

|

|

Brian Warner: The new Sync protocol |

(This wraps up a two-part series on recent changes in Firefox Sync, based on my presentation at RealWorldCrypto 2014. Part 1 was about problems we observed in the old Sync system. Part 2 is about the protocol which replaced it.)

Last time I described the user difficulties we observed with the pairing-based Sync we shipped in Firefox 4.0. In late April, we released Firefox 29, with a new password-based Sync setup process. In this post, I want to describe the protocol we use in the new system, and its security properties.

(For the cryptographic details, you can jump directly to the full technical definition of the protocol, which we’ve nicknamed “onepw”, since there is now just “one password” to protect both account access and your encrypted data)

Design Constraints

To recap the last post, the biggest frustration we saw with the old Sync setup process was that it didn’t “work like other systems”: users *thought* their email and password would be sufficient to get their data back, but in fact you need access to a device that was already attached to your account. This made it unsuitable for people with a single device, and made it mostly impossible to recover from the all-too-common case of losing your only browser. It also confused people who thought email+password was the standard way to set up a new browser.

In addition, we’ve been building a new system called Firefox Accounts, aka “FxA”, which will be used to manage access to Mozilla’s new server-based features like the application marketplace and FirefoxOS-specific services.

So our design constraints for the new Sync setup process were:

- must work well with Firefox Accounts

- must sign in with traditional email and password: no pre-connected device necessary

- all Sync data must be end-to-end encrypted, just like before, using a key that is only available to you and your personal devices

Firefox Accounts: Login + Keys

To meet these constraints, we designed Firefox Accounts to both support the needs of basic login-only applications, *and* provide the secret keys necessary to safely encrypt your Sync data, while using traditional credentials (email+password) instead of pairing.

For login, FxA uses BrowserID-like certificates to affirm your control over a GUID-based account identifier. These are used to create a “Backed Identity Assertion”, which can be presented (as a bearer token) to a server. The Sync servers require one of these assertions before granting read/write access to the encrypted data they store.

Each account also manages a few encryption keys, one of which is used to encrypt your Sync data.

What Does It Look Like?

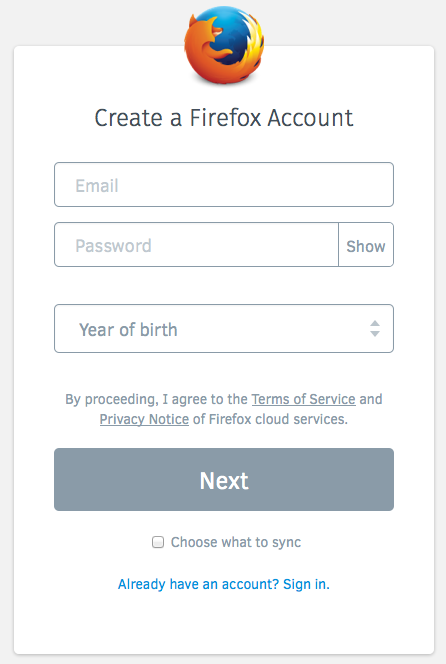

In Firefox 29, when you set up Sync for the first time, you’ll see a box that asks for an email address and a (new) password:

You fill that out, hit the button, then the server sends you a confirmation email. Click on the link in the email, and your browser automatically creates an encryption key and starts uploading ciphertext.

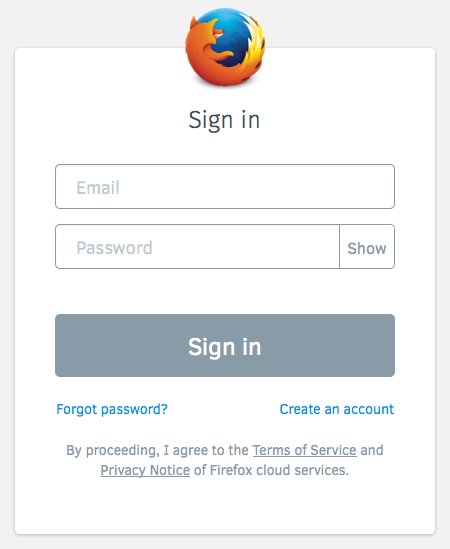

Connecting a second device to your account is as simple as signing in with the same email and password:

The Gory Details

This section describes how the new Firefox Accounts login protocol protects your password, the Sync encryption key, and your data. For full documentation, take a look at the key-wrapping protocol specs and the server implementation.

This post only describes how the master “Sync Key” is managed. To learn about how the individual records are encrypted (which hasn’t changed), take a look at the storage format docs.

Encryption Keys

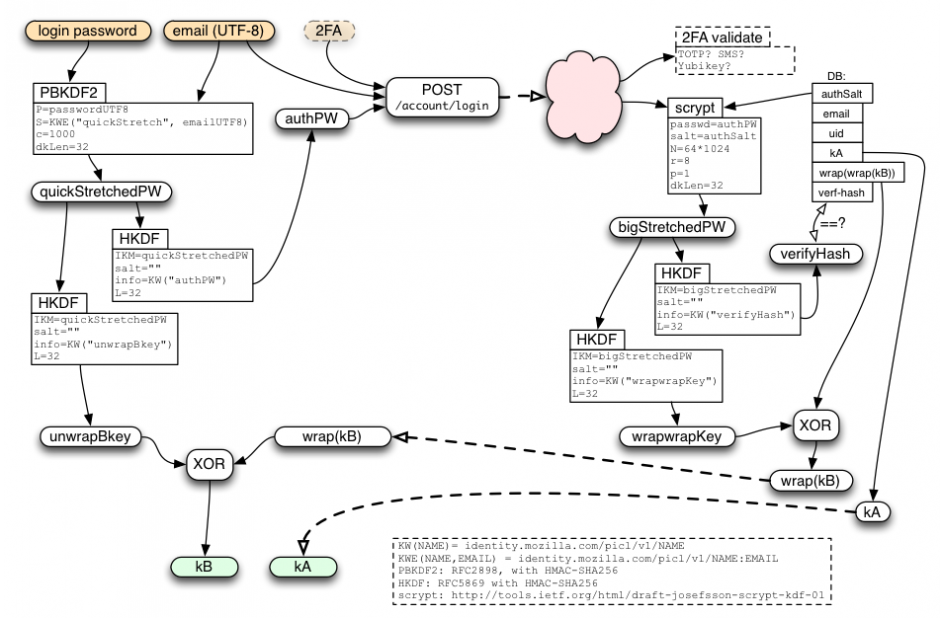

Each account has two full-strength 256-bit encryption keys, named “kA” and “kB”. These are used to protect two distinct categories of data: recoverable “class-A”, and password-protected “class-B”. Nothing uses class-A yet, so I’ll put that off until a future article.

Sync data falls into class B, and uses the kB key, which is protected by your account password. In technical terms, the FxA server holds a “wrapped copy” of kB, which requires your password to unwrap. Nobody knows your password but you and your browser, not even Mozilla’s servers. Not even for a moment during login. The same is true for kB.

To access any data encrypted under kB, you must remember your password. This means that anyone who doesn’t know the password can’t see your data.

If you forget the password, you’ll have to reset the account and create a new kB, which will erase both the old kB and the data it was protecting. This is a necessary consequence of properly protecting kB with the password: if there were any other way for you to recover the data without the password, then a bad guy could do the same thing.

kB is a “root” key: it isn’t used directly. Instead, we derive a distinct subkey for each application (like Sync) that wants to encrypt class-B data. That way, applications are prevented from decrypting data that wasn’t meant for them. Sync is the only application we have so far, but we may add more in the future.

Keeping your secrets safe

To make sure your Sync data is really end-to-end encrypted, we must prevent anyone else from figuring out your password, otherwise they could learn kB and decrypt your data. “End-to-end” means we even have to exclude our own server from learning your password. The server is usually on your side, but we’d like to maintain security even if it gets compromised. A compromised server is the most powerful kind of attack we can handle. So if we can keep your password away from our server, we can keep it away from any attackers too.

We use multiple layers of security to protect your password. To start with, the server is never told your raw password: you must prove that you know the password, but that’s not the same thing as revealing it. The client sends a hashed form of the password instead.

This hashed form uses “key-stretching” on the password before sending anything to the server, to make it hard for a compromised server to even attempt to guess your password. This stretching is pretty lightweight (1000 rounds of PBKDF2-SHA256), but only needs to protect against the attacker who gets to see the stretched password in-flight (either because they compromised the server, or they’ve somehow broken TLS).

Finally, the data stored on the server is stretched even further, to make a static compromise of the server’s database less useful to an attacker. This uses the “scrypt” algorithm, with parameters of (N=64k, r=8, p=1). At these settings, scrypt takes 64MB of memory, and about 250ms of CPU time.
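The 64MB figure follows from the parameters: scrypt’s main working array holds N blocks of 128 × r bytes each. The sketch below checks that arithmetic and shows one way to run scrypt with these settings through Python’s `hashlib.scrypt`. The function names and the `maxmem` headroom are choices made for this example, and `hashlib.scrypt` requires a Python build linked against OpenSSL 1.1 or later.

```python
import hashlib


def scrypt_memory_bytes(n: int, r: int) -> int:
    """Approximate size of scrypt's main working array."""
    return 128 * n * r


def server_stretch(stretched_password: bytes, salt: bytes,
                   n: int = 64 * 1024, r: int = 8, p: int = 1) -> bytes:
    # OpenSSL's default memory cap is typically too low for these
    # parameters, so allow twice the size of the working array.
    return hashlib.scrypt(stretched_password, salt=salt, n=n, r=r, p=p,
                          maxmem=2 * scrypt_memory_bytes(n, r), dklen=32)
```

With N=64k and r=8, `scrypt_memory_bytes` gives 128 × 65536 × 8 bytes, which is exactly 64 MiB.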

This complicated diagram shows how the password is processed before sending anything to the server, and then used to unwrap the server’s response when it gets back:

Security Properties

Sync retains the same end-to-end security that it had before. The difference is that this security is now derived from your password, rather than pairing. So your security depends upon having a good password: if someone can guess it, they’ll be able to connect their own browser to your account and then see all the Sync data you’ve stored there.

On the flip side, by using passwords, you can connect a new browser to your account without having an existing device nearby to pair with, and you can even access your Sync data after losing your last device, neither of which was possible with the old pairing process.

How Hard Is It For Someone To Guess My Password?

The main factor is how well you generate the password. The best passwords are randomly generated by your own computer. The process you use (or rather the process that an attacker thinks you used) determines a set of possible passwords, which an attacker would need to try, one at a time, until they find the right one. Hopefully this set is very very large: billions or trillions at least.
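To make the size of that set concrete, here is a small sketch that generates a random password with Python’s `secrets` module and computes how many candidates an attacker would face. The 62-character alphabet and the default length of 12 are arbitrary choices for this example.

```python
import math
import secrets
import string

ALPHABET = string.ascii_letters + string.digits  # 62 symbols


def generate_password(length: int = 12) -> str:
    """Pick each character independently and uniformly at random."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def search_space(alphabet_size: int, length: int) -> int:
    """Number of equally likely passwords the attacker must consider."""
    return alphabet_size ** length


def entropy_bits(alphabet_size: int, length: int) -> float:
    """The same quantity expressed in bits."""
    return length * math.log2(alphabet_size)
```

Twelve characters from this alphabet give 62^12 possibilities (roughly 3 × 10^21, or about 71 bits), far beyond the “billions or trillions” minimum.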

The difficulty of testing each guess depends upon what the attacker has managed to steal. Regular attackers out on the internet are limited to “online guessing”, which means they just try to sign in with your email address and their guessed password, as if they were a regular user, and see whether it works or not. This is rate-limited by the server, so they’ll only get dozens or maybe hundreds of guesses before the server cuts them off.

An attacker who gets a copy of the server’s database (perhaps through an SQL injection attack, the sort you read about every month or two) has to spend about a second of computer time for each guess, which adds up when they must try a lot of them. The most serious kind of attack, where the bad guy gets full control of the server and can eavesdrop on login requests, yields an easier offline guessing attack (PBKDF2 rather than scrypt) which could be made cheaper with specialized hardware.
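A back-of-the-envelope sketch of what “adds up” means for the stolen-database case. The one-second-per-guess figure comes from the text above. The candidate counts and the number of machines are hypothetical inputs, and the function name is made up for this example.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def years_to_exhaust(candidates: int, seconds_per_guess: float,
                     machines: int = 1) -> float:
    """Time to try every candidate; on average the hit comes halfway."""
    return candidates * seconds_per_guess / machines / SECONDS_PER_YEAR
```

At one second per guess, a set of a trillion candidates takes a single machine tens of thousands of years to exhaust, while a set of only a million falls in under two weeks. That gap is why the size of the set matters far more than the cost of any single guess.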

The security of old Sync didn’t depend upon a password, because the pairing protocol it used meant there were no passwords.

Can I Change My Password?

Of course! From the Preferences menu, choose the Sync tab, and hit the “Manage” link. That will take you to the “Manage account” page, which has a “Change password” link, where you can just type your old and new passwords. Changing your password will automatically disconnect any other browsers that were syncing with your account. You’ll need to re-sign-in on those browsers before they’ll be able to resume syncing. All of your server-side data will be retained.

What Happens If I Forget My Password?

If you can’t remember your password, you’ll have to reset your account (by using the “Forgot password?” link from the login screen). This will send you a password-reset confirmation email with a link in it. Click on the link, and you’ll be taken to a page where you can set up a new password. As with changing your password, this will disconnect all browsers from your account, so once you’ve finished the reset process, you’ll need to sign back into each browser with your new password.

Resetting the account will necessarily erase any data stored on the server. To be precise, the old data is irretrievable (it was encrypted by a key that was wrapped by your now-forgotten password; and since you can’t recover that key, you can’t recover the data either), and the Sync storage server will erase the old data when it learns that you’re using a new encryption key.
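The sketch below illustrates why a forgotten password makes the old key unrecoverable. It assumes, purely for illustration, that the server stores kB XORed with a 32-byte value derived from the stretched password. The derivation, the `example-unwrap:` label, and the function names are hypothetical.

```python
import hashlib


def unwrap_key_from_password(stretched_password: bytes) -> bytes:
    # Hypothetical derivation of a 32-byte wrapping key for this sketch.
    return hashlib.sha256(b"example-unwrap:" + stretched_password).digest()


def xor_bytes(a: bytes, b: bytes) -> bytes:
    if len(a) != len(b):
        raise ValueError("inputs must have the same length")
    return bytes(x ^ y for x, y in zip(a, b))


def wrap_kb(kb: bytes, stretched_password: bytes) -> bytes:
    """What the server would store: useless without the password."""
    return xor_bytes(kb, unwrap_key_from_password(stretched_password))


def unwrap_kb(wrapped: bytes, stretched_password: bytes) -> bytes:
    """XOR is its own inverse, so unwrapping repeats the same operation."""
    return xor_bytes(wrapped, unwrap_key_from_password(stretched_password))
```

With the right password the original kB comes back. With any other password the result is just a different 32-byte string, and nothing on the server indicates which one is correct.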

If you reset your account from a browser that was already syncing and up-to-date, then after you reconnect, your browser will simply repopulate the server with your bookmarks/etc, and nothing will be lost. It’s also fine to reset your account from one (empty) browser, then reconnect a second (full) browser: your data will be merged, and everything will eventually be available on both devices.

The one case where you can’t recover your old data is if you lose or break your only device and also forget your password. In this case, when you reset your account from a new (empty) browser, then your old Sync data is lost, and you’ll have to start again from a blank slate. You may want to write down your password in a safe place at home to avoid this, sort of like leaving a spare housekey with a trusted neighbor in case you lose your own.

What If I’m Already Running Sync?

If you’ve been using Sync for a while now, you probably set up Sync with the pairing scheme from Firefox 28 or earlier. Never fear! Your browsers will continue to sync with each other even after you upgrade some or all of them to FF29.

If you’re still running FF28, the FF24 ESR (Extended Support Release), or another pre-FF29 browser, you can still use the pairing flow to connect additional old browsers. We’ll support this flow until at least the end of the ESR maintenance period (14-Oct-2014), maybe a bit longer, but eventually we’ll shut down the servers necessary to support the old pairing flow, and pairing will stop working. We hope to have a new pairing system in place by then: see below.

Likewise, after most users have migrated to New Sync, and everyone has been given fair notice to upgrade, the old-style Sync storage servers will eventually be shut down. But for now, existing Sync users don’t need to make any changes.

However, pairing-based Old Sync and password-based FxA-powered New Sync don’t mix: if you used pairing to connect two FF28 browsers together, you won’t be able to connect a third FF29 browser to them, even if you upgrade them all to FF29. You’ll need to move everything to FxA to connect all three:

- upgrade everything to FF29

- disconnect both old browsers from Sync

- create a Firefox Account

- sign all three browsers into your new account with the same email and password

This process won’t lose any data: everything will be merged together in the new account.

Future Directions

We’re working on adding two-factor authentication (“2FA”) to Firefox Accounts. If you enable this, you’ll need to type in an additional code (generally provided by your mobile phone) when you log in. The two main options we’re investigating are TOTP one-time passwords (e.g. the Google Authenticator app), and SMS codes.
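For readers unfamiliar with TOTP, here is a minimal sketch of how such codes are computed according to RFC 6238 (built on HOTP, RFC 4226), using only the Python standard library. It shows the general algorithm that apps like Google Authenticator implement, not Firefox Accounts code. The 30-second step and 6-digit length are the common defaults.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HOTP (RFC 4226): HMAC-SHA1 of the counter, dynamically truncated."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, now=None, step: int = 30, digits: int = 6) -> str:
    """TOTP (RFC 6238): HOTP with the counter taken from the clock."""
    if now is None:
        now = time.time()
    return hotp(secret, int(now) // step, digits)
```

The phone and the server share `secret` and each compute the code from the current time, so the server can check the code you type without any message being sent to the phone. With the RFC 6238 test secret `b"12345678901234567890"`, time 59 and 8 digits, the code is `94287082`.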

We are also looking for ways to re-introduce pairing as an optional feature, after the main login. This might use an additional key “kC”, which is only transferred via pairing. Once enabled, to set up Sync on a new device, you would need to grant it permission from an old device that is already connected. We think we can make the pairing experience better than it was before, because we’ll have more information to work with (you’ve already logged in, so we know which other devices might be available for pairing).

Conclusion

The new Sync sign-up process is now live and adding thousands of users every day. The password-based login makes it possible to use Sync with just a single device: as long as you can remember the password, you can get back to your Sync data. It still encrypts your data end-to-end like before, but it’s important to generate a good random password to protect your data completely.

To set up Sync, just upgrade to Firefox 29, and follow the “Get started with Sync” prompts on the welcome screen or the Tools menu.

Happy Syncing!

(Thanks to Karl Thiessen, Ryan Kelly, Chris Karlof, and Daniel Kahn Gillmor for their invaluable feedback. Cross-posted to my home blog.)

https://blog.mozilla.org/warner/2014/05/23/the-new-sync-protocol/

|

|

Tarek Ziadé: Data decentralization & Mozilla |

The fine folks at the Mozilla Paris office took the opportunity of our presence (Alexis, Remy and myself) to organize a "Meet the Cloud Services French Team" event last night. Among all the discussions we had, one topic came back several times during the evening.

How do we let people who use our services host their data anywhere they want?

I built the first Python version of the Firefox Sync server, so I had an answer already - you can tweak your browser configuration to point to your own server.

But self-hosting your Sync server requires quite a bit of knowledge. I provided a Makefile to build the server back in the day, but the amount of work needed to set everything up was still significant.

And it got bigger with the new Sync version, because we've added dependencies to other services for authentication purposes. Our overall architecture is getting better but self-hosting Firefox Sync is getting harder.

Alexis is quite excited about trying to improve this situation, and suggested building Debian packages to make the process as easy as "apt-get install firefox-sync".

There was also discussion around remoteStorage, and the more I look at it, the more I feel like a product like Firefox Sync could rely on a remoteStorage server. That would make self-hosting straightforward.

The only thing that's still unclear to me is whether remoteStorage is heavily tied to OAuth or whether we can plug in our own authentication process (e.g. Firefox Account tokens).

Another problem I see: it's easy to build client-side applications that interact directly with a remoteStorage server, but sometimes you do have to provide server-side APIs. In that case, I am wondering how convenient it would be for a web service to interact with a 3rd party remoteStorage server on behalf of a user. If both parts are different entities, it's a recipe for technical nightmares.

It feels in any case that those topics are going to be very important for the web in the upcoming months, and that Mozilla needs to play an important role there.

Looking forward to seeing what we'll do in this area.

Tristan Nitot, who came by during the meeting, sparked this discussion and is planning to organize recurring meetings on the topic at the Paris community space, helped by Claire and Axel. There are also zillions of other cool things happening at the Paris space this summer. Like, several meetings per week. I'll try to update this blog post whenever I find a good link to the events list.

http://blog.ziade.org/2014/05/23/data-decentralization-amp-mozilla/

|

|

Laura Hilliger: This Week in Webmaker Training: Building |

via azharfeder in Webmaker Training

Participating in this kind of open, online learning experience is like learning to ride a bicycle. It might be difficult to get used to how this thing works and to stay on it, but once you’ve learned to ride, there are endless possibilities of places to go and people to ride with.

We are making interesting things:

- Mick Fuzz took all of the Webmaker Training materials and compiled them into an eBook, and he’s clearly documenting his steps so that anyone can make eBooks from Web resources.

- Jlweichler made an epic Processing Teaching Kit

- Julia shared an activity that will get you speed dating Web Mechanics

- Much to Valebb

http://www.zythepsary.com/education/this-week-in-webmaker-training-building/

|

|

Sylvestre Ledru: Changes Firefox 30 beta5 to beta6 |

- 30 changesets

- 77 files changed

- 788 insertions

- 643 deletions

| Extension | Occurrences |

| cpp | 39 |

| h | 15 |

| js | 8 |

| css | 4 |

| mm | 2 |

| xml | 1 |

| webidl | 1 |

| mozbuild | 1 |

| m4 | 1 |

| jsm | 1 |

| java | 1 |

| ini | 1 |

| html | 1 |

| cc | 1 |

| Module | Occurrences |

| js | 22 |

| content | 13 |

| browser | 9 |

| editor | 6 |

| dom | 6 |

| services | 4 |

| widget | 3 |

| layout | 3 |

| gfx | 3 |

| embedding | 3 |

| build | 2 |

| testing | 1 |