You can add any RSS source (including a LiveJournal journal) to your friends feed on the syndication page.

Source: http://planet.mozilla.org/.

This journal is generated from the public RSS feed at http://planet.mozilla.org/rss20.xml and is updated as that feed is updated. It may not match the content of the original page. The syndication was created automatically at the request of readers of this RSS feed.

For any questions about this service, use the contact information page.

Mozilla Addons Blog: Extensions in Firefox 72 |

After the holiday break we are back with a slightly belated update on extensions in Firefox 72. Firefox releases are changing to a four-week cycle, so you may notice these posts getting a bit shorter. Nevertheless, I am excited about the changes that have made it into Firefox 72.

Welcome to the (network) party

Firefox determines if a network request is considered third party and will now expose this information in the webRequest listeners, as well as the proxy onRequest listener. You will see a new thirdParty property. This information can be used by content blockers as an additional factor to determine if a request needs to be blocked.
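As a rough illustration of how a content blocker might use the new property, here is a minimal sketch. The blocking policy (cancel third-party scripts and images) and the names `RequestDetails` and `shouldBlock` are assumptions made for this example; only `thirdParty` and the `webRequest` listener come from the text above.

```typescript
// Assumption: `browser` is the WebExtension global that Firefox provides to
// extension scripts. It is declared here only so the sketch type-checks.
declare const browser: any;

// Minimal shape of the request details used below (hypothetical type name).
interface RequestDetails {
  url: string;
  type: string;
  thirdParty?: boolean; // undefined on Firefox versions before 72
}

// Hypothetical policy: cancel third-party script and image requests only.
function shouldBlock(details: RequestDetails): boolean {
  const blockedType = details.type === "script" || details.type === "image";
  return details.thirdParty === true && blockedType;
}

// Register the listener only when running inside an extension.
if (typeof browser !== "undefined" && browser.webRequest) {
  browser.webRequest.onBeforeRequest.addListener(
    (details: RequestDetails) => ({ cancel: shouldBlock(details) }),
    { urls: ["<all_urls>"] },
    ["blocking"]
  );
}
```

Keeping the decision in a plain function makes it easy to combine `thirdParty` with other signals, such as a filter list, and to test it outside the browser.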

Doubling down on security

On the road to Manifest v3, we also recently announced the possibility to test our new content security policy for content scripts. The linked blog post will fill you in on all the information you need to determine if this change will affect you.

More click metadata for browser- and pageActions

If your add-on has a browserAction or pageAction button, you can now provide additional ways for users to interact with them. We’ve added metadata information to the onClicked listener, specifically the keyboard modifier that was active and a way to differentiate between a left click or a middle click. When making use of these features in your add-on, keep in mind that not all users are accustomed to using keyboard modifiers or different mouse buttons when clicking on icons. You may need to guide your users through the new feature, or consider it a power-user feature.
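A minimal sketch of reading the click metadata follows. The second listener argument carries a `modifiers` array and a `button` number (0 for a left click, 1 for a middle click); the mapping from clicks to actions and the names `ClickAction` and `chooseAction` are purely illustrative assumptions.

```typescript
// Assumption: `browser` is the WebExtension global available in extension scripts.
declare const browser: any;

// Shape of the click metadata passed as the second listener argument.
interface OnClickData {
  modifiers: string[]; // e.g. "Shift", "Alt", "Command", "Ctrl", "MacCtrl"
  button: number;      // 0 for a left click, 1 for a middle click
}

type ClickAction = "open-in-background" | "open-options" | "default";

// Hypothetical mapping from click metadata to an add-on action.
function chooseAction(info?: OnClickData): ClickAction {
  if (!info) return "default"; // older Firefox versions pass only the tab
  if (info.button === 1) return "open-in-background";
  if (info.modifiers.indexOf("Shift") !== -1) return "open-options";
  return "default";
}

// Register the listener only when running inside an extension.
if (typeof browser !== "undefined" && browser.browserAction) {
  browser.browserAction.onClicked.addListener((tab: unknown, info?: OnClickData) => {
    console.log("toolbar button action:", chooseAction(info));
  });
}
```

Falling back to the default action when no metadata is present keeps the plain left click working for users who never discover the modifier shortcuts.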

Changing storage.local using the developer tools

In Firefox 70 we reported that the storage inspector will be able to show keys from browser.storage.local. Initially the data was read-only, but since Firefox 72 we also have limited write support. We hope this will allow you to better debug your add-ons.

Miscellaneous

- The captivePortal API now provides access to the canonicalURL property. This URL is requested to detect the captive portal state and defaults to http://detectportal.firefox.com/success.txt

- The browserSettings API now supports the onChange listener, allowing you to react accordingly if browser features have changed.

- Extension files with the .mjs extension, commonly used with ES6 modules, will now correctly load. You may come across this when using script tags, for example.

A shout out goes to contributors Mélanie Chauvel, Trishul Goel, Myeongjun Go, Graham McKnight and Tom Schuster for fixing bugs in this version of Firefox. Also we’ve received a patch from James Jahns from the MSU Capstone project. I would also like to thank the numerous staff members from different corners of Mozilla who have helped to make extensions in Firefox 72 a success. Kudos to all of you!

The post Extensions in Firefox 72 appeared first on Mozilla Add-ons Blog.

https://blog.mozilla.org/addons/2020/01/22/extensions-in-firefox-72/

|

|

The Firefox Frontier: Firefox Extension Spotlight: Privacy Badger |

People can’t be expected to understand all of the technically complex ways their online behavior is tracked by hidden entities. As you casually surf the web, there are countless techniques … Read more

The post Firefox Extension Spotlight: Privacy Badger appeared first on The Firefox Frontier.

https://blog.mozilla.org/firefox/firefox-extension-privacy-badger/

|

|

Mozilla Open Policy & Advocacy Blog: What could an “Open” ID system look like?: Recommendations and Guardrails for National Biometric ID Projects |

Digital ID systems are increasingly the battlefield where the fight for privacy, security, competition, and social inclusion is playing out. In our ever more connected world, some form of identity is almost always mediating our interactions online and offline. This ranges from the corporate giants that dominate our online lives with services like Apple ID and Facebook and Google’s login systems to government IDs, which are increasingly required to vote, access welfare benefits and loans, pay taxes, use transportation, or access medical care.

Part of the push to adopt digital ID comes from the international development community, which argues that this is necessary in order to expand access to legal ID. The UN Sustainable Development Goals (SDGs) call for “providing legal identity for all, including birth registration” by 2030. Possessing legal identity is increasingly a precondition to accessing basic services and entitlements from both state and private services. For the most marginalised communities, using digital ID systems to access these essential services and entitlements is often one of their first interactions with digital technologies. Without these commonly recognized forms of official identification, individuals are at risk of exclusion and denial of services. However, the conflation of digital identity with (or as an extension of) “legal identity”, especially by the international development community, has led to an often uncritical embrace of digital ID projects.

In this white paper, we survey the landscape around government digital ID projects and biometric systems in particular. We recommend several policy prescriptions and guardrails for these systems, drawing heavily from our experiences in India and Kenya.

In designing, implementing, and operating digital ID systems, governments must make a series of technical and policy choices. It is these choices that largely determine if an ID system will be empowering or exploitative and exclusionary. While several organizations have published principles around digital identity, too often they don’t act as a meaningful constraint on the relentless push to expand digital identity around the world. In this paper, we propose that openness provides a useful framework to guide and critique these choices and to ensure that identity systems put people first. Specifically, we examine and make recommendations around five elements of openness: multiplicity of choices, decentralization, accountability, inclusion, and participation.

- Openness as in multiplicity of choices: There should be a multiplicity of choices with which to identify aspects of one’s identity, rather than the imposition of a single and rigid ID system across purposes. The consequences of insisting on a single ID can be dire. As the experiences in India and Peru demonstrate, not having a particular ID or failure of authentication via that ID can lead to denial of essential services or welfare for the most vulnerable.

- Openness as in decentralisation: Centralisation of sensitive biometric data presents a single point of failure for malicious attacks. Centralisation of authentication records can also amplify the surveillance capability of those entities that have visibility into the records. Digital IDs should, therefore, be designed to prevent their use as a tool to amplify government and private surveillance. When national IDs are mandatory for accessing a range of services, the resulting authentication record can be a powerful tool to profile and track individuals.

- Openness as in accountability: Legal and technical accountability mechanisms must bind national ID projects. Data protection laws should be in force, with a strong regulator in place, before the rollout of any national biometric ID project. National ID systems should also be technically auditable by independent actors to ensure trust and security.

- Openness as in inclusion: Governments must place equal emphasis on ensuring individuals are not denied essential services simply because they lack that particular ID or because the system didn’t work, as well as ensuring individuals have the ability to opt-out of certain uses of their ID. This is particularly vital for those marginalised in society who might feel the most at risk of profiling and will value the ability to restrict the sharing of information across contexts.

- Openness as in participation: Governments must conduct wide-ranging consultation on the technical, legal, and policy choices involved in the ID systems right from the design stage of the project. Consultation with external experts and affected communities will allow for critical debate over which models are appropriate, if any. This should include transparency in vendor procurement, given the sensitivity of personal data involved.

Read the white paper here: Mozilla Digital ID White Paper

The post What could an “Open” ID system look like?: Recommendations and Guardrails for National Biometric ID Projects appeared first on Open Policy & Advocacy.

|

|

Hacks.Mozilla.Org: The Mozilla Developer Roadshow: Asia Tour Retrospective and 2020 Plans |

It’s a wrap!

November 2019 was a busy month for the Mozilla Developer Roadshow, with stops in five Asian cities: Tokyo, Seoul, Taipei, Singapore, and Bangkok. Today, we’re releasing a playlist of the talks presented in Asia.

We are extremely pleased to include subtitles for all these talks in languages spoken in the countries on this tour: Japanese, Korean, Chinese, Thai, as well as English. One talk, Hui Jing Chen’s “Making CSS from Good to Great: The Power of Subgrid”, was delivered in Singlish (a Singaporean creole) at the event in Singapore!

In addition, because our audiences included non-native English speakers, presenters took care to include local language vocabulary in their talks, wherever applicable, and to speak slowly and clearly. We hope to continue to provide multilingual support for our video content in the future, to increase access for all developers worldwide.

Mozillians Ali Spivak, Hui Jing Chen, Kathy Giori, and Philip Lamb presented at all five stops on the tour.

Additional speakers Karl Dubost, Brian Birtles, and Daisuke Akatsuka joined us for the sessions in Tokyo and Seoul.

Dev Roadshow Asia talk videos (all stops):

Ali Spivak – Introduction: What’s New at Mozilla

Hui Jing Chen – Making CSS from Good to Great: The Power of Subgrid

Philip Lamb – Developing for Mixed Reality on the Open Web Platform

Kathy Giori – Mozilla WebThings: Manage Your Own Private Smart Home Using FOSS and Web Standards

Tokyo and Seoul:

Karl Dubost and Daisuke Akatsuka – Best Viewed With… Let’s Talk about WebCompatibility

Brian Birtles – 10 things I hate about Web Animation (and how to fix them)

The Dev Roadshow took Center Stage (literally!) at Start Up Festival, one of the largest entrepreneurship events in Taiwan. Mozilla Taiwan leaders Stan Leong, VP for Emerging Markets, and Max Liu, Head of Engineering for Firefox Lite joined us to share their perspectives on why Mozilla and Firefox matter for developers in Asia. Our video playlist includes an additional interview with Stan Leong at Mozilla’s Taipei office.

Taiwan videos:

Interview with Stan Leong

Stan Leong – The state of developers in the Asia Pacific region, and Mozilla’s outreach and impact within this region

Max Liu – Learn more about Firefox Lite!

Venetia Tay and Sri Subramanian joined us in Singapore, at the Grab offices high above Marina One Towers.

Singapore videos:

Venetia Tay – Designing for User-centered Privacy

Sriraghavan Subramanian – Percentage Rollout for Single Page web applications

Hui Jing Chen – Making CSS from Good to Great: The Power of Subgrid (Singlish version)

In Asia, we kept the model of past Dev Roadshows. Again, our goal was to meet with local developer communities and deliver free, high-quality, relevant technical talks on topics relevant to Firefox, Mozilla and the web.

At every destination, developers shared unique perspectives on their needs. We learned a lot. In some communities, concern for security and privacy is not a top priority. In other locations, developers have extremely limited influence and autonomy in selecting tools or frameworks to use in their work. We realized that sometimes the “best” solutions are out of reach due to factors beyond our control.

Nevertheless, all the developers we spoke to, across all the locales we visited, expressed a strong desire to support diversity in their communities. Everyone we met championed the value of inclusion: attracting more people with diverse backgrounds and growing community, positively.

The Mozilla DevRel team is planning what’s ahead for our Developer Roadshow program in 2020. One of our goals is to engage even more deeply with local developer community leaders and speakers in the year ahead. We’d like to empower dev community leaders and speakers to organize and produce Roadshow-style events in new locations. We’re putting together a program and application process (open February 2020; watch here and on our @MozHacks Twitter account for updates), and will share more information soon!

The post The Mozilla Developer Roadshow: Asia Tour Retrospective and 2020 Plans appeared first on Mozilla Hacks - the Web developer blog.

|

|

Mozilla Security Blog: CRLite: Speeding Up Secure Browsing |

CRLite pushes bulk certificate revocation information to Firefox users, reducing the need to actively query such information one by one. Additionally this new technology eliminates the privacy leak that individual queries can bring, and does so for the whole Web, not just special parts of it. The first two posts in this series about the newly-added CRLite technology provide background: Introducing CRLite: All of the Web PKI’s revocations, compressed and The End-to-End Design of CRLite.

Since mid-December, our pre-release Firefox Nightly users have been evaluating our CRLite system while performing normal web browsing. Gathering information through Firefox Telemetry has allowed us to verify the effectiveness of CRLite.

The questions we particularly wanted to ask about Firefox when using CRLite are:

- What were the results of checking the CRLite filter?

  - Did it find the certificate was too new for the installed CRLite filter?

  - Was the certificate valid, revoked, or not included?

  - Was the CRLite filter unavailable?

- How quickly did the CRLite filter check return compared to actively querying status using the Online Certificate Status Protocol (OCSP)?

How Well Does CRLite Work?

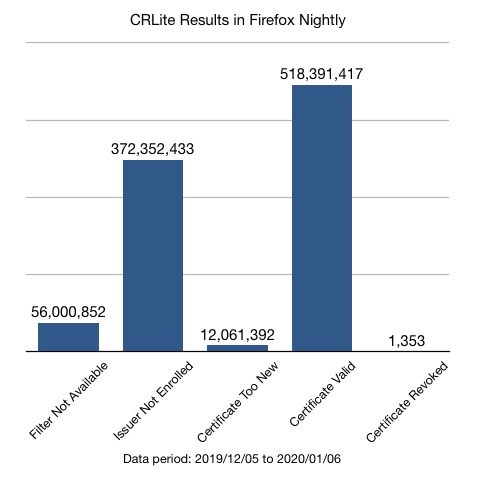

With Telemetry enabled in Firefox Nightly, each invocation of CRLite emits one of these results:

- Certificate Valid, indicating that CRLite authoritatively returned that the certificate was valid.

- Certificate Revoked, indicating that CRLite authoritatively returned that the certificate was revoked.

- Issuer Not Enrolled, meaning the certificate being evaluated wasn’t included in the CRLite filter set, likely because the issuing Certificate Authority (CA) did not publish CRLs.

- Certificate Too New, meaning the certificate being evaluated was newer than the CRLite filter.

- Filter Not Available, meaning that the CRLite filter either had not yet been downloaded from Remote Settings, or had become so stale as to be out-of-service.

Immediately, one sees that over 54% of secure connections (500M) could have benefited from the improved privacy and performance of CRLite for Firefox Nightly users.

Of the other data:

- We plan to publish updates up to 4 times per day, which will reduce the incidence of the Certificate Too New result.

- The Filter Not Available bucket correlates well with independent telemetry indicating a higher-than-expected level of download issues retrieving CRLite filters from Remote Settings; work to improve that is underway.

- Certificates Revoked but used actively on the Web PKI are, and should be, rare. This number is in-line with other Firefox Nightly telemetry for TLS connection results.

How Much Faster is CRLite?

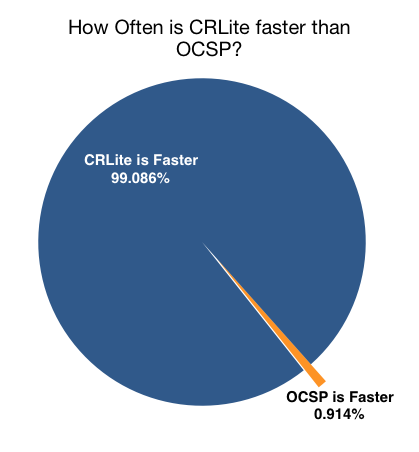

In contrast to OCSP which requires a network round-trip to complete before a web page can load, CRLite needs only to perform a handful of hash calculations and memory or disk lookups. We expected that CRLite would generally outperform OCSP, but to confirm we added measurements and let OCSP and CRLite race each other in Firefox Nightly.

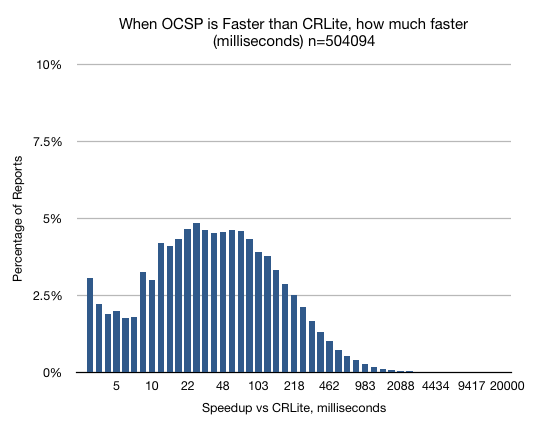

Over the month of data, CRLite was faster to query than OCSP 98.844% of the time.
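To make the "handful of hash calculations and memory lookups" concrete, here is a toy sketch of a Bloom filter cascade, the data structure described in the earlier posts of this series. It is not Firefox's implementation: the hash function (a seeded FNV-1a), the filter sizes, the serial-number strings, and all function names are assumptions chosen for illustration.

```typescript
const HASHES_PER_KEY = 2;

// 32-bit multiply that stays exact in double-precision arithmetic.
function mul32(a: number, b: number): number {
  const low = (a & 0xffff) * b;
  const high = (((a >>> 16) * b) & 0xffff) << 16;
  return (low + high) >>> 0;
}

// Seeded FNV-1a hash of a string, reduced to a bit index in [0, size).
function bitIndex(key: string, seed: number, size: number): number {
  let h = (2166136261 ^ seed) >>> 0;
  for (let i = 0; i < key.length; i++) {
    h = mul32((h ^ key.charCodeAt(i)) >>> 0, 16777619);
  }
  return h % size;
}

class BloomFilter {
  private bits: boolean[] = [];
  private size: number;
  private seed: number;

  constructor(size: number, seed: number) {
    this.size = size;
    this.seed = seed;
    for (let i = 0; i < size; i++) this.bits.push(false);
  }

  add(key: string): void {
    for (let j = 0; j < HASHES_PER_KEY; j++) {
      this.bits[bitIndex(key, this.seed * HASHES_PER_KEY + j, this.size)] = true;
    }
  }

  has(key: string): boolean {
    for (let j = 0; j < HASHES_PER_KEY; j++) {
      if (!this.bits[bitIndex(key, this.seed * HASHES_PER_KEY + j, this.size)]) return false;
    }
    return true;
  }
}

// Level 0 holds the revoked set. Each further level holds the false
// positives of the previous level, alternating between the two sets.
function buildCascade(revoked: string[], valid: string[]): BloomFilter[] {
  const levels: BloomFilter[] = [];
  let include = revoked;
  let exclude = valid;
  while (include.length > 0) {
    if (levels.length >= 64) throw new Error("cascade did not converge");
    const filter = new BloomFilter(16 * include.length + 31, levels.length);
    include.forEach((key) => filter.add(key));
    const falsePositives = exclude.filter((key) => filter.has(key));
    levels.push(filter);
    exclude = include;
    include = falsePositives;
  }
  return levels;
}

// Exact for every key that was enrolled when the cascade was built.
function isRevoked(levels: BloomFilter[], key: string): boolean {
  for (let i = 0; i < levels.length; i++) {
    if (!levels[i].has(key)) return i % 2 === 1;
  }
  return levels.length % 2 === 1;
}

const cascade = buildCascade(["serial-01", "serial-02"], ["serial-10", "serial-11", "serial-12"]);
console.log(isRevoked(cascade, "serial-01"), isRevoked(cascade, "serial-10"));
```

A lookup touches only a few bits per level and needs no network access, which is the property the race against OCSP measures. Certificates that were not enrolled when the filter was built cannot be answered reliably by such a structure, which matches the separate Issuer Not Enrolled and Certificate Too New results.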

CRLite is Faster 99% of the Time

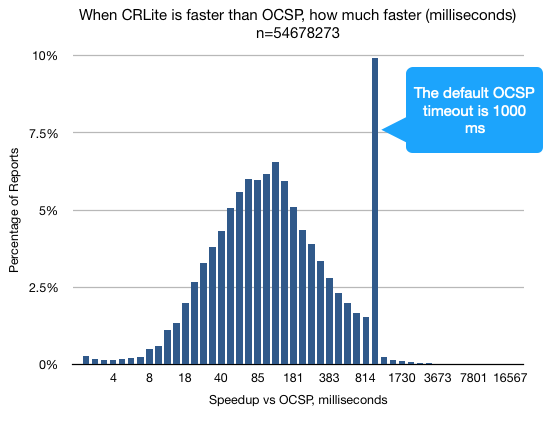

The speedup of CRLite versus OCSP was rather stark; 56% of the time, CRLite was over 100 milliseconds faster than OCSP, which is a substantial and perceptible improvement in browsing performance.

Figure 3: Distribution of occurrences where CRLite outperformed OCSP, which was 99% of CRLite operations. [source]

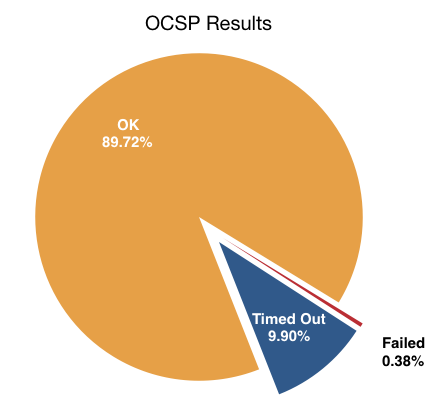

Consistent with that outlier at the timeout, our OCSP telemetry probe shows that over the same period, 9.9% of OCSP queries timed out:

Figure 4: Results of Live OCSP queries in Firefox Nightly [source]

The 1% When OCSP is Faster

In the 500k cases where OCSP was faster than CRLite, it was generally not much faster: on 50% of these occasions it was less than 40 milliseconds faster. On only 20% of the occasions was OCSP 100 milliseconds faster.

Figure 5: Distribution of occurrences where OCSP outperformed CRLite, which was 1% of CRLite operations. [source]

Much Faster, More Private, and Still Secure

Our study confirmed that CRLite will maintain the integrity of our live revocation checking mechanisms while also speeding up TLS connections.

At this point it’s clear that CRLite lets us keep checking certificate revocations in the Web PKI without compromising on speed, and the remaining areas for improvement lie in shrinking our update files closer to the ideal described in the original CRLite paper.

In the upcoming Part 4 of this series, we’ll discuss the architecture of the CRLite back-end infrastructure, the iteration from the initial research prototype, and interesting challenges of working in a “big data” context for the Web PKI.

In Part 5 of this series, we will discuss what we’re doing to make CRLite as robust and as safe as possible.

The post CRLite: Speeding Up Secure Browsing appeared first on Mozilla Security Blog.

https://blog.mozilla.org/security/2020/01/21/crlite-part-3-speeding-up-secure-browsing/

|

|

The Firefox Frontier: How to set Firefox as your default browser on Windows |

During a software update, your settings can sometimes change or revert back to their original state. For example, if your computer has multiple browsers installed, you may end up with … Read more

The post How to set Firefox as your default browser on Windows appeared first on The Firefox Frontier.

https://blog.mozilla.org/firefox/how-to-set-firefox-as-your-default-browser-on-windows/

|

|

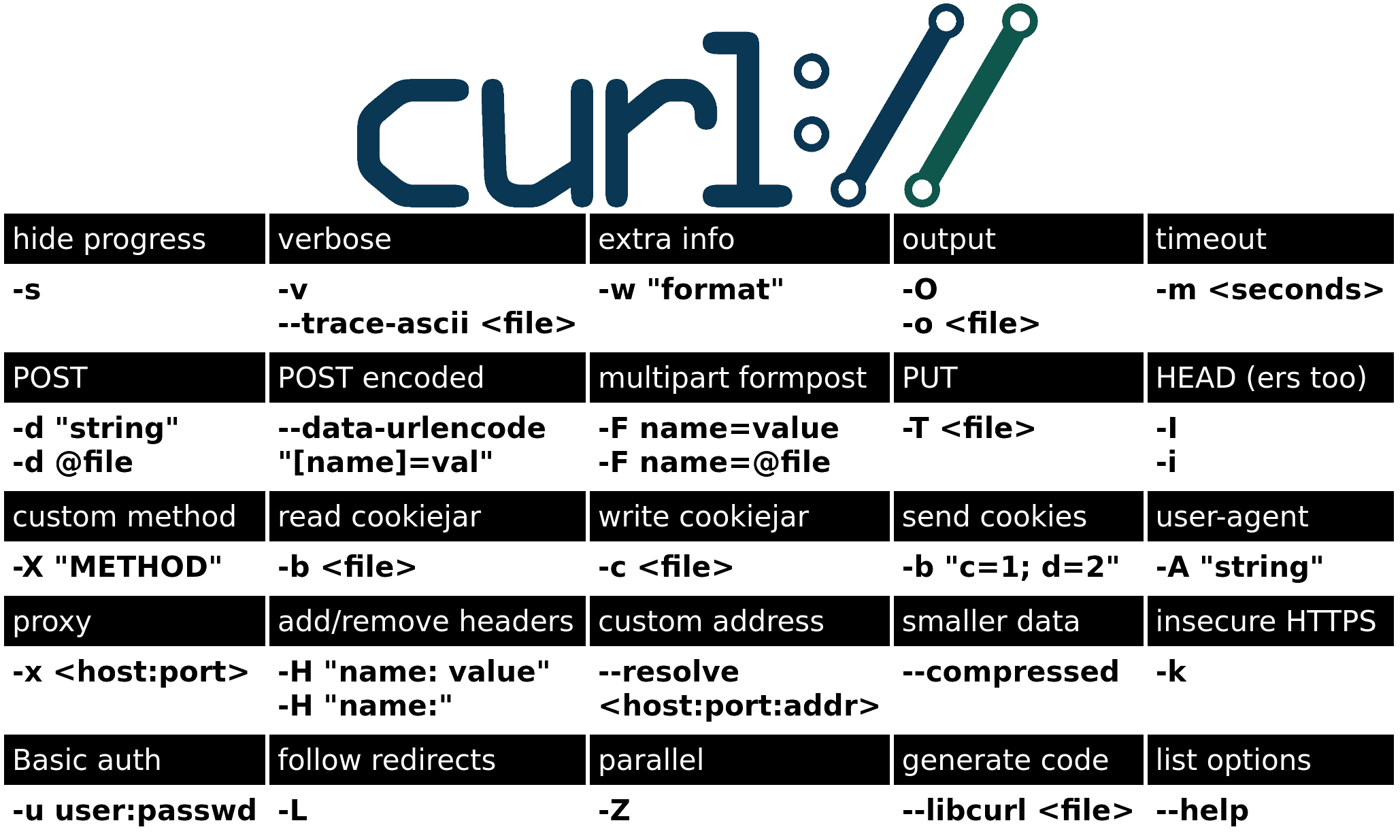

Daniel Stenberg: curl cheat sheet refresh |

Several years ago I made a first version of a “curl HTTP cheat sheet” to facilitate the most common curl command line options when working with HTTP.

This has now been refreshed after I took lots of feedback from friends on twitter, and then worked on rearranging the lines and columns so that it got as compact as possible without sacrificing readability (too much).

See the updated online version here.

The plan is to make stickers out of this – and possibly t-shirts as well. I did some test prints and deemed that with a 125 mm width, all the text is still clearly readable.

If things go well, I’ll hand out these beauties at curl up 2020 and of course leftovers will then be given away to whoever I run into at other places and conferences where I bring stickers…

https://daniel.haxx.se/blog/2020/01/20/curl-cheat-sheet-refresh/

|

|

Daniel Stenberg: curl ootw: -m, --max-time |

(Previous option of the week posts.)

This is the lowercase -m. The long form is called --max-time.

One of the oldest command line options in the curl tool box. It existed already in the first curl release ever: 4.0.

This option also requires a numerical argument. That argument can be an integer number or a decimal number, and it specifies the maximum time – in seconds – that you allow the entire transfer to take.

The intended use for this option is basically to provide a means for a user to stop a transfer that stalls or hangs for a long time due to whatever reason. Since transfers may sometimes be very quick and sometimes be ridiculously slow, often due to factors you cannot control or know about locally, figuring out a sensible maximum time value to use can be challenging.

Since -m covers the whole operation, even a slow name resolve phase can trigger the timeout if you set it too low. Setting a very low max-time value is almost guaranteed to occasionally abort otherwise perfectly fine transfers.

If the transfer is not completed within the given time frame, curl will return with error code 28.

Examples

Do the entire transfer within 10 seconds or fail:

curl -m 10 https://example.com/

Complete the download of the file within 2.25 seconds or return an error:

curl --max-time 2.25 https://example.com

Caveat

Due to restrictions in underlying system functions, some curl builds cannot specify -m values under one second.

Related

Related, and often better, options to use include --connect-timeout and the --speed-limit and --speed-time combo.
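When curl is driven from a program, the timeout options and the exit code can be handled in one place. The following is a small sketch under stated assumptions: the helper names `curlArgs` and `timedOut` and the `TimeoutOptions` type are hypothetical, and only `--max-time`, `--connect-timeout` and exit code 28 come from the text above.

```typescript
// curl's exit code when -m / --max-time expires, as described above.
const CURL_TIMEOUT_EXIT_CODE = 28;

interface TimeoutOptions {
  maxTime: number;         // seconds allowed for the whole transfer
  connectTimeout?: number; // seconds allowed for the connection phase
}

// Build the argument list for a curl invocation (hypothetical helper).
function curlArgs(url: string, opts: TimeoutOptions): string[] {
  if (!(opts.maxTime > 0)) {
    throw new Error("maxTime must be a positive number of seconds");
  }
  const args = ["--max-time", String(opts.maxTime)];
  if (opts.connectTimeout !== undefined) {
    args.push("--connect-timeout", String(opts.connectTimeout));
  }
  args.push(url);
  return args;
}

// Tell a timeout apart from other failures after the process exits.
function timedOut(exitCode: number): boolean {
  return exitCode === CURL_TIMEOUT_EXIT_CODE;
}

console.log(["curl"].concat(curlArgs("https://example.com/", { maxTime: 10 })).join(" "));
```

The resulting array can be passed to whatever process-spawning API the host environment offers, and the exit status fed to `timedOut` to decide whether a retry with a larger value makes sense.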

https://daniel.haxx.se/blog/2020/01/20/curl-ootw-m-max-time/

|

|

Karl Dubost: Week notes - 2020 w03 - worklog - Murphy |

Work

Basically, I spent most of the week dealing with coding the new workflow for anonymous bug reporting. And we (Guillaume, Ksenia, Mike and myself) made good progress. I believe we will be ready for Berlin All Hands.

Also GitHub decided to revive our anonymous bugs, around 39,000 bugs are back. We haven't yet reactivated our anonymous reporting.

Next week I will be working on the last bit that will allow us to restart the normal process.

Oh! Before I forget, a shout-out to Ksenia. When I was reviewing one of her pull requests, I discovered and explored a couple of things I didn't know in the unittest.mock Python module. That is one thing we forget too often: reviewing code is a way to learn cool stuff.

On Friday, I left the code aside and did a bit of diagnosis, so the pile of bugs doesn't grow insanely, especially with the Berlin events coming.

Murphy

Also, as Murphy's law would have it, last week's bad omens kept creeping into this week. My personal VMs just decided not to reboot, and basically I lost everything: this blog (Pelican-driven with markdown files), my personal blog, a couple of other sites, and my personal email server. So next week, I'll have to rebuild things at night. I have backups of the blog content, and emails are under IMAP on my local machine. The joy of having everything as static content is that it makes things a lot simpler.

That's why this post is published later rather than sooner.

Mozilla lay-offs

Let's address the elephant in the room.

By now, everyone knows the sad news. 70 persons that you met, crossed paths with, worked with. 70 persons who shared a desire to make a better place for the Web. My social upbringing (fight for your workers' rights) is making things difficult. I find the outpouring of help wonderful, but at the same time, I can't help thinking that there would not be a #WalmartLifeBoat type of support for people who need to find jobs so as not to be on the street the day after. The value of Syndicats de salariés (loosely translated as trade unions in the USA, but really with a completely different culture) and the laws protecting one's job in France are essential. Every day working in a North American job culture makes you realize this.

All the best to my coworkers who were forced to leave. It's always a sad moment. Every individual circumstance is unique, emotionally, economically, etc. and if I can do anything, tell me.

Otsukare!

|

|

Karl Dubost: Week notes - 2020 w02 - worklog - Fixing the mess |

What a week!

webcompat.com: Project belt-on.

So last week, on Friday (Japanese time), I woke up with a website being half disabled and then completely disabled. We had been banned by GitHub because of illegal content we failed to flag early enough. And GitHub did what they should do.

Oh… and last but not least… mike asked me what Belt-on meant. I guess so let's make it more explicit.

Now we need to fix our own mess, that will take a series of steps. Some of them are already taken.

- Right now, anonymous reporting is disabled. And it will not come back as it was. Done

- The only way to allow anonymous reporting again would be to switch to a moderation-first model, making a report public only once it has been properly reviewed. ToDo

- We also probably need to remove inline screenshot images. Currently the images are shown directly to users. On Webcompat.com, our nsfw tag blurs images, but this has no effect on github obviously as we do not control the CSS there. ToDo

- Remove illegal content. Done

- Discussions have started on how we need to handle the future workflow for anonymous bug reports.

- Created a diagram for a solution which doesn't involve URL redirections in case of moderation.

Berlin All Hands

- Berlin All Hands will be a very interesting work week for the Webcompat team, with probably a lot of new things to think about, and probably for the best of the webcompat.com experience. Incidents can become the source of improvements.

Misc

- The list of 2020Q1 OKRs has been started.

- Published some notes about reporting on webcompat.com to help me figure out a plan for the new anonymous report workflow, aka stating the obvious.

- A bit of diagnosis on Thursday and Friday.

- I might have discovered a bug in the Responsive Design Mode related to HTTP redirection and User Agent String.

- This will be published later, because Murphy hits again and my own server is also down.

Reading

- Interesting read about making the meta viewport the default value. I wonder which breakage impact it could have on Web compatibility. Some websites dynamically test if a meta viewport is already available in the markup and insert it or not dynamically based on user agent strings or screen sizes. Thomas reminded me of the discussion going on about @viewport

- Release Notes for Safari Technology Preview 98

Otsukare!

|

|

Mike Hommey: Announcing git-cinnabar 0.5.3 |

Git-cinnabar is a git remote helper to interact with mercurial repositories. It lets you clone, pull and push from/to mercurial remote repositories, using git.

These release notes are also available on the git-cinnabar wiki.

What’s new since 0.5.2?

- Updated git to 2.25.0 for the helper.

- Fixed small memory leaks.

- Combinations of remote ref styles are now allowed.

- Added a git cinnabar unbundle command that allows importing a mercurial bundle.

- Experimental support for python >= 3.5.

- Fixed erroneous behavior of git cinnabar {hg2git,git2hg} with some forms of abbreviated SHA1s.

- Fixed handling of the GIT_SSH environment variable.

- Don’t eliminate closed tips when they are the only head of a branch.

- Better handle manifests with double slashes created by hg convert from Mercurial < 2.0.1, and the following updates to those paths with normal Mercurial operations.

- Fix compatibility with Mercurial libraries >= 3.0, < 3.4.

- Windows helper is now statically linked against libcurl.

|

|

Armen Zambrano: This post focuses on the work I accomplished as part of the Treeherder team during the last half… |

This post focuses on the work I accomplished as part of the Treeherder team during the last half of last year.

Some work I already blogged about:

Deprecated the taskcluster-treeherder service

The Taskcluster team requested that we stop ingesting tasks from the taskcluster-treeherder service and instead use the official Taskcluster Pulse exchanges (see work in bug 1395254). This required rewriting their code from JavaScript to Python and integrating it into the Treeherder project. This needed to be accomplished by July since the Taskcluster migration would leave the service in an old set up without much support. Integrating this service into Treeherder gives us more control over all Pulse messages getting into our ingestion pipeline without an intermediary service. The project was accomplished ahead of the timeline. The impact is that the Taskcluster team had one less system to worry about ahead of their GCP migration.

Create performance dashboard for AWSY

In September I created a performance dashboard for the Are We Slim Yet project (see work in bug 1550235). This was not complicated because all I had to do was to branch off the existing Firefox performance dashboard I wrote last year and deploy it on a different Netlify app.

One thing I did not do at the time is to create different configs between the two branches. This would make it easier to merge changes from the master branch to the awsy branch without conflicts.

On the bright side, Divyanshu came along and fixed the issue! We can now use an env variable to start AWFY & another for AWSY. No need for two different branches!

Miscellaneous

Other work I did was to create a compare_pushes.py script that allows you to compare two different instances of Treeherder to determine if the ingestion of jobs for a push is different.

I added a management command to ingest all Taskcluster tasks from a push, an individual task/push or all tasks associated to a Github PR. Up until this point, the only way to ingest this data would be by having the data ingestion pipeline set up locally before those tasks started showing up in the Pulse exchanges. This script is extremely useful for local development since you can test ingesting data with precise control and having

I added JavaScript code coverage for Treeherder through codecov.io and we have managed not to regress further since then. This was in response to the fact that most code backouts for Treeherder were due to frontend regressions. Being notified when we are regressing is useful for adjusting tests appropriately.

I maintain a regular schedule (twice a week) to release Treeherder production deployments. This guarantees that Treeherder would not have too many changes being deployed to production all at once (I remember the odd day when we had gone 3 weeks without any code being promoted).

I helped with planning the work for Treeherder for H2 of this year, H1 next year and I helped the CI-A’s planning for 2020.

I automated merging JavaScript dependency updates for Treeherder.

I created a process to share secrets safely within the team instead of using a Google Doc.

|

|

David Teller: Units of Measure in Rust with Refinement Types |

Years ago, Andrew Kennedy published a foundational paper about a type checker for units of measure, and later implemented it for F#. To this day, F# is the only mainstream programming language which provides first class support to make sure that you will not accidentally confuse meters and feet, euros and dollars, but that you can still convert between watts·hours and joules.

I decided to see whether this could be implemented in and for Rust. The answer is not only yes, but it was fun :)
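The Rust implementation itself is in the linked post and is not reproduced here. As a loose illustration of the underlying idea only, the sketch below uses TypeScript branded types, which is a different and much weaker technique than the refinement types the post describes. All names (`Quantity`, `Joules`, `WattHours`, the helper functions) are assumptions made for this example.

```typescript
// A number tagged with a unit name that exists only at the type level.
type Quantity<Unit extends string> = number & { readonly __unit: Unit };
type Joules = Quantity<"J">;
type WattHours = Quantity<"Wh">;

// Constructors: the only places where a bare number gains a unit.
const joules = (n: number): Joules => n as Joules;
const wattHours = (n: number): WattHours => n as WattHours;

// An explicit conversion between compatible units: 1 Wh is 3600 J.
function wattHoursToJoules(energy: WattHours): Joules {
  return joules(energy * 3600);
}

// Addition is only defined for operands carrying the same unit.
function addJoules(a: Joules, b: Joules): Joules {
  return joules(a + b);
}

const total = addJoules(joules(100), wattHoursToJoules(wattHours(2)));
console.log(total);

// The type checker rejects mixing units without a conversion, for example:
// addJoules(joules(100), wattHours(2));
```

Unlike a real units-of-measure checker, this approach cannot derive the unit of a product or quotient automatically. It only prevents one tagged number from being passed where another is expected.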

|

|

Mozilla Future Releases Blog: A brand new browsing experience arrives in Firefox for Android Nightly |

It’s been almost 9 years since we released the first Firefox for Android. Hundreds of millions of users have tried it and over time provided us with valuable feedback that allowed us to continuously improve the app, bringing more features to our users that increase their privacy and make their mobile lives easier. Now we’re starting a new chapter of the Firefox experience on Android devices.

Testing to meet the users’ needs

Back in school, most of us weren’t into tests. They were stressful and we’d rather be playing or hanging out with friends. As adults, however, we see the value of testing — especially when it comes to software: testing ensures that we roll out well-designed products to a wide audience that deliver on their intended purposes.

At Firefox, we have our users at heart, and the value our products provide to them is at the center of everything we do. That’s why we test a lot. It’s why we make our products available as Nightly (an early version for developers) and Beta versions (a more stable preview of a new piece of software), put the Test Pilot program in place and sometimes, when we enter entirely new territory, we add yet another layer of user testing. It’s exactly that spirit that motivated us to launch Firefox Preview Beta in June 2019. Now we’re ready for the next step.

A new Firefox for Android: the making-of

When we started working on this project, we wanted to create a better Firefox for Android that would be faster, more reliable, and able to address today’s user problems. Plus, we wanted it to be based on our own mobile browser engine GeckoView in order to offer the highest level of privacy and security available on the Android platform. In short: we wanted to make sure that our users would never have to choose between privacy and a great browsing experience.

We had an initial idea of what that new Android product would look like, backed up by previous user research. And we were eager to test it, see how users feel about it, and find out what changes we needed to make and adjust accordingly. To minimize user disruption, early versions of this next generation browser were offered to early adopters as a separate application called Firefox Preview.

In order to ensure a fast, efficient and streamlined user experience, we spent the last couple of months narrowing down on what problems our users wanted us to solve, iterating on how we built and surfaced features to them. We looked closely at usage behaviour and user feedback to determine whether our previous assumptions had been correct and where changes would be necessary.

The feedback from our early adopters was overwhelmingly positive: the Firefox Preview Beta users loved the app’s fresh modern looks and the noticeably faster browsing experience due to GeckoView as well as new UI elements, such as the bottom navigation bar. When it came to tracking protection, we learned that Android users prefer a browsing experience with stricter protection and fewer distractions. That’s why we made Strict Mode the default in Firefox Preview Beta, while Firefox for Desktop comes with Standard Mode.

Firefox Preview Beta goes Nightly

Based on the previous 6 months of user testing and the positive feedback we have received, we’re confident that Android users will appreciate this new browsing experience and we’re very happy to announce that, as of Tuesday (January 21, 2020), we’re starting to roll it out to our existing Firefox for Android audience in the Nightly app. For current Nightly users, it’ll feel like a big exciting upgrade of their browsing experience once they update the app, either manually or automatically, depending on their preset update routine. New users can easily download Firefox Preview here.

As for next milestones, the brand new Firefox for Android will go into Beta in Spring 2020 and land in the main release later in the first half of this year. In the meantime, we’re looking forward to learning more about the wider user group’s perception of the new Firefox for Android as well as to more direct feedback, allowing us to deliver the best-in-class mobile experience that our users deserve.

The post A brand new browsing experience arrives in Firefox for Android Nightly appeared first on Future Releases.

|

|

Daniel Stenberg: You’re invited to curl up 2020: Berlin |

The annual curl developers conference, curl up, is being held in Berlin this year, on May 9-10, 2020.

Starting now, you can register for the event to be sure that you have a seat. The number of available seats is limited.

curl up is the main (and only?) event of the year where curl developers and enthusiasts get together physically in a room for a full weekend of presentations and discussions on topics that are centered around curl and its related technologies.

We move the event around to different countries every year to accommodate different crowds better and worse every year – and this time we’re back again in Germany – where we once started the curl up series back in 2017.

The events are typically small, with 20-30 people and a very friendly spirit.

Sign up

We will only be able to let you in if you have registered – and received a confirmation. There’s no fee – but if you register and don’t show, you lose karma.

The curl project can even help fund your travel and accommodation expenses (if you qualify). We really want curl developers to come!

Date

May 9-10 2020. We’ll run the event both days of that weekend.

Agenda

The program is not done yet and will not be so until just a week or two before the event, and then it will be made available => here.

We want as many voices as possible to be heard at the event. If you have done something with curl, want to do something with curl, have a suggestion etc – even just a short talk will be much appreciated. Or if you have a certain topic or subject you really want someone else to speak about, let us know as well!

Expect topics to be about curl, curl internals, Internet protocols, how to improve curl, what to not do in curl and similar.

Location

We’ll be in the co.up facilities in central Berlin, at Adalbertstrasse 8.

Planning and discussions on curl-meet

Everything about the event, planning for it, volunteering, setting it up, agenda bashing and more will be done on the curl-meet mailing list, dedicated for this purpose. Join in!

Anti Google?

If you have a problem with filling in the Google-hosted registration form, please email us at curlup@haxx.se and we’ll ask you for the information over email instead.

Credits

The images were taken by me, Daniel. The top one at the Nuremberg curl up in 2017, the in-article photo in Stockholm 2018.

https://daniel.haxx.se/blog/2020/01/16/youre-invited-to-curl-up-2020-berlin/

|

|

Nick Fitzgerald: Announcing Better Support for Fuzzing with Structured Inputs in Rust |

|

|

David Teller: Layoff survival guide |

If you’re reading these lines, you may have recently been laid off from your job. Or maybe, depending on your country and its laws, you’re waiting to know if you’re being laid off.

Well, I’ve been there and I’ve survived it, so, based on my experience, here are a few suggestions:

|

|

The Mozilla Blog: Readying for the Future at Mozilla |

Mozilla must do two things in this era: Continue to excel at our current work, while we innovate in the areas most likely to impact the state of the internet and internet life. From security and privacy network architecture to the surveillance economy, artificial intelligence, identity systems, control over our data, decentralized web and content discovery and disinformation — Mozilla has a critical role to play in helping to create product solutions that address the challenges in these spaces.

Creating the new products we need to change the future requires us to do things differently, including allocating resources for this purpose. We’re making a significant investment to fund innovation. In order to do that responsibly, we’ve also had to make some difficult choices which led to the elimination of roles at Mozilla which we announced internally today.

Mozilla has a strong line of sight on future revenue generation from our core business. In some ways, this makes this action harder, and we are deeply distressed about the effect on our colleagues. However, to responsibly make additional investments in innovation to improve the internet, we can and must work within the limits of our core finances.

We make these hard choices because online life must be better than it is today. We must improve the impact of today’s technology. We must ensure that the tech of tomorrow is built in ways that respect people and their privacy, and give them real independence and meaningful control. Mozilla exists to meet these challenges.

The post Readying for the Future at Mozilla appeared first on The Mozilla Blog.

https://blog.mozilla.org/blog/2020/01/15/readying-for-the-future-at-mozilla/

|

|

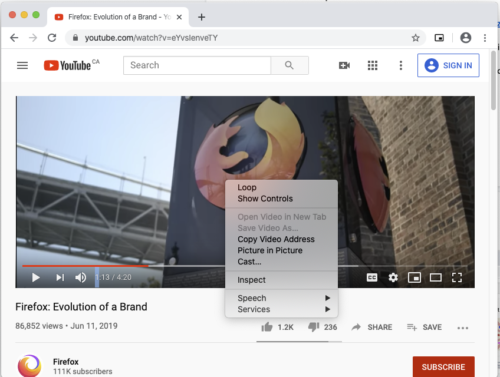

Hacks.Mozilla.Org: How we built Picture-in-Picture in Firefox Desktop with more control over video |

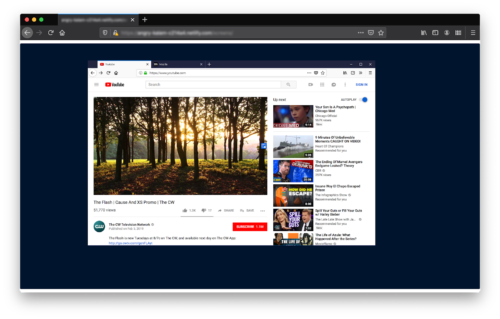

Picture-in-Picture support for videos is a feature that we shipped to Firefox Desktop users in version 71 for Windows users, and 72 for macOS and Linux users. It allows the user to pull a `<video>` element out into an always-on-top window, so that they can switch tabs or applications and keep the video within sight. This is ideal if, for example, you want to keep an eye on that sports game while also getting some work done.

As always, we designed and developed this feature with user agency in mind. Specifically, we wanted to make it extremely easy for our users to exercise greater control over how they watch video content in Firefox.

In these next few sections, we’ll talk about how we designed the feature and then we’ll go deeper into details of the implementation.

The design process

Look behind and all around

To begin our design process, we looked back at the past. In 2018, we graduated Min-Vid, one of our Test Pilot experiments. We asked the question: “How might we maximize the learning from Min-Vid?” Thanks to the amazing Firefox User Research team, we had enough prior research to understand the main pain points in the user experience. However, it was important to acknowledge that the competitive landscape had changed quite a bit since 2018. How were users and other browsers solving this problem already? What did users think about those solutions, and how could we improve upon them?

We had two essential guiding principles from the beginning:

- We wanted to turn this into a very user-centric feature, and make it available for any type of video content on the web. That meant that implementing the Picture-in-Picture spec wasn’t an option, as it requires developers to opt-in first.

- Given that it would be available on any video content, the feature needed to be discoverable and straightforward for as many people as possible.

Keeping these principles in mind helped us to evaluate all the different solutions, and was critical for the next phase.

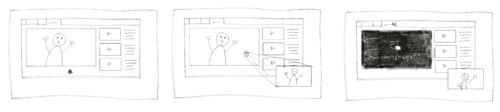

Try, and try again

Once we had an understanding of how others were solving the problem, it was our turn to try. We wanted to ensure discoverability without making the feature intrusive or annoying. Ultimately, we wanted to augment — and not disrupt — the experience of viewing video. And we definitely didn’t want to cause issues with any of the popular video players or platforms.

This led us to building an interactive, motion-based prototype using Framer X. Our prototype provided a very effective way to get early feedback from real users. In tests, we didn’t focus solely on usability and discoverability. We also took the time to re-learn the problems users are facing. And we learned a lot!

The participants in our first study appreciated the feature, and while it did solve a problem for them, it was too hard to discover on their own.

So, we rolled our sleeves up and tried again. We knew what we were going after, and we now had a better understanding of users’ basic expectations. We explored, brainstormed solutions, and discussed technical limitations until we had a version that offered discoverability without being intrusive. After that, we spent months polishing and refining the final experience!

Stay tuned

From the beginning, our users have been part of the conversation. Early and ongoing user feedback is a critical aspect of product design. It was particularly exciting to keep Picture-in-Picture in our Beta channel as we engaged with users like you to get your input.

We listened, and you helped us uncover new blind spots we might have missed while designing and developing. At every phase of this design process, you’ve been there. And you still are. Thank you!

Implementation detail

The Firefox Picture-in-Picture toggle exists in the same privileged shadow DOM space within the `<video>` element as the built-in `<video>` controls. Because this part of the DOM is inaccessible to page JavaScript and CSS stylesheets, it is much more difficult for sites to detect, disable, or hijack the feature.

Into the shadow DOM

Early on, however, we faced a challenge when making the toggle visible on hover. Sites commonly structure their DOM such that mouse events never reach a `<video>` that the user is watching.

Often, websites place transparent nodes directly over top of `<video>` elements. These can be used to show a preview image of the underlying video before it begins, or to serve an interstitial advertisement. Sometimes transparent nodes are used for things that only become visible when the user hovers over the player, such as custom player controls. In configurations like this, transparent nodes prevent the underlying `<video>` from matching the :hover pseudo-class.

Other times, sites make it explicit that they don’t want the underlying `<video>` to receive mouse events. To do this, they set the pointer-events CSS property to none on the `<video>` or one of its ancestors.

To work around these problems, we rely on the fact that the web page is being sent events from the browser engine. At Firefox, we control the browser engine! Before sending out a mouse event, we can check to see what sort of DOM nodes are directly underneath the cursor (re-using much of the same code that powers the elementsFromPoint function).

If any of those DOM nodes is a visible `<video>`, we tell that `<video>` that it is being hovered, which shows the toggle. Likewise, we use a similar technique to determine if the user is clicking on the toggle.
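The hit-testing idea described above can be sketched in a few lines. This is only an illustration, not Firefox's internal code: `HitNode` and `findHoveredVideo` are hypothetical names, and the plain objects stand in for the elements that a call such as `document.elementsFromPoint(x, y)` would return in a page, ordered topmost first.

```typescript
// Hypothetical stand-in for a DOM node; a real implementation would look at
// actual elements and their computed visibility.
interface HitNode {
  tagName: string;
  visible: boolean;
}

// Walk the nodes under the cursor (topmost first) and return the first
// visible <video>, ignoring any overlays stacked on top of it.
function findHoveredVideo(nodesUnderCursor: HitNode[]): HitNode | null {
  for (const node of nodesUnderCursor) {
    if (node.tagName.toUpperCase() === "VIDEO" && node.visible) {
      return node;
    }
  }
  return null;
}

// A transparent overlay sits above the video, as on many video sites.
const overlayStack: HitNode[] = [
  { tagName: "DIV", visible: true },
  { tagName: "VIDEO", visible: true },
  { tagName: "BODY", visible: true },
];
const hoveredVideo = findHoveredVideo(overlayStack);
console.log(hoveredVideo !== null ? "show toggle" : "no video under cursor");
```

Because the search looks through the whole stack instead of only the topmost node, an overlay or a `pointer-events: none` rule on the page does not hide the video from the check.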

We also use some simple heuristics based on the size, length, and type of video to determine if the toggle should be displayed at all. In this way, we avoid showing the toggle in cases where it would likely be more annoying than not.
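As a rough sketch of what such heuristics could look like: the field names and both thresholds below are assumptions made up for this example, and using the presence of an audio track as the "type" signal is likewise a guess. The post does not state the actual rules Firefox applies.

```typescript
// Hypothetical summary of the properties a heuristic might inspect.
interface VideoInfo {
  widthPx: number;
  heightPx: number;
  durationSecs: number; // Infinity could represent a live stream
  hasAudioTrack: boolean;
}

// Illustrative thresholds only; not taken from the Firefox source.
const MIN_SIDE_PX = 140;
const MIN_DURATION_SECS = 45;

// Show the toggle only for videos that are large enough, long enough,
// and not silent decorative clips.
function shouldShowToggle(video: VideoInfo): boolean {
  const bigEnough =
    video.widthPx >= MIN_SIDE_PX && video.heightPx >= MIN_SIDE_PX;
  const longEnough = video.durationSecs >= MIN_DURATION_SECS;
  return bigEnough && longEnough && video.hasAudioTrack;
}

const featureFilm: VideoInfo = {
  widthPx: 1280,
  heightPx: 720,
  durationSecs: 5400,
  hasAudioTrack: true,
};
const silentBackgroundLoop: VideoInfo = {
  widthPx: 1280,
  heightPx: 720,
  durationSecs: 8,
  hasAudioTrack: false,
};
console.log(shouldShowToggle(featureFilm), shouldShowToggle(silentBackgroundLoop));
```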

A browser window within a browser

The Picture-in-Picture player window itself is a browser window with most of the surrounding window decoration collapsed. Flags tell the operating system to keep it on top. That browser window contains a special `<video>` element that runs in the same process as the originating tab. The element knows how to show the frames that were destined for the original `<video>`. As with much of the Firefox browser UI, the Picture-in-Picture player window is written in HTML and powered by JavaScript and CSS.

Other browser implementations

Firefox is not the first desktop browser to ship a Picture-in-Picture implementation. Safari 10 on macOS Sierra shipped with this feature in 2016, and Chrome followed in late 2018 with Chrome 71.

In fact, each browser maker’s implementation is slightly different. In the next few sections we’ll compare Safari and Chrome to Firefox.

Safari

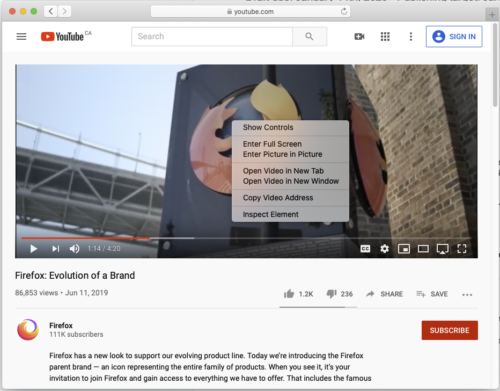

Safari’s implementation involves a non-standard WebAPI on `<video>` elements. Sites that know the user is running Safari can call video.webkitSetPresentationMode("picture-in-picture"); to send a video into the native macOS Picture-in-Picture window.

Safari includes a context menu item for `<video>` elements to open them in the Picture-in-Picture window. Unfortunately, this requires an awkward double right-click to access video on sites like YouTube that override the default context menu. This awkwardness is shared with all browsers that implement the context menu option, including Firefox.
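A site would normally feature-detect before calling the Safari-specific method. The sketch below assumes a structural type, `WebKitVideoLike`, so that it can run outside a browser; in a page the argument would be a real video element. The helper name `enterSafariPictureInPicture` and the mock object are made up for illustration, while `webkitSupportsPresentationMode` and `webkitSetPresentationMode` are the WebKit-prefixed methods Safari exposes.

```typescript
// Structural type covering only the WebKit-prefixed methods used here.
interface WebKitVideoLike {
  webkitSupportsPresentationMode?: (mode: string) => boolean;
  webkitSetPresentationMode?: (mode: string) => void;
}

// Returns true if the video was asked to enter Picture-in-Picture,
// false when the non-standard API is missing or the mode is unsupported.
function enterSafariPictureInPicture(video: WebKitVideoLike): boolean {
  if (
    typeof video.webkitSupportsPresentationMode === "function" &&
    typeof video.webkitSetPresentationMode === "function" &&
    video.webkitSupportsPresentationMode("picture-in-picture")
  ) {
    video.webkitSetPresentationMode("picture-in-picture");
    return true;
  }
  return false;
}

// Mock standing in for a Safari video element, recording requested modes.
const requestedModes: string[] = [];
const mockSafariVideo: WebKitVideoLike = {
  webkitSupportsPresentationMode: (mode: string): boolean =>
    mode === "picture-in-picture",
  webkitSetPresentationMode: (mode: string): void => {
    requestedModes.push(mode);
  },
};
const enteredPip = enterSafariPictureInPicture(mockSafariVideo);
console.log(enteredPip, requestedModes);
```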

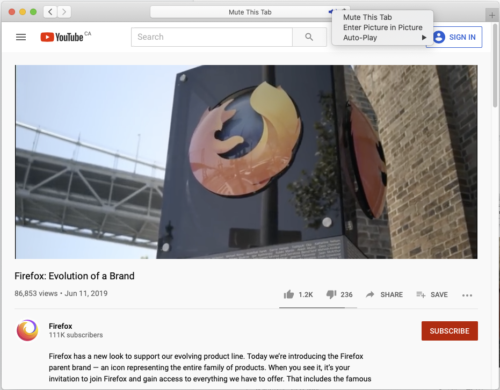

Safari users can also right-click on the audio indicator in the address bar or the tab strip to trigger Picture-in-Picture:

On newer MacBooks, Safari users might also notice the button immediately to the right of the volume-slider. You can use this button to open the currently playing video in the Picture-in-Picture window:

Safari also uses the built-in macOS Picture-in-Picture API, which delivers a very smooth integration with the rest of the operating system.

Comparison to Firefox

Despite this, we think Firefox’s approach has some advantages:

- When multiple videos are playing at the same time, the Safari implementation is somewhat ambiguous as to which video will be selected when using the audio indicator. It seems to be the most recently focused video, but this isn’t immediately obvious. Firefox’s Picture-in-Picture toggle makes it extremely obvious which video is being placed in the Picture-in-Picture window.

- Safari appears to have an arbitrary limitation on how large a user can make their Picture-in-Picture player window. Firefox’s player window does not have this limitation.

- There can only be one Picture-in-Picture window system-wide on macOS. If Safari is showing a video in Picture-in-Picture, and then another application calls into the macOS Picture-in-Picture API, the Safari video will close. Firefox’s window is Firefox-specific. It will stay open even if another application calls the macOS Picture-in-Picture API.

Chrome’s implementation

The PiP WebAPI and WebExtension

Chrome’s implementation of Picture-in-Picture mainly centers around a WebAPI specification being driven by Google. This API is currently going through the W3C standardization process. Superficially, this WebAPI is similar to the Fullscreen WebAPI. In response to user input (like clicking on a button), site authors can request that a `<video>` be put into a Picture-in-Picture window.

Like Safari, Chrome also includes a context menu option for `<video>` elements to open in a Picture-in-Picture window.

This proposed WebAPI is also used by a PiP WebExtension from Google. The extension adds a toolbar button. The button finds the largest video on the page, and uses the WebAPI to open that video in a Picture-in-Picture window.
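The "largest video on the page" selection can be illustrated as follows. This is a guess at the general shape of such logic, not the extension's actual source: `SizedVideo` and `pickLargestVideo` are hypothetical names, and the area comparison is an assumed definition of "largest".

```typescript
// Hypothetical description of a video's rendered size on the page.
interface SizedVideo {
  id: string;
  widthPx: number;
  heightPx: number;
}

// Return the video with the largest rendered area, or null if there is none.
function pickLargestVideo(videos: SizedVideo[]): SizedVideo | null {
  let largest: SizedVideo | null = null;
  for (const candidate of videos) {
    if (
      largest === null ||
      candidate.widthPx * candidate.heightPx > largest.widthPx * largest.heightPx
    ) {
      largest = candidate;
    }
  }
  return largest;
}

const pageVideos: SizedVideo[] = [
  { id: "sidebar-ad", widthPx: 300, heightPx: 250 },
  { id: "main-player", widthPx: 1280, heightPx: 720 },
  { id: "thumbnail-preview", widthPx: 160, heightPx: 90 },
];
const largestVideo = pickLargestVideo(pageVideos);
console.log(largestVideo !== null ? largestVideo.id : "no videos");
```

A selection rule like this leaves the user no way to pick one of the smaller videos, which is the limitation the comparison with Firefox's per-video toggle points out.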

Google’s WebAPI lets sites indicate that a `<video>` should not be openable in a Picture-in-Picture player window. When Chrome sees this directive, it doesn’t show the context menu item for Picture-in-Picture on the `<video>`, and the WebExtension ignores it. The user is unable to bypass this restriction unless they modify the DOM to remove the directive.
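A minimal sketch of how a site might use the proposed WebAPI, including the opt-out directive, is shown below. `PipVideoLike` and `tryRequestPictureInPicture` are hypothetical names introduced so the example can run without a browser. In a page, `pipEnabled` would come from `document.pictureInPictureEnabled`, the video would be a real element with its `disablePictureInPicture` property, and the call would need to happen in response to a user gesture such as a click.

```typescript
// Structural type covering the parts of the proposed API used here.
interface PipVideoLike {
  disablePictureInPicture: boolean;
  requestPictureInPicture: () => unknown;
}

// Ask for Picture-in-Picture unless the browser lacks support or the
// site has marked the video as not openable.
function tryRequestPictureInPicture(
  pipEnabled: boolean,
  video: PipVideoLike
): boolean {
  if (!pipEnabled || video.disablePictureInPicture) {
    return false;
  }
  video.requestPictureInPicture();
  return true;
}

// Mocks that count how often Picture-in-Picture was requested.
let pipRequestCount = 0;
const openableVideo: PipVideoLike = {
  disablePictureInPicture: false,
  requestPictureInPicture: () => {
    pipRequestCount += 1;
    return undefined;
  },
};
const optedOutVideo: PipVideoLike = {
  disablePictureInPicture: true,
  requestPictureInPicture: () => {
    pipRequestCount += 1;
    return undefined;
  },
};
const openedFirst = tryRequestPictureInPicture(true, openableVideo);
const openedSecond = tryRequestPictureInPicture(true, optedOutVideo);
console.log(openedFirst, openedSecond, pipRequestCount);
```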

Comparison to Firefox

Firefox’s implementation has a number of distinct advantages over Chrome’s approach:

- The Chrome WebExtension only targets the largest `<video>` on the page. In contrast, the Picture-in-Picture toggle in Firefox makes it easy to choose any `<video>` on a site to open in a Picture-in-Picture window.

- Users have access to this capability on all sites right now. Web developers and site maintainers do not need to develop, test and deploy usage of the new WebAPI. This is particularly important for older sites that are not actively maintained.

- Like Safari, Chrome seems to have an artificial limitation on how big the Picture-in-Picture player window can be made by the user. Firefox’s player window does not have this limitation.

- Firefox users have access to this Picture-in-Picture capability on all sites. Websites are not able to directly disable it via a WebAPI. This creates a more consistent experience for `<video>` elements across the entire web, and ultimately more user control.

Recently, Mozilla indicated that we plan to defer implementation of the WebAPI that Google has proposed. We want to see if the built-in capability we just shipped will meet the needs of our users. In the meantime, we’ll monitor the evolution of the WebAPI spec and may revisit our implementation decision in the future.

Future plans

Now that we’ve shipped the first version of Picture-in-Picture in Firefox Desktop on all platforms, we’re paying close attention to user feedback and bug intake. Your inputs will help determine our next steps.

Beyond bug fixes, we’d like to share some of the things we’re considering for future feature work:

- Repositioning the toggle when there are visible, clickable elements overlapping it.

- Supporting video captions and subtitles in the player window.

- Adding a playhead scrubber to the player window to control the current playing position of a `<video>`.

- Adding a control for the volume level of the `<video>` to the player window.

How are you using Picture-in-Picture?

Are you using the new Picture-in-Picture feature in Firefox? Are you finding it useful? Please let us know in the comments section below, or send us a Tweet with a screenshot! We’d love to hear what you’re using it for. You can also file bugs for the feature here.

The post How we built Picture-in-Picture in Firefox Desktop with more control over video appeared first on Mozilla Hacks - the Web developer blog.

https://hacks.mozilla.org/2020/01/how-we-built-picture-in-picture-in-firefox-desktop/

|

|